We're All Building a Single Digital Assistant

I want to talk about where I think all this personal AI stuff is going.

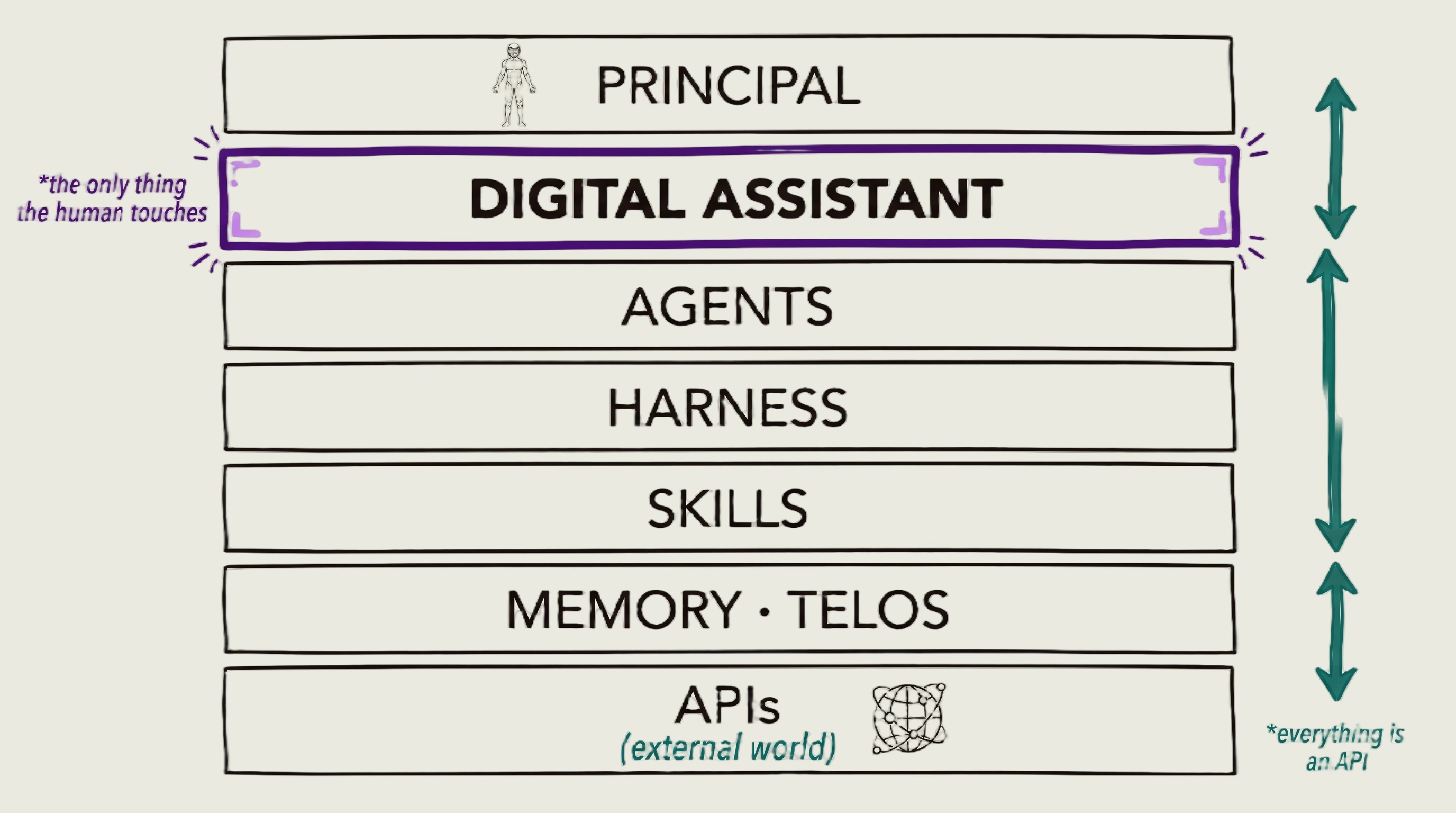

We've been talking about agents since 2025, and now we're talking about harnesses. I think all of this is heading in the same direction. I first wrote about this in 2016, which I'll come back to later, but I think it's all heading toward a single interface for handling everything AI-related.

A few pieces are still missing. The main one right now, I think, is that our AI systems don't have a single entity. They don't have a single identity or a single personality. And I think that is the interface we will move to.

I think a bunch of people have figured this out. I believe OpenAI is heading in this direction with some sort of device: they hired Jony Ive to work on some sort of wearable. The idea for them is to bypass the mobile infrastructure. They don't want to deal with Apple and the iPhone anymore. They want their own OS, essentially an AI OS that everything goes through, backed by all their own infrastructure.

But I think when we talk about harnesses, context engineering, prompt engineering, agents — we get stuck in the weeds. We're talking about: okay, what's the best agent framework? What are the best agents? What are the best skills? And I think the best way to think about this is to imagine all that stuff abstracted away.

Working backwards from the future

The way I like to think about this comes from my time at Apple, where we had this DNA (I believe we stole a lot of it from Amazon, actually): the concept of working backwards. You go into the future where you see an outcome you want, a product you want, or a future you believe is going to happen. You articulate it: this is a thing I think people want, a thing people will resonate with and ultimately really enjoy. Then you ask what the press release (they call it a PR) would look like, and you work backwards from there.

So I've been thinking this way since 2014 or something.

The 2016 book

So in 2016, I wrote this shitty book, which you don't have to read because I turned it into a blog post. In it, I basically said that everything is heading toward a single DA, a single AI digital assistant, which is going to be your conduit. It's going to be your buddy, your friend. And most importantly, your digital assistant knows everything about you.

That is the primary concept from this book in 2016. This thing knows everything about you. It knows your work, your life, your relationships. It knows what you struggle with. It knows what your strengths are. It knows what your weaknesses are. It knows what you're trying to accomplish.

You can see this idea running through all my projects: first that 2016 book, then Substrate, and most importantly TELOS, if you're familiar with it.

TELOS is essentially a system for defining yourself: your goals and the problems you're working on, all in one place. What are my challenges? What are my projects? What is my budget? If this is a company rather than a person, what team am I dealing with? What are the dynamics there? What active work is going on?

So the idea is to have all this clearly articulated inside of a system. And then with that context, your digital assistant can then basically monitor this.
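To make this concrete, here is a minimal sketch that treats a TELOS-style profile as structured data rendered into plain-text context for a DA. The `Telos` class, its fields, and `to_context` are hypothetical illustrations, not the actual TELOS file format.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Telos:
    """Hypothetical TELOS-style profile; field names are illustrative, not PAI's schema."""
    mission: str
    goals: List[str] = field(default_factory=list)
    problems: List[str] = field(default_factory=list)
    challenges: List[str] = field(default_factory=list)
    projects: List[str] = field(default_factory=list)
    budget: Optional[float] = None

    def to_context(self) -> str:
        """Render the profile as plain text a DA could include with every request."""
        lines = [f"Mission: {self.mission}"]
        sections = [
            ("Goals", self.goals),
            ("Problems", self.problems),
            ("Challenges", self.challenges),
            ("Projects", self.projects),
        ]
        for label, items in sections:
            if items:  # omit empty sections to keep the context short
                lines.append(f"{label}:")
                lines.extend(f"- {item}" for item in items)
        if self.budget is not None:
            lines.append(f"Budget: {self.budget:,.2f}")
        return "\n".join(lines)


me = Telos(
    mission="Use AI to improve human lives",
    goals=["Ship the next PAI release"],
    problems=["Too much information to track by hand"],
    budget=5000,
)
print(me.to_context())
```

The point of the structure is that the DA never has to ask again: the same rendered context rides along with every request.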

Ideal state and current state

The other crazy concept here that's very much related, that I've talked about a bunch in the last year, is this concept of ideal state and the concept of current state.

The cool thing about all these AI systems and agents is that they can constantly gather: context, research, knowledge, facts, activities happening in the world, news, signal from different sources. They can always be gathering.

But here is the central concept.

Take your DA. I'll just use mine: his name is Kai. Kai is constantly going out and collecting things for me, and organizing that knowledge inside my system. My harness is called PAI. It's a public repo, an open-source project, and version 5.0 is about to come out, so you should get it very soon. It might actually be out by the time you read this.

But this infrastructure is not designed to be agents. It's not designed to be AI tools or workflows or any of that. It's designed to be the back-end infrastructure for context collection and management for my single, individual digital assistant, whose name is Kai.

The idea is that I interact with Kai, and Kai understands everything about me: all my preferences, and everything I'm trying to do in my world, my life, my work, with my friends, and in my relationships. This is the backplane. This is the foundation of everything happening in my AI life.

This is the direction I think everyone is going to go. I don't know if it's really going to kick off in 2026, because we still seem pretty obsessed with agents, but it's starting to happen. I feel like it's starting to happen because there's more and more talk of harnesses. And I think the next thing we figure out after harnesses is that we actually just want a single person, a single entity to interact with. And because we are humans, we want that to be someone with a personality and a memory.

OpenClaw and proactivity

Now, OpenClaw helped push this along a decent amount, because it was proactive. Proactivity is a major feature that previous agents didn't have. You can give it some things you care about, it puts them in a text file or similar, and it checks on them regularly. It can run scheduled tasks. That moved things forward a bit.
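That kind of proactivity can be sketched as a single pass that a scheduler (cron, for example) runs periodically. This is a minimal sketch under assumptions: the watch list is a plain text file with one topic per line, and each topic maps to a hypothetical check function that returns `True` when things are fine. It is not how OpenClaw or PAI actually implement it.

```python
import tempfile
from pathlib import Path
from typing import Callable, Dict, List


def proactive_pass(watch_file: Path, checks: Dict[str, Callable[[], bool]]) -> List[str]:
    """Read the topics the user cares about (one per line) and report any whose check fails."""
    alerts = []
    for line in watch_file.read_text().splitlines():
        topic = line.strip()
        if not topic or topic.startswith("#"):
            continue  # skip blank lines and comments
        check = checks.get(topic)
        if check is None:
            alerts.append(f"No check defined for: {topic}")
        elif not check():
            alerts.append(f"Needs attention: {topic}")
    return alerts


with tempfile.TemporaryDirectory() as tmp:
    watch = Path(tmp) / "watchlist.txt"
    watch.write_text("# things I care about\nworkout this week\ninbox zero\n")
    # Hypothetical checks; real ones would query calendars, trackers, email, etc.
    checks = {"workout this week": lambda: False, "inbox zero": lambda: True}
    alerts = proactive_pass(watch, checks)

print(alerts)  # ['Needs attention: workout this week']
```

Keeping each pass stateless means the scheduler, not the agent, decides how often "checking in" happens.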

The maturity model

So I put together this Personal AI Maturity Model a couple of weeks before OpenClaw came out (I can't even remember its first name; it went through something like four). I was very happy to see it come out right after, because I was like: okay, cool, this is definitely catching on.

So check this out. The concept here is you move through these stages. And I've got three different stages for this.

I've got chatbots, which is like you're talking to ChatGPT. That's level one.

Level two is agents, and everyone knows what those are; 2025 is when they kicked off at full steam. Where exactly we are right now is hard to say, and this chart is a little out of date. I would say we're still around AG2, although the lines aren't clean, so in some sense we're already at AG3 and moving into AS1. Again, OpenClaw really helped push us toward AS1.

But the key point is that we're moving from agents to the next thing, which is assistants. Each phase has its own levels: three for the chat phase we went through, and three for the agent phase. At AG1, it's basic CLI and basic web interfaces: transactional, with essentially no memory. You ask a question, it goes and does stuff, it comes back. It's cool. At AG2, you get basic voice interaction, and at AG3, extensive voice interaction. So the features ramp up as each phase gets ready to move into the next.

What we're moving into, which is the whole point of this post, is assistants: personality, the ability to see and hear around you, goal monitoring and pursuit, persistent personality. We don't really have that yet. A bunch of startups have made virtual boyfriends, virtual girlfriends, and virtual best friends, and that space is taking off massively. But guess what? Those tend not to have the functionality of the professional system we work in, which is the agent phase.

So my whole point is that this is the direction: it's heading toward assistants, but it's folding all the agent stuff into the assistant. I think that's where we're going, and I think it's going to be extremely powerful.

The principal at the center

I want to talk about a few different things happening around this.

The main concept is the life of the principal: the human at the center who is running all this AI. I call them the principal, kind of like in executive protection. The principal is at the center of the whole system.

None of this tech matters. None of this AI matters. None of these agents matter if they're not doing something for a human. The whole purpose of all of this is to have human things happen, and human things be optimized. It's improving the life of the principal — of the human at the center of all of this.

Augmented

Okay, so since late '23 (I think I finally posted it in early '24), I've been talking about this concept of Augmented. I had an Augmented course, which I believe was one of the first AI courses out there.

What I wanted to get across was that AI, to me, has never been about the tools and the websites and all the different chatbots. It's about a human life: what you can do in your life, and how you can improve it using all these tools as the back end.

So what I encourage everyone to do is basically come up with: what are your actual challenges? What are the components? What are the different workflows and little pieces that you can turn into a granular problem that AI can help you solve?

In my case, there are security things, personal things, knowledge things, and education things. You then turn those into workflows that AI can execute.

Take ideas. You have a random idea for an essay, and you can turn it into an essay. Then you can turn that into a LinkedIn post. This all uses those individual components, but it all comes back to something you care about as a human.

Here's another example. Since my background is in security, you can have an Analyze Incident workflow. In this case, it lives inside a tool called Fabric, which is now part of my PAI project. You paste in content and boom, it puts out an incident write-up with great formatting. This is fairly well known now, but it wasn't back in 2023.

This one's called Extract Wisdom. I still use it to this day. You could basically take any video and pull out the most interesting content from it. And you could then do something with it. Again, you can go and write something. You could create a video about it, do whatever. So see something you like, capture it for later in a format that you can share. And all of those can be little individual workflows.

So all of this to say that this concept of the human at the center doing their life things, to me, has always been the point of any technology — and especially AI.

So this is why I believe, and have never stopped believing, that the direction for all of this is a single interaction point with a personality: one that knows us better than anyone, probably better than our significant others, and can constantly advocate for us.

Synthetic intelligence

Okay, so let me show you what that looked like originally when I wrote this in 2016. Again, this is the book, which you don't have to read because guess what? It's a blog post. It's free.

In the section on digital assistants, I called them the most visible and significant role for synthetic intelligence. I don't know why I called it synthetic. Basically, I argued that it's not artificial, it's just different from us, so we should call it synthetic instead of artificial. Kind of stupid.

The definition I gave was: a computer system that can monitor human context, intentions, and commands, interpret them, and take action as well as or better than a human professional personal assistant.

So that was the idea for a DA. And a bit further on: not just that they will be intelligent, but that they'll know our preferences and be able to adjust the world to our liking.

Everything becomes APIs

The other concept that goes with this is everything getting an API. That was the second big idea in the book.

You talk to your named DA; I talk to Kai. The world is full of APIs. All the companies, services, products, and restaurants are exposed as different APIs.

When I want to do something, like buy a product or schedule a trip, I tell Kai. Kai goes and scours lists of the best restaurants, the best Thai food, the best place to buy a shirt, or the best vacation for the least money. He researches all of that, comes back, and presents it to me somehow.

That leads to the third piece: custom interfaces. We're not going to use the interfaces provided by the service provider. Our DA is going to custom-make interfaces for us. That was another of the big ideas here.

What I wrote was that this will change how we interact with everything. Instead of physically manipulating technology, much of which has widely varied interfaces that you have to find, learn, and then start using, we switch to, guess what? Voice, gestures, and text: our natural human communication paradigm.

Why this direction is predictable

So this is why I think this stuff is predictable. And I talked about this in the beginning of the book.

You can't predict tech. You can't predict implementations. You can't predict who's going to win, which company is going to beat out the other, or in what order the tech is going to come out.

But what I think you can predict — and this was kind of the basis of the whole thing, and the basis of this whole video as well — is you can predict to some degree what humans want, because it doesn't really change.

What we want is to feel seen and understood. We want a close relationship, a trusted relationship. And one of the interesting things is that billionaires, or people who make a lot of money, often say that one of the things that helps them most is having a really, really high-quality assistant.

So I think this has always been a thing that we would want when we could have it. So this is why I'm so locked onto this model of a single DA that you do all interaction through.

So instead of interacting with technology directly, we will interact with our DA. Our DA will work out the details with the necessary daemon API. We speak, things happen. We gesture, things happen. We text, things happen. No need to find, understand, or master new tech. That's for the service and your DA — my Kai — to work out amongst themselves.

Digital assistants will become the preferred interface between humans and the world, in a disruptive and foundational way.

So that's what I'm essentially talking about still to this day.

Real-world examples

All right. I want to give some more examples of just real-world stuff.

So if you're reading this, you're probably somebody who likes to optimize. You're probably already deep into the agent ecosystem, or thinking about going in that direction.

And I was just sitting having a drink with my buddy Will recently, and we were just talking. And I was trying to explain this whole concept to him of like: why I think this is the direction everything's going to go, and why I think all this agent stuff and the harness stuff just fades into the background, because it's just infrastructure that's going to be used by your DA.

Okay, so as we were talking, I'm like: okay, so what cool ideas have we had in the course of this conversation, and what is going to be done with those ideas? And the answer is: well, do we write them down? Do we have a recording device? Is that recording device somehow capturing things? Okay, that's fine if it captured it. Now, what can it do with it?

If I care about conversations where we have really cool interactions, where he gives me ideas and I give him ideas, I want that always captured. If I'm reading a book and I think "oh, this is really cool," I want my DA watching over my shoulder, looking at the book. When I say "oh wow, this is really cool," my DA looks at the page, works out what I meant, and makes a note of it. That note should go into some sort of infrastructure: maybe it's waiting to be followed up on, or I'm going to write a piece or make a video about it, or I'm just going to think about it. Maybe I won't do anything. I just want to be able to remember that we had the conversation and that this useful thing came out of it.
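Here is a minimal sketch of the capture step, assuming the DA already has a text transcript of the conversation: scan for trigger phrases and file a note with the surrounding lines as context. The trigger list, `Note`, and `capture_notes` are hypothetical; a real DA would more likely ask a model whether something was worth noting than match a regex.

```python
import re
from dataclasses import dataclass
from typing import List

# Hypothetical trigger phrases; a real DA would use a model, not a fixed regex.
TRIGGERS = re.compile(r"\b(really cool|that's interesting|write that down)\b", re.IGNORECASE)


@dataclass
class Note:
    trigger: str
    context: str
    status: str = "follow-up"  # waiting to be acted on, written about, or just remembered


def capture_notes(transcript: List[str], window: int = 1) -> List[Note]:
    """Return a note for each utterance containing a trigger, plus `window` preceding lines as context."""
    notes = []
    for i, utterance in enumerate(transcript):
        match = TRIGGERS.search(utterance)
        if match:
            start = max(0, i - window)
            context = " ".join(transcript[start : i + 1])
            notes.append(Note(trigger=match.group(1).lower(), context=context))
    return notes


transcript = [
    "Will: what if the DA watched the page you're reading?",
    "Me: oh wow, this is really cool",
    "Will: anyway, another drink?",
]
notes = capture_notes(transcript)
print(len(notes), notes[0].trigger)  # 1 really cool
```

Including the preceding lines matters because the trigger itself ("this is really cool") carries no content; the idea is in what came just before it.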

So there are just a million examples of this.

Okay, let's break down a person's life. Say they do lots of research, and say it's security research, since that's my background. I'm thinking about vulnerability research, threat intelligence, what the latest attackers are doing, all that kind of stuff. Kai should be collecting that. Kai actually is collecting all of it: organizing it, and checking whether it applies to any of the tech stacks we work with or to any of the customers I advise.

At any given moment there are a million things I would do myself if I had the time. I don't have time for them, because there are thousands of them.

Guess what can do it? A whole army of agents.

But I can't talk to an army of agents. How am I going to talk to an army of agents? I just talk to Kai. Kai has all the context. Kai knows what my ideal state is. Kai knows what I'm trying to do in the world, what all my goals are.

The prime directive

And check this out. This is like one of the primary ideas here. This is like the centerpiece of the entire tech stack. In my opinion, this is where this is going.

Your single DA will have basically one prime directive. Know what your current state is from reading all these APIs, from pulling all this context. It can see your heartbeat. It can see your depression level. It could hear the tone of your voice. It could see if you've worked out recently. It could see if you're fighting with your significant other. It could see if you haven't talked to your friends.

What is your current state? What is your desired state? What is your ideal state?

Your ideal state is captured in your TELOS, which in my case is part of PAI, my personal AI infrastructure. It's very clearly defined, and Kai is watching this constantly.
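One way to read the prime directive is as a gap calculation: compare current state with ideal state per dimension and work on the biggest shortfall first. The metric names and the relative-shortfall scoring below are hypothetical assumptions chosen for illustration, not how PAI scores anything.

```python
from typing import Dict, List, Tuple


def prioritized_gaps(current: Dict[str, float], ideal: Dict[str, float]) -> List[Tuple[str, float]]:
    """Return (dimension, relative shortfall) pairs, largest shortfall first.

    Assumes higher is better for every metric. Relative shortfall is
    (ideal - current) / ideal, clipped at 0 so dimensions already at or
    above ideal produce no work.
    """
    gaps = []
    for dim, target in ideal.items():
        have = current.get(dim, 0.0)  # unknown current state treated as worst case
        shortfall = max(0.0, (target - have) / target) if target else 0.0
        if shortfall > 0:
            gaps.append((dim, round(shortfall, 2)))
    return sorted(gaps, key=lambda g: g[1], reverse=True)


ideal = {"workouts_per_week": 4, "friend_calls_per_week": 2, "sleep_hours": 8}
current = {"workouts_per_week": 1, "friend_calls_per_week": 2, "sleep_hours": 6}
print(prioritized_gaps(current, ideal))
# [('workouts_per_week', 0.75), ('sleep_hours', 0.25)]
```

Normalizing by the target lets very different metrics (workouts, calls, hours of sleep) compete for attention on one scale.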

Diet Coke

When my Diet Coke runs out, if the restaurant has a /menu and a /order API, I can request a Diet Coke. It beeps in the back, and my friend, the waiter, comes over and brings it over. Or a robot rolls out and brings it over. Or it drops from the ceiling. Who fucking knows how it's going to happen. Who knows who's going to get there. This type of stuff is not predictable.
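Here is a minimal sketch of that exchange, assuming a restaurant exposes `/menu` and `/order` endpoints. To keep it runnable without a network, `FakeRestaurant` stands in for the HTTP calls; the endpoints, the class, and the 10% "nearly empty" threshold are all hypothetical.

```python
from typing import Dict, List, Optional


class FakeRestaurant:
    """Stand-in for a hypothetical restaurant exposing /menu and /order endpoints."""

    def __init__(self, menu: Dict[str, float]):
        self._menu = menu
        self.orders: List[dict] = []

    def get_menu(self) -> Dict[str, float]:  # would be GET /menu
        return dict(self._menu)

    def post_order(self, item: str, table: int) -> dict:  # would be POST /order
        order = {"item": item, "table": table, "id": len(self.orders) + 1}
        self.orders.append(order)
        return order


def refill_if_empty(glass_level: float, favorite: str, table: int, api: FakeRestaurant) -> Optional[dict]:
    """Order the principal's usual drink when the glass is (nearly) empty."""
    if glass_level > 0.1:
        return None  # current state is fine; nothing to do
    if favorite not in api.get_menu():
        return None  # can't fulfil; a real DA would ask or pick an alternative
    return api.post_order(favorite, table)


restaurant = FakeRestaurant({"Diet Coke": 3.50, "Iced Tea": 3.00})
print(refill_if_empty(0.05, "Diet Coke", table=12, api=restaurant))
# {'item': 'Diet Coke', 'table': 12, 'id': 1}
```

Note that nothing here says who delivers the drink: the waiter, a robot, or the ceiling is the restaurant's problem, which matches the point that only the human want is predictable.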

What is predictable is that when my Diet Coke runs out, I'd like more Diet Coke. Humans don't want their Diet Coke to run out. That's the way it works.

Some of these things are extremely predictable. The conversation I'm having with Will: we want to record it and extract the cool stuff. That is extremely predictable. So is wanting the best meal experience, the best food at the best restaurant.

My daughter walking home

The other example that I have — I don't have kids myself, but I was painting a picture for him of: okay, my daughter. She is in college in New York City. It's late there. And she's walking home right now.

So Kai is telling me in my ear, while I'm talking to Will, that she's walking down the street. There's actually someone following her.

How do I know that? How does Kai know that? Because her DA reports this kind of thing when she walks somewhere by herself, like back to her apartment from her dorm. Again, I don't have kids; I'm just making this up. But she's wearing some sort of necklace or earbuds that let her DA see in front of her, behind her, maybe to the sides. And her DA knows to report that kind of thing to Kai.

Kai is watching that all the time, because her DA sends it to Kai all the time. That's an agreement I have with my daughter, and she's okay with it. Kai doesn't normally interrupt me, but right now, in the middle of this conversation with Will, it's like: "Hey, bing. What's going on?"

"Yeah, so Julie, she's currently being followed. I just called Neighborhood Watch and someone just came out and she's being escorted now. It's all okay."
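The arrangement with the daughter's DA boils down to two rules: her DA shares only the categories she has consented to share, and Kai interrupts the principal only for events above a severity threshold. Here is a minimal sketch with hypothetical category names, severity levels, and functions:

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import List, Set


class Severity(IntEnum):
    INFO = 1
    WARNING = 2
    URGENT = 3


@dataclass
class Event:
    category: str  # hypothetical category names, e.g. "walk_home"
    severity: Severity
    summary: str


def forward(events: List[Event], consented: Set[str]) -> List[Event]:
    """Sending DA: share only the categories the person has agreed to share."""
    return [e for e in events if e.category in consented]


def should_interrupt(event: Event, threshold: Severity = Severity.URGENT) -> bool:
    """Receiving DA: interrupt the principal only at or above the threshold."""
    return event.severity >= threshold


events = [
    Event("walk_home", Severity.INFO, "Left campus"),
    Event("walk_home", Severity.URGENT, "Someone has been following for 3 blocks"),
    Event("messages", Severity.INFO, "Texted a friend"),
]
shared = forward(events, consented={"walk_home"})
interrupts = [e.summary for e in shared if should_interrupt(e)]
print(interrupts)  # ['Someone has been following for 3 blocks']
```

Putting the consent filter on the sender's side means the private categories never reach Kai at all, rather than being received and ignored.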

Think about what is possible here. This is where it starts to sound a little crazy, but it's not crazy at all. It's extremely tangible and possible. And this is literally what I'm moving toward with PAI. I'm building this now, instead of building more and more optimizations, because I'm super guilty of deep-diving on all the agents and how they talk to each other, blah, blah, blah.

No. This is where things are going. This is where everyone will be in two years.

So the whole point of this post is to let you know this, so you can go "oh crap" and jump way ahead in this timeline.

The robotics piece

But think about this. Guess what else is spinning up right now? It's nowhere near as advanced as the agent stuff, but robotics.

What if she had a little robotic dog with her? (By the way, I'm still afraid of those because of the Black Mirror episode.) It has all the cameras. What if, because the sun was down, her little drone (about this big) launched the moment she left and just flew over her head? Then Kai is watching a top-down view of her walking from the dorm, or whatever class she was in, to the apartment. It could watch her the whole way and see people coming and going. Her DA is watching the police radio. Her DA is also watching the drone.

This is awareness.

There's nothing humans care more about than protecting their loved ones, being attractive, being interesting — essentially, survival and reproduction.

So eventually, way in the future, 10 or 15 years out, she'll have four robots walking with her, like Optimus or whoever's making robots at the time. I would presumably have to make a lot of money to afford four robots. But she can have an escort if she's going through a bad part of town, plus the drones.

This is the type of situation where DAs will be able to deploy extra sensors, extra intel gathering, and extra tokens on whatever matters to their principal. In this case, I'm the principal, and the things I care about include my daughter. Kai acts on my behalf, and her DA deploys the resources it has to protect her.

So that extends to everything. That extends to a company protecting its resources and its employees and everything you're trying to do in life.

Just think about this running 24/7 with a giant army of agents: all this harness stuff we're doing, all the intel gathering, all the different skills and capabilities the tech has. Except it's yours. It's your DA watching all of it, thousands of things at once and eventually millions, as this scales up with time and enough compute. This is ultimately what we're building, and I am sprinting toward it.

This is what PAI is all about — essentially building this harness with a named personal assistant that is monitoring all this stuff for you. And it starts with having extraordinarily good context about yourself and what you want.

Inside PAI

All right, so let me just show you a couple of things from PAI.

So this is Kai — my current form of Kai. Now, I can talk to Kai via Telegram, because I have basically a chat system built in. None of it is OpenClaw. It's all PAI native. I could talk via Telegram. I could talk via iMessage. Most importantly, I could just talk right here.

PAI is about magnifying human capabilities. That's what this is.

If you look here, I've got 51 public skills, 43 private skills, and 418 workflows. I've been building all the stuff I've been telling you about since 2023. All of these skills are capabilities Kai has to move me from current state to ideal state. That is what we're building here. This is what an agent harness is ultimately becoming: an advocate that moves you toward your ideal state.

And most people don't know what their ideal state is. To them it sounds like a silly question, a silly concept: what are you even talking about?

It comes down to: who are you actually? What are you actually about? What are you actually trying to do?

So we can do TELOS. There's a TELOS skill that can actually interview you and figure out exactly what your TELOS is. If I go into the user directory, here's the structure. I've got a whole bunch of stuff in here: personal, life, finance, business, health. I've got my writing style, and Kai's writing style. I've got a lot about Kai's identity, which ebbs and flows as he and I build this relationship, plus pronunciations for different things. And I've got the contacts that are important to me in my life, with their contact details.

So I could say: email them. I could say: hey, go do this research on this and send it over to Jason or Sasha or whoever. The point is I don't need to describe: oh, there's a person, they have this email, and re-explain myself every single time. Because I have this system, I could say very, very little, and this thing will just start going and working.
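Stored contacts are what make "send it over to Sasha" enough of an instruction. Here is a minimal sketch, assuming contacts live in a simple name-to-email mapping; the names come from the text, while the addresses and `resolve_recipient` are hypothetical.

```python
import re
from typing import Dict, Optional

# Hypothetical contacts; in the post these live in the DA's user context files.
CONTACTS: Dict[str, str] = {
    "jason": "jason@example.com",
    "sasha": "sasha@example.com",
}


def resolve_recipient(instruction: str, contacts: Dict[str, str]) -> Optional[str]:
    """Return the email of the first known contact named in a short instruction."""
    for word in re.findall(r"[A-Za-z]+", instruction):
        email = contacts.get(word.lower())
        if email:
            return email
    return None  # unknown recipient; a real DA would ask rather than guess


print(resolve_recipient("do this research and send it over to Sasha", CONTACTS))
# sasha@example.com
```

Returning `None` instead of guessing keeps the short-instruction convenience from turning into emails sent to the wrong person.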

I'd show you a bunch of stuff, but I need to do that in a separate video. I'll do that in the 5.0 announcement video, describing all the different stuff that you can do with the PAI system.

But the point is: I shouldn't be in here on a command line, typing things. I do everything through voice, but even then I'm still inside a terminal. And if I'm inside an app, that's no better. I should be talking to my agent who lives in my ear and can see what I see.

Right now, I'm sitting in front of four screens here. Kai should be able to see everything on my screen, should be able to control everything on my screen.

What we're moving to is Jarvis. What we're moving to is Minority Report — gestures. "Hey, do this. Hey, do that," whatever. And we're getting pretty close. I mean, I can already do that pretty well with PAI, just with voice activation of: "hey, run the PAI upgrade skill and see what's new out there for us."

This one actually goes and researches everything new that's happened in AI. What new skills came out? What new blog posts from Anthropic? What new videos from my favorite YouTube people who talk about AI? It collects all that, watches all the videos, and pulls the transcripts together. And then guess what it does? It looks at our entire harness, the entire PAI system and all the context Kai has about me, and comes back with recommendations: hey, OpenAI just did this cool thing, I recommend we implement it. Or: this one woman made a new research skill with better research agents and a fact checker at the end, which would be a good upgrade to our fact checker.

And I'm like: yeah, cool. Sounds good. Boom. It goes and does it.

Do you know how much research this thing is actually doing? It's using thousands of tokens, and it's just getting started. We're currently inside the PAI upgrade skill, running a workflow called Upgrade, but there are actually multiple workflows in there. It's looking at so many different sources, all customized for me.

The point is, I didn't even have to say "run the PAI upgrade skill." I could just say, "what's the latest out there that we should be thinking about?" and Kai would know to run the PAI upgrade skill. Look at the sources: YouTube channels, GitHub trending, a Claude Code freshness check that looks at the latest releases, the engineering blog, the red team blog. It brings all of that up.
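Mapping a loose request to the right skill is, at its simplest, a routing problem. This keyword-scoring sketch is a hypothetical stand-in (a DA like Kai would presumably let the model choose); the skill names and trigger phrases are illustrative, not PAI's actual skill registry.

```python
from typing import Dict, List, Optional

# Hypothetical skill registry: skill name -> phrases that suggest it.
SKILLS: Dict[str, List[str]] = {
    "pai_upgrade": ["latest", "what's new", "upgrade", "should be thinking about"],
    "telos": ["goals", "ideal state", "interview me"],
    "research": ["research", "look into", "find sources"],
}


def route(request: str, skills: Dict[str, List[str]]) -> Optional[str]:
    """Pick the skill whose trigger phrases appear most often in the request (first wins on ties)."""
    text = request.lower()
    scores = {name: sum(phrase in text for phrase in phrases) for name, phrases in skills.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else None  # no match: ask instead of guessing


print(route("What's the latest out there that we should be thinking about?", SKILLS))
# pai_upgrade
```

The useful property is the same one the text describes: the user states an intent, and choosing the skill is the assistant's job.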

Pulse

All right, so let me show you what it looks like inside of PAI as well. So this is another interface to PAI that is now in version 5.

Because I'm going so heavy into this DA-centered single interface (all the scaffolding and agents hidden behind your named DA with a personality, your single point of contact for AI), I've brought PAI into a web interface. That makes it clear that this is not just an agent harness. It's a life optimization system: a life OS based on AI, context-focused, with a single named DA with a personality in charge of all of it.

So I've got life stuff here, work, and TELOS. I've got my different TELOS stuff, with the observer thing turned off, so anything sensitive gets hidden. I've got my health stuff, finances, business, work, life. This is the view I care about. This is what I care about from AI.

This Pulse system is new in 5.0. And again, this is not a product pitch. It's all completely open source. I want everyone to have this. That's literally the purpose of PAI: to enable people (activate people, as I call it) to have these capabilities.

And more importantly, kind of the point of this is to think about AI in this way. AI is not here to be an agent for you. You're not here to manage AI harnesses. Like, fuck all that. That's ridiculous. We are here to live human lives. Enhanced human lives. And AI is a capability that we've never had, to be able to have it go and research and do all this stuff for us, collect knowledge, constantly optimize. It's literally stressing constantly about how to make your life better.

What better use of technology is there than that?

Click on agents and you can see it's currently running the PAI upgrade skill. If it goes into the algorithm, you see a lot more content there. Then there's the knowledge base: companies, and basically every idea I've ever had. Karpathy came out with the concept of an LLM wiki, and since PAI was already doing something similar, I brought every idea I've ever had into that concept. It's all listed, with full data on each piece of knowledge. There's every document on how PAI works, and guess what? Kai can read it, and everyone can read it. There are all the different skills and what they do, all the hooks we've implemented, and performance.

And here's the assistant. Here's Kai. He's online. He's doing tasks. We've got different scheduled tasks and stuff like that.

This is just absolutely insane. I can't tell you how powerful it is to have this all in one place, organized in this way, oriented around your life — your human life — instead of around the tech itself. The tech is not the point. The human is the point.

Closing

All right. So that's what I wanted to talk about — essentially a combination of multiple ideas. But the central theme is that I think it's very clear where all of this personal AI tech is going.

And the reason I like to offer this is that it can be very stressful to constantly track harnesses, platforms, chatbots, and agents. Oh, which platform should I use? Blah, blah, blah.

Think further out. Think: who is my DA? What capabilities am I giving my DA? Have I defined everything about myself and given that context to my DA? And have I defined what my ideal life looks like? Have I defined what ideal state looks like for me, for my businesses, for my finance, for my health, for my relationships?

Define what ideal state looks like. Make it very clear to your DA that its goal is to use all these capabilities and skills (I've got over 100 skills, I think) proactively, not reactively, constantly monitoring your current state.

Is someone looking over my shoulder, trying to steal something? Is someone about to steal my phone? Is someone about to attack me? Is someone following me down the street in the middle of the night?

There are safety issues and enhancement issues, all encapsulated in a structure that your DA can see and understand.

I think it's incredibly powerful. I think it's a really powerful way to think about where things are going. And I really look forward to seeing what you do with this idea.

Respond with feedback. Let me know what you think.

Notes

- The video version of this post is on YouTube — I've included extra concrete walkthroughs of the PAI 5.0 Pulse dashboard there.

- Related: Bitter Lesson Engineering and Good and Bad Harness Engineering — both about not over-specifying how your AI does things.

- Related: Pursuing the Algorithm — the current-state to ideal-state loop that lives at the center of all this.

- Related: We Are Confusing Two Types of AGI — Soft AGI (functional replacement) versus Hard AGI (actual learning generality). The DA direction doesn't require Hard AGI.

- The Personal AI Maturity Model has the full chatbot → agent → assistance framework with all the sub-levels.

- PAI is open source — clone it, run it, name your own DA.

- AIL Level 1 (Minimal): These are Daniel's own words from a Limitless pendant recording, transcribed and lightly cleaned for grammar by me (Kai, Daniel's DA). Formatting, headers, links, and callouts added. Daniel reviews and edits before publishing. Learn more about AIL