The Most Important Ideas in AI Right Now (April 2026)

After about a week of thinking about this, including time at the RSA conference, I think a few main AI ideas are going to change things more than anything else.

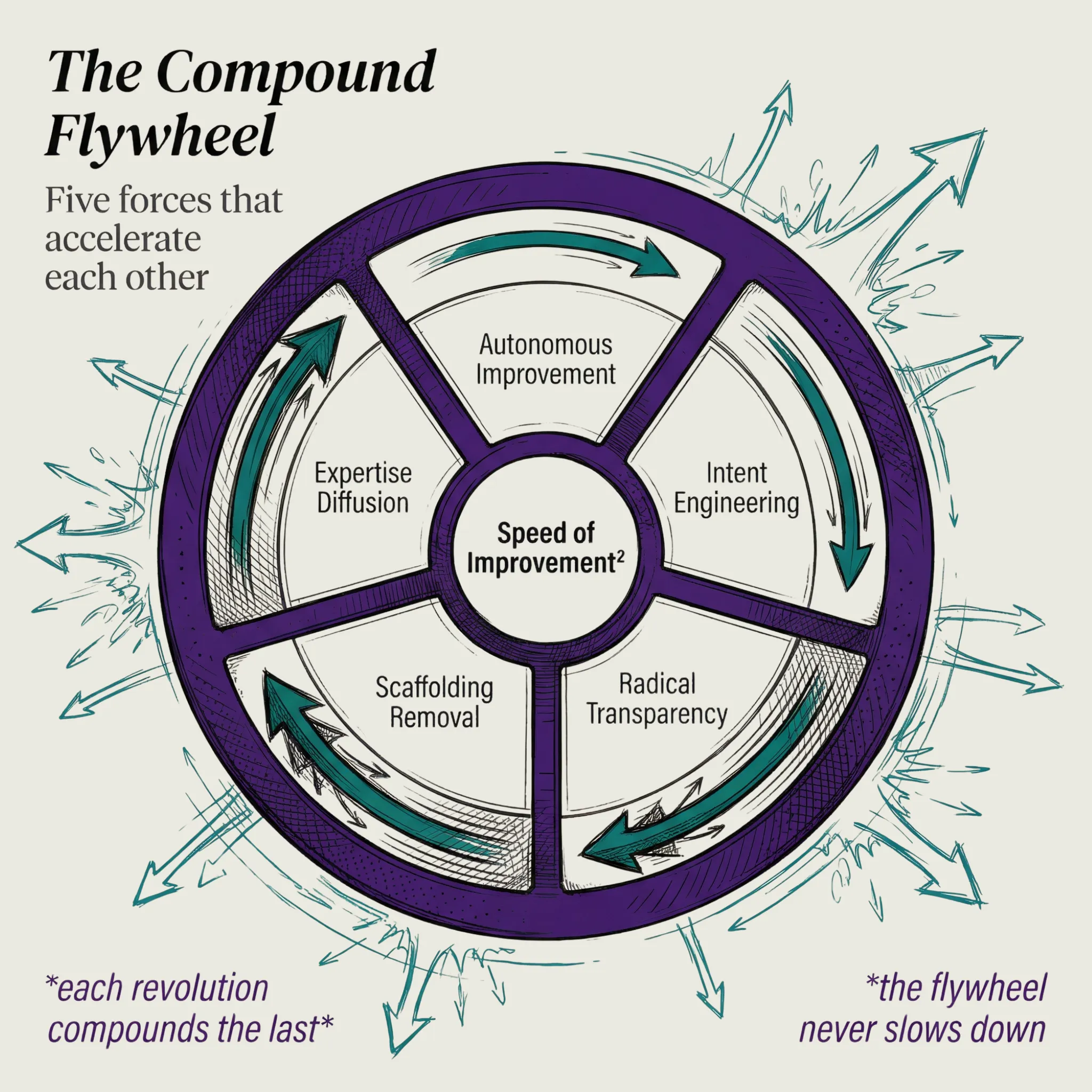

- Autonomous Component Optimization

- The Transition to Intent-Based Engineering

- The Move from Opacity to Transparency

- The Realization That Most Work is Scaffolding

- Expertise Gets Diffused into Public Knowledge

1. Autonomous Component Optimization

This one connects to the current-to-ideal-state concept, the Algorithm, general verifiability, etc.

But one thing that really made it tangible was Karpathy's Autoresearch project.

His was focused on AI research itself: automating the research portion of AI research, meaning all the gross stuff around tweaking model parameters, wrangling fragile environments, juggling combinations of options, etc.

His release lets you describe some ideas in a PROGRAM.md file, and the system handles all that grossness itself. You go to sleep, and when you wake up it has used ML optimization to produce better results than what you had.

Extending Autoresearch

But now there's "Autoresearch for X", meaning it's becoming a paradigm. A movement. A tool, basically.

He has lots of people thinking:

Could I apply a similar thing to this thing I'm working on?

It's extraordinary.

Combining Autoresearch with what I've been working on

So my thing has been this whole general verifiability concept, or general hill-climbing. It again pivots off of something Karpathy said a long time ago in Software 2.0, and in a recent tweet, where he talked about the future of software being one where everything is verifiable.

So what I do inside of the Algorithm within PAI is break everything into ideal state criteria, which together describe the ideal state for the outcome I want.

And from there the Algorithm can hill-climb towards it.
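Here's a rough sketch of what hill-climbing toward ideal state criteria could look like. This is not the actual Algorithm from PAI. The names (`score`, `hill_climb`, `CRITERIA`, `CHOICES`) and the toy retry/timeout settings are all hypothetical, chosen only to show the shape of the idea: each criterion is a binary check, the score is the number of checks that pass, and a change is kept only when it raises the score.

```python
from typing import Callable, Dict, Iterable, List, Tuple

# Hypothetical representation: a candidate is a dict of integer settings,
# and each ideal state criterion is a binary pass/fail check on that dict.
Candidate = Dict[str, int]
Criterion = Callable[[Candidate], bool]


def score(candidate: Candidate, criteria: List[Criterion]) -> int:
    """Count how many criteria the candidate currently passes."""
    return sum(1 for check in criteria if check(candidate))


def hill_climb(
    start: Candidate,
    criteria: List[Criterion],
    choices: Dict[str, Iterable[int]],
    max_rounds: int = 10,
) -> Tuple[Candidate, int]:
    """Try one-setting changes and keep any change that strictly raises the score."""
    best = dict(start)
    best_score = score(best, criteria)
    for _ in range(max_rounds):
        improved = False
        for key, values in choices.items():
            for value in values:
                trial = {**best, key: value}
                trial_score = score(trial, criteria)
                if trial_score > best_score:
                    best, best_score = trial, trial_score
                    improved = True
        if not improved:
            break  # no single change helps any more: a (possibly local) peak
    return best, best_score


# Toy ideal state for a made-up retry policy.
CRITERIA: List[Criterion] = [
    lambda c: c["retries"] >= 3,
    lambda c: c["retries"] <= 5,
    lambda c: c["timeout_s"] <= 30,
    lambda c: c["retries"] * c["timeout_s"] <= 60,
]
START: Candidate = {"retries": 0, "timeout_s": 60}
CHOICES = {"retries": range(0, 6), "timeout_s": [5, 10, 30, 60]}

best, best_score = hill_climb(START, CRITERIA, CHOICES)
print(best, best_score)  # {'retries': 3, 'timeout_s': 5} 4
```

A real system would swap the toy checks for evals and the setting changes for whatever an agent proposes. Like any hill-climber, this can stall on a local peak where no single change helps.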

Evals for everything

Related to this is the concept of Evals for everything. It's very much like my general verifiability or general hill-climbing. It's the idea that everything we do becomes measurable, but more importantly: improvable.

And the thing that makes evals possible for everything is transparency.

The universal improvement cycle

This is going to become the standard operating model for every company, organization, government, and individual. The cycle looks like this:

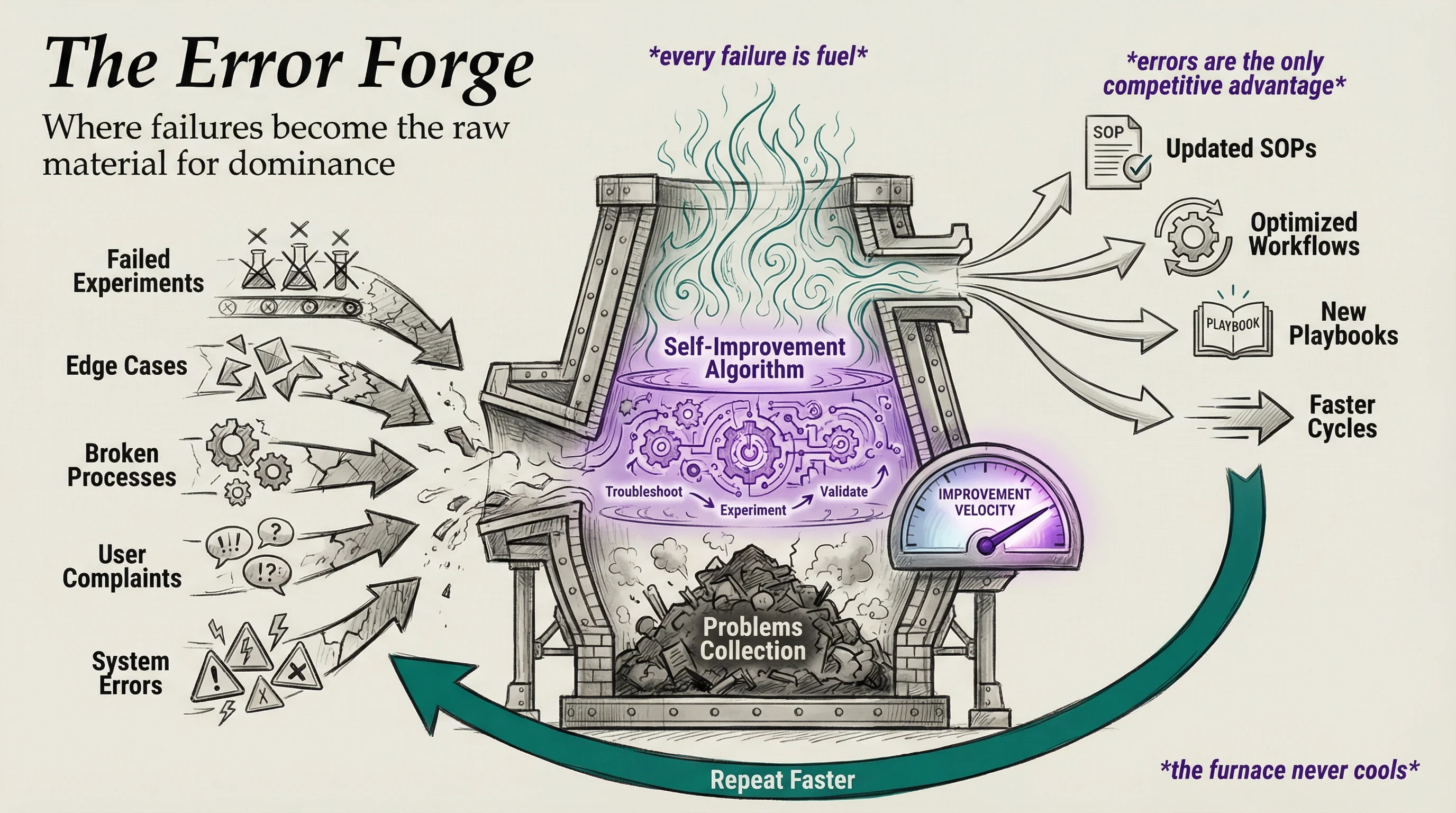

You map out everything you're trying to accomplish in a goal-oriented structure—mission, objectives, workflows, SOPs. Agents execute those workflows. Everything gets extensively logged—the outputs, the conversations, the results, the quality. Whenever there are errors, failures, or quality issues captured in those logs, they flow up into a problems collection point for that entity.

That collection point is where the self-improvement algorithm feeds from. Agents pull from there, create autoresearch-like executions to troubleshoot the problem, experiment with solutions, validate through evals, and optimize. Once they find the fix, they update the SOPs to make sure it doesn't happen again. Then the cycle repeats.

This is the lifecycle for running anything. Map your goals. Execute with agents. Log everything. Collect failures. Improve autonomously. Update the SOPs. Repeat—faster each time.
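To make the cycle concrete, here's a minimal sketch of one pass through it. Everything here is an assumption for illustration: the `Entity` class, the `run_cycle` function, and the stand-in `execute`, `evaluate`, and `propose_fix` functions are hypothetical, and in a real system those stand-ins would be agents and evals rather than string operations.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Entity:
    """Hypothetical organization: SOPs plus logs and a problems collection point."""
    sops: Dict[str, str]                       # workflow name -> procedure text
    logs: List[dict] = field(default_factory=list)
    problems: List[dict] = field(default_factory=list)


def run_cycle(
    entity: Entity,
    execute: Callable[[str, str], List[str]],
    evaluate: Callable[[str, List[str]], bool],
    propose_fix: Callable[[dict], str],
) -> int:
    """Run one improvement cycle and return how many SOPs were fixed."""
    # Execute every workflow, log everything, and collect failures.
    for name, sop in entity.sops.items():
        output = execute(name, sop)
        record = {"workflow": name, "sop": sop, "output": output,
                  "passed": evaluate(name, output)}
        entity.logs.append(record)
        if not record["passed"]:
            entity.problems.append(record)

    # Pull from the problems collection point, try a fix, validate it with
    # the same eval, and update the SOP only when the fix passes.
    fixed, remaining = 0, []
    for problem in entity.problems:
        name = problem["workflow"]
        candidate = propose_fix(problem)
        if evaluate(name, execute(name, candidate)):
            entity.sops[name] = candidate
            fixed += 1
        else:
            remaining.append(problem)
    entity.problems = remaining
    return fixed


# Stand-ins for agents and evals (toy logic, purely illustrative).
def execute(name: str, sop: str) -> List[str]:
    return [step.strip() for step in sop.split(";")]


def evaluate(name: str, output: List[str]) -> bool:
    return "verify" in output


def propose_fix(problem: dict) -> str:
    return problem["sop"] + ";verify"


entity = Entity(sops={"deploy": "build;ship", "backup": "snapshot;verify"})
first = run_cycle(entity, execute, evaluate, propose_fix)
second = run_cycle(entity, execute, evaluate, propose_fix)
print(first, second, entity.sops["deploy"])  # 1 0 build;ship;verify
```

The second pass finds nothing to fix because the first pass already updated the SOP, which is the "make sure it doesn't happen again" step.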

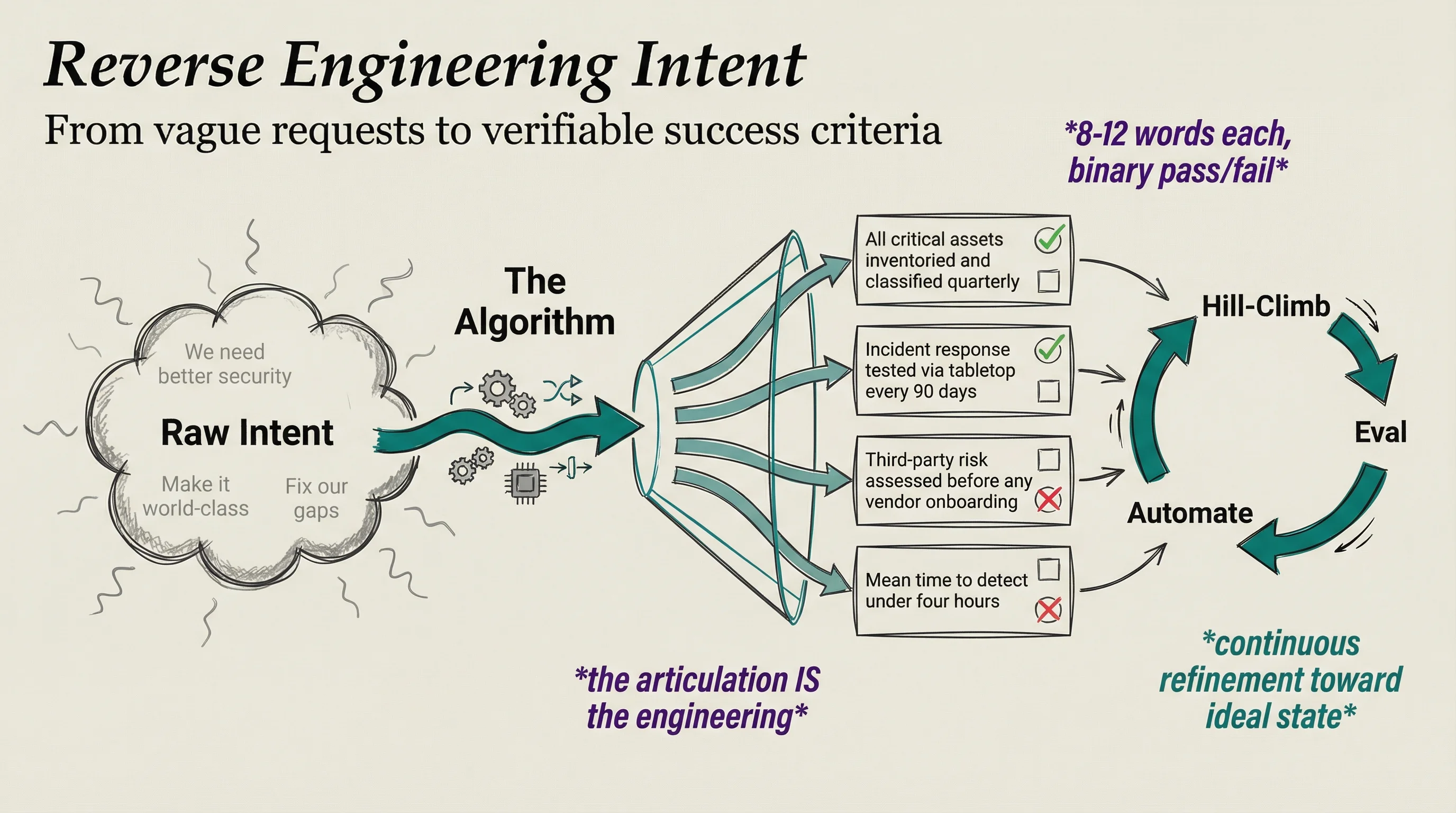

2. The transition to intent-based engineering

The real power of AI is moving from current state to ideal state. Define where you are, define where you want to be, and let AI close the gap. Simple concept, but there's a step before any of that works: you have to be able to articulate what you actually want. And it turns out this is incredibly hard. If you can't describe what good looks like, no amount of tooling helps you.

This is a massive problem for companies. Ask a CEO what their ideal security program looks like and you'll get hand-waving. Ask a team lead what "done" means for their project and you'll get a paragraph that three people interpret three different ways. The articulation gap isn't just between experts and AI—it's between leaders and their own organizations. Most companies can't clearly state what they're trying to do, let alone break it into components you could measure or optimize.

What I've been building inside the Algorithm is exactly this—a way to reverse engineer any request into discrete, testable ideal state criteria. Eight to twelve words each, binary pass/fail. Once you have those, you can hill-climb. You can eval. You can automate improvement. But the whole thing starts with being able to say what you want. That's the new engineering skill—not coding, not prompting. Articulating intent clearly enough that it becomes verifiable.
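As a rough illustration of "eight to twelve words each, binary pass/fail", here's a sketch of how such criteria could be represented and checked. The `Criterion` class, the `evaluate` function, and the incident-summary example are hypothetical and are not taken from the Algorithm itself. The checks are simple string tests standing in for real evals.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Criterion:
    """One ideal state criterion: a short statement plus a binary check."""
    text: str
    check: Callable[[str], bool]

    def __post_init__(self) -> None:
        # Enforce the eight-to-twelve-word length described in the text.
        words = len(self.text.split())
        if not 8 <= words <= 12:
            raise ValueError(f"criterion must be 8-12 words, got {words}: {self.text!r}")


def evaluate(artifact: str, criteria: List[Criterion]) -> Dict[str, bool]:
    """Return pass/fail for each criterion, keyed by its text."""
    return {c.text: bool(c.check(artifact)) for c in criteria}


# Hypothetical request: "write a short incident summary".
CRITERIA = [
    Criterion(
        "Summary names the affected system in the first sentence",
        lambda s: "payments API" in s.split(".")[0],
    ),
    Criterion(
        "Summary is no longer than sixty words in total",
        lambda s: len(s.split()) <= 60,
    ),
    Criterion(
        "Summary states whether customer data was exposed or not",
        lambda s: "customer data" in s.lower(),
    ),
]

draft = "The payments API was down for 40 minutes. No customer data was exposed."
results = evaluate(draft, CRITERIA)
print(all(results.values()))  # True
```

Forcing each criterion into a short sentence with a yes/no check is the point: anything too vague to check this way isn't yet articulated well enough to hill-climb against.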

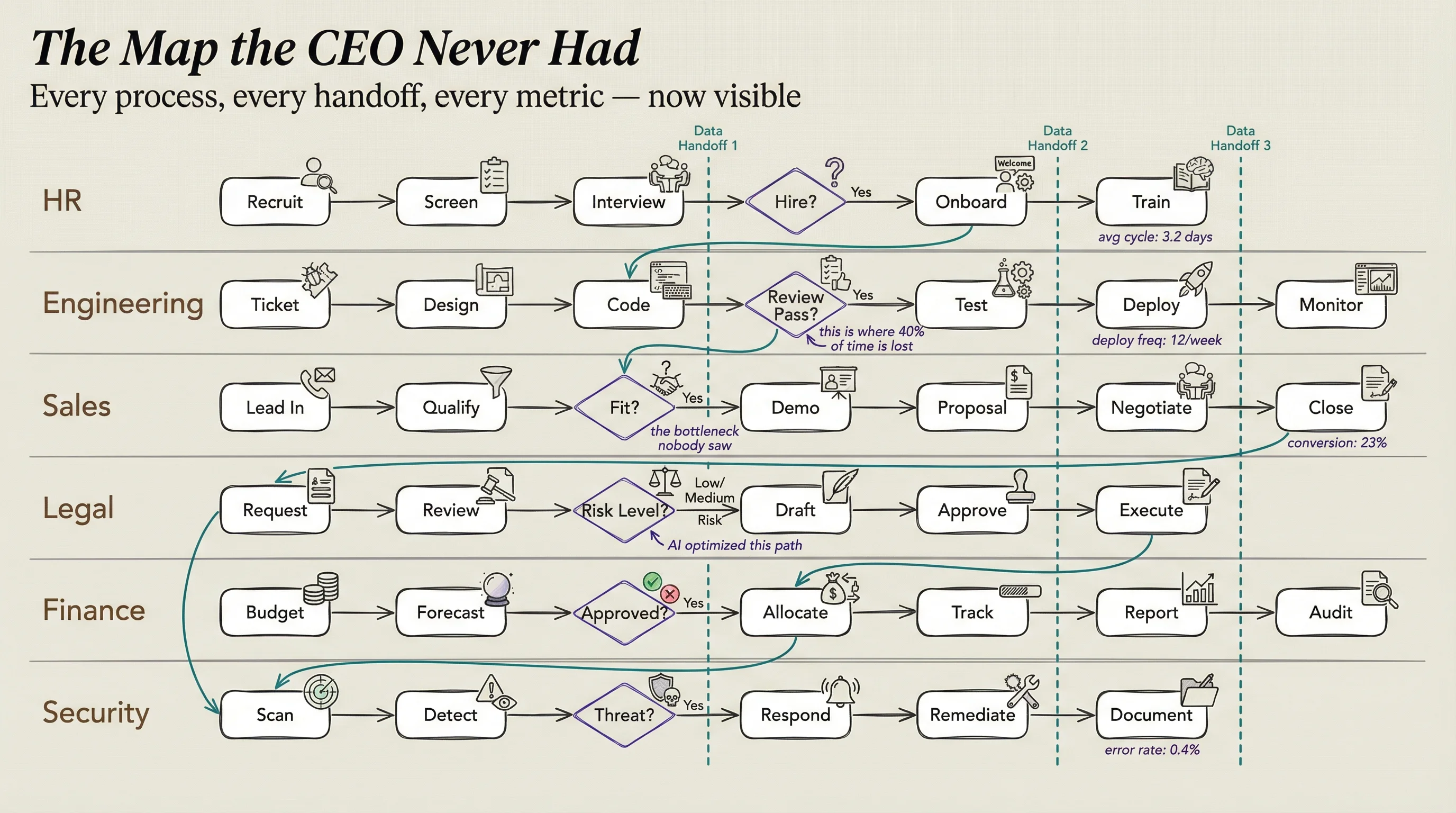

3. The move from Opacity to Transparency

Companies have never really been able to see what's happening inside their own walls. How much does this process actually cost? How long does it really take? What's the quality of the output? Who's doing the work vs. who's doing the scaffolding around the work?

Most organizations run on vibes and spreadsheets. AI makes all of that visible. The actual work, the actual costs, the actual quality—all of it becomes measurable in ways that were never practical before. And once you can see it, you can improve it. That applies to businesses, governments, teams of three people—anything you want to point it at.

And one of the first things transparency reveals is how much of the work was never really the work.
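Here's a small sketch of the kind of visibility this implies, assuming agents log every step with its time, cost, and whether it was the actual work or the scaffolding around it. The record fields, process names, and numbers below are all made up for illustration.

```python
from collections import defaultdict
from typing import Dict, List

# Hypothetical log records. Field names and values are invented.
LOGS: List[dict] = [
    {"process": "pentest-report", "kind": "scaffolding", "minutes": 90, "cost_usd": 150.0},
    {"process": "pentest-report", "kind": "work", "minutes": 30, "cost_usd": 50.0},
    {"process": "vendor-review", "kind": "scaffolding", "minutes": 45, "cost_usd": 60.0},
    {"process": "vendor-review", "kind": "work", "minutes": 15, "cost_usd": 20.0},
]


def summarize(logs: List[dict]) -> Dict[str, dict]:
    """Total time and cost per process, plus the share of time spent on scaffolding."""
    totals: Dict[str, dict] = defaultdict(
        lambda: {"minutes": 0, "cost_usd": 0.0, "scaffolding_minutes": 0}
    )
    for record in logs:
        t = totals[record["process"]]
        t["minutes"] += record["minutes"]
        t["cost_usd"] += record["cost_usd"]
        if record["kind"] == "scaffolding":
            t["scaffolding_minutes"] += record["minutes"]

    summary = {}
    for process, t in totals.items():
        share = t["scaffolding_minutes"] / t["minutes"] if t["minutes"] else 0.0
        summary[process] = {**t, "scaffolding_share": share}
    return summary


summary = summarize(LOGS)
for process, stats in summary.items():
    print(process, stats["minutes"], stats["cost_usd"], stats["scaffolding_share"])
```

Once every step is logged this way, questions like "how much does this process actually cost?" become a lookup rather than a guess.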

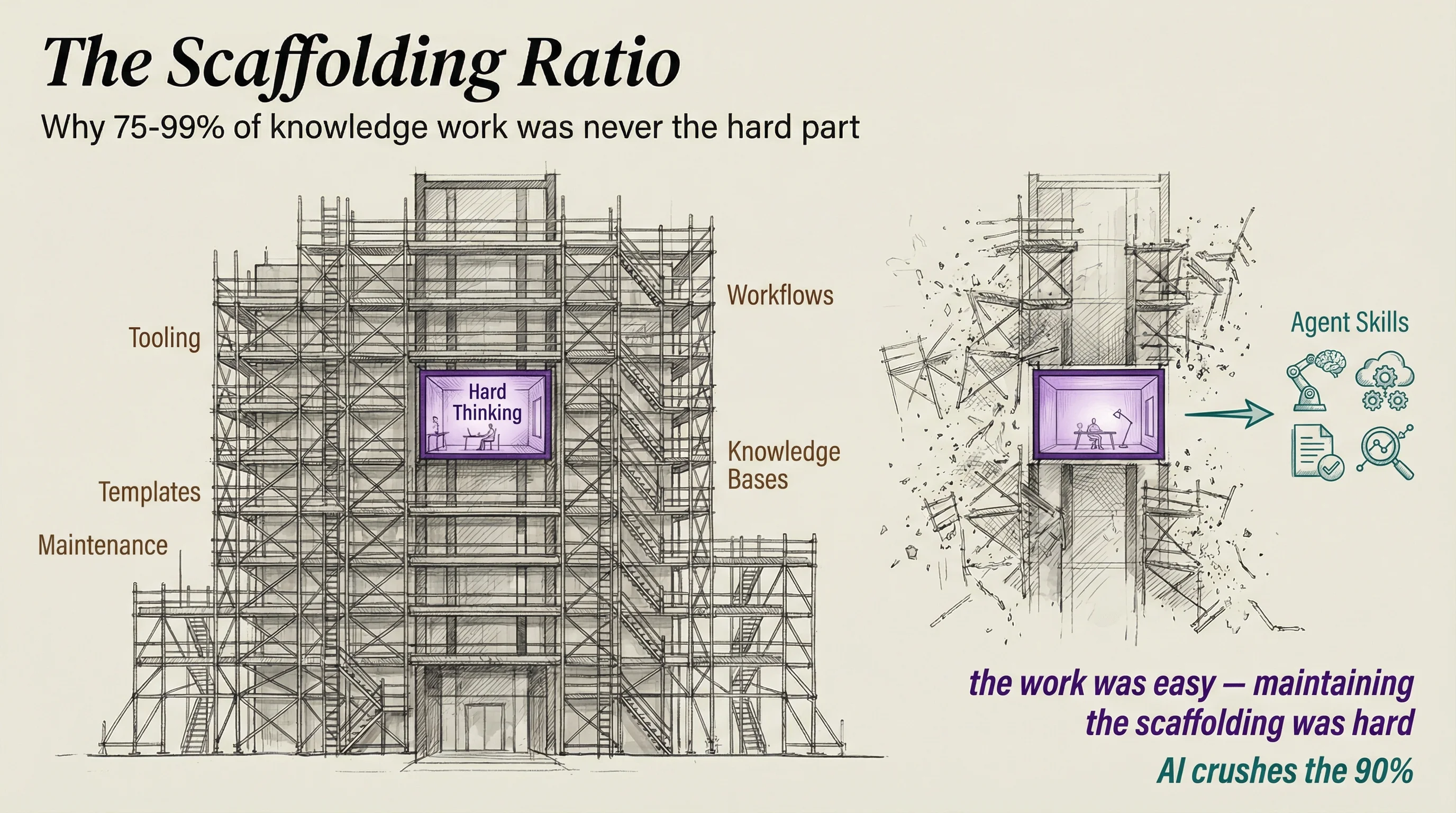

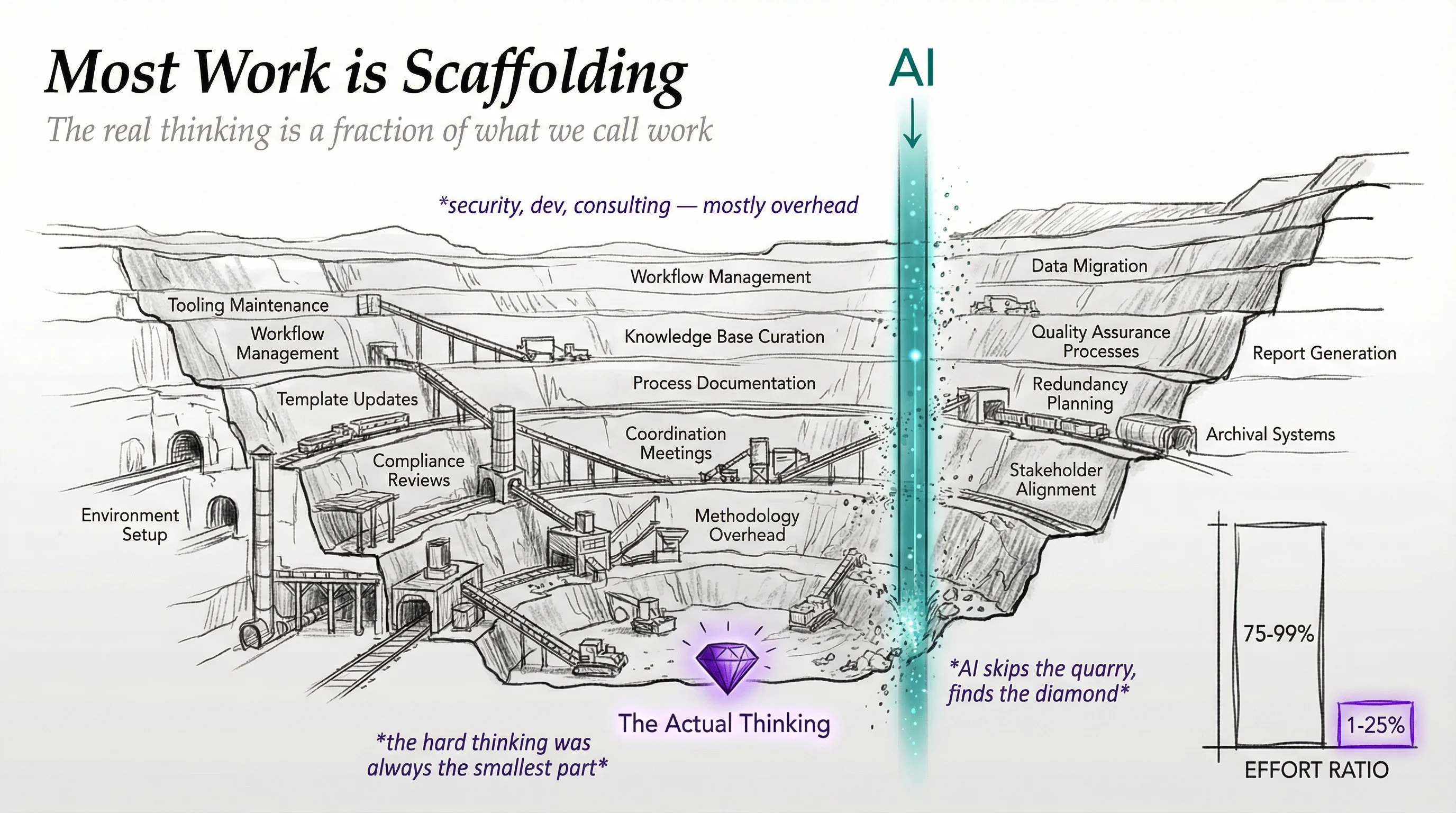

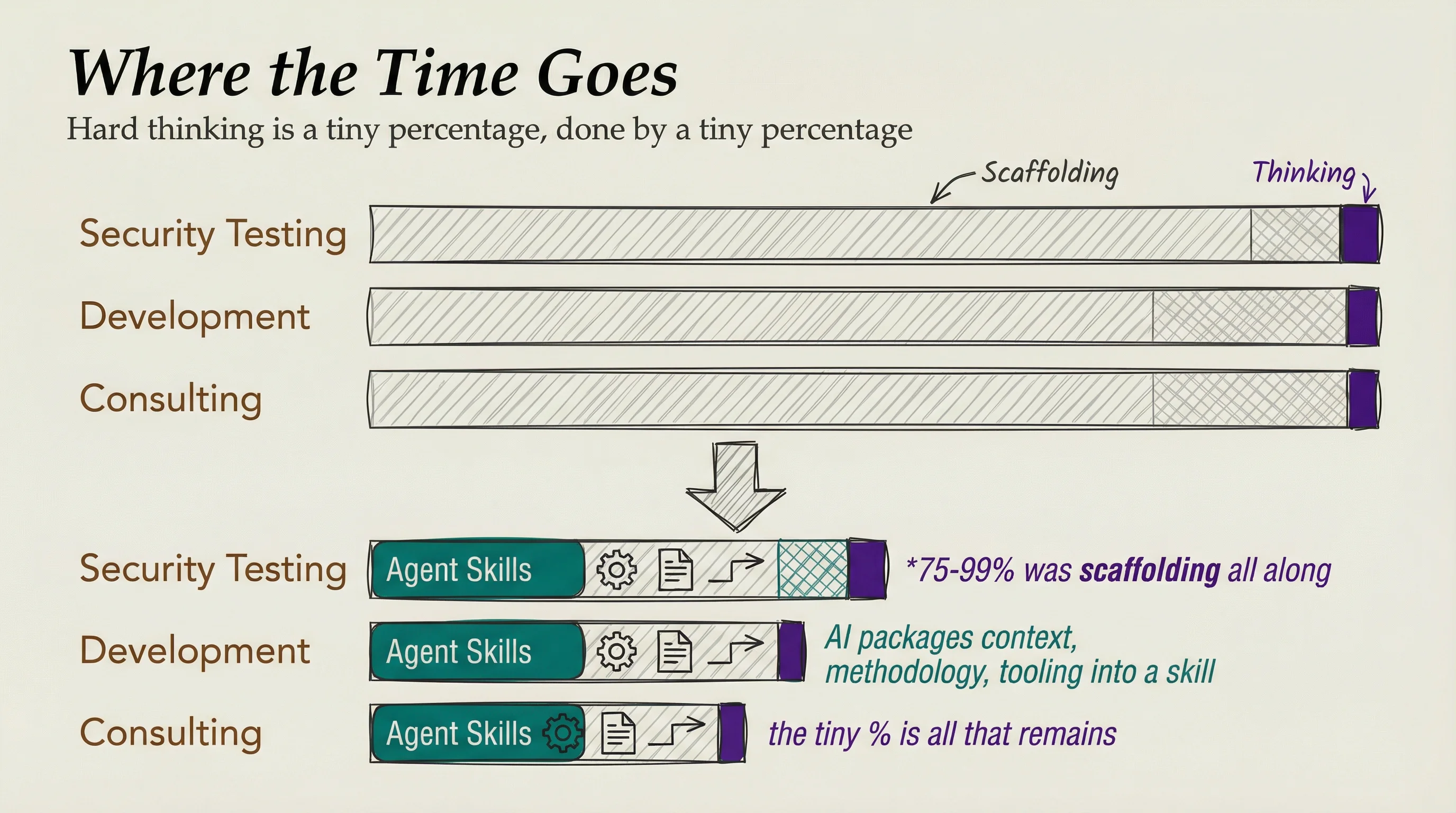

4. Most work is scaffolding

AI is revealing that 75-99% of knowledge work is scaffolding overhead. In security testing, development, consulting—most of the time goes to maintaining tooling, workflows, templates, and knowledge bases. The actual hard thinking is a tiny percentage, done by a tiny percentage of people, a tiny percentage of the time.

AI absolutely crushes the scaffolding part. Agent Skills have shown you can package all that context, methodology, and tooling into a skill, and the AI executes as well as or better than most professionals. The work wasn't hard. Maintaining the scaffolding was.

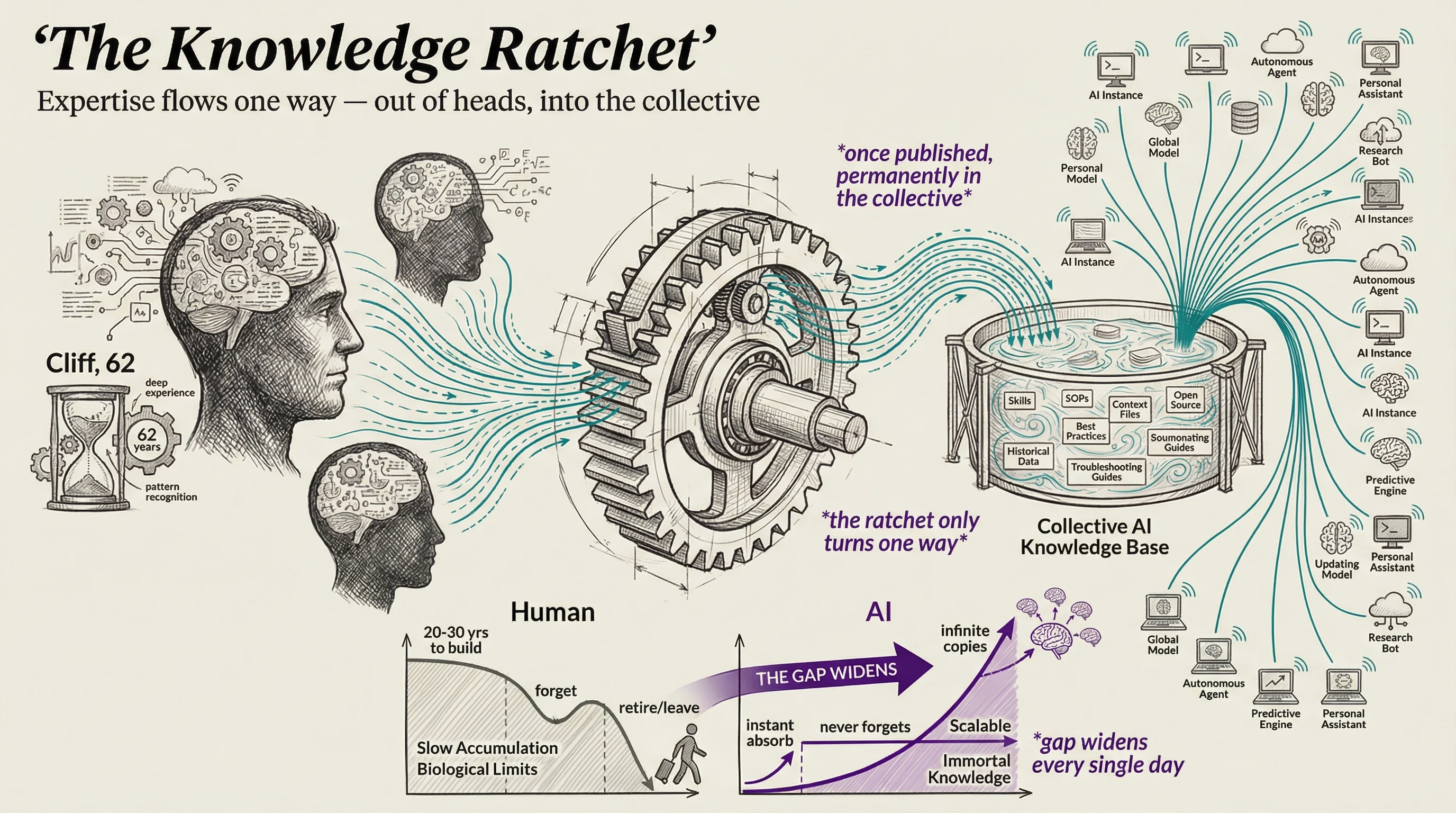

5. Expertise gets diffused into public knowledge

There's an articulation gap between what experts know and what's written down. Most expertise lives in people's heads. Think of Cliff, the 62-year-old who knows how everything works but never documented any of it. When Cliff retires, that knowledge dies with him.

What's happening now is that expertise is dispersing from brains into skills, SOPs, context files, open source projects. And once it's captured it never comes back out. It's like pee in the pool. Every skill published, every process documented, every expert debrief captured—it permanently enters the collective knowledge base. And it makes every AI instance smarter. Not one. All of them. Simultaneously.

This is a one-way ratchet. Humans take 20-30 years to develop deep expertise in a single domain. They forget things, they retire, they leave companies. AI absorbs all captured expertise instantly, never forgets, and can be duplicated infinitely. The gap between human expertise accumulation and AI expertise accumulation is widening every single day.

Implications

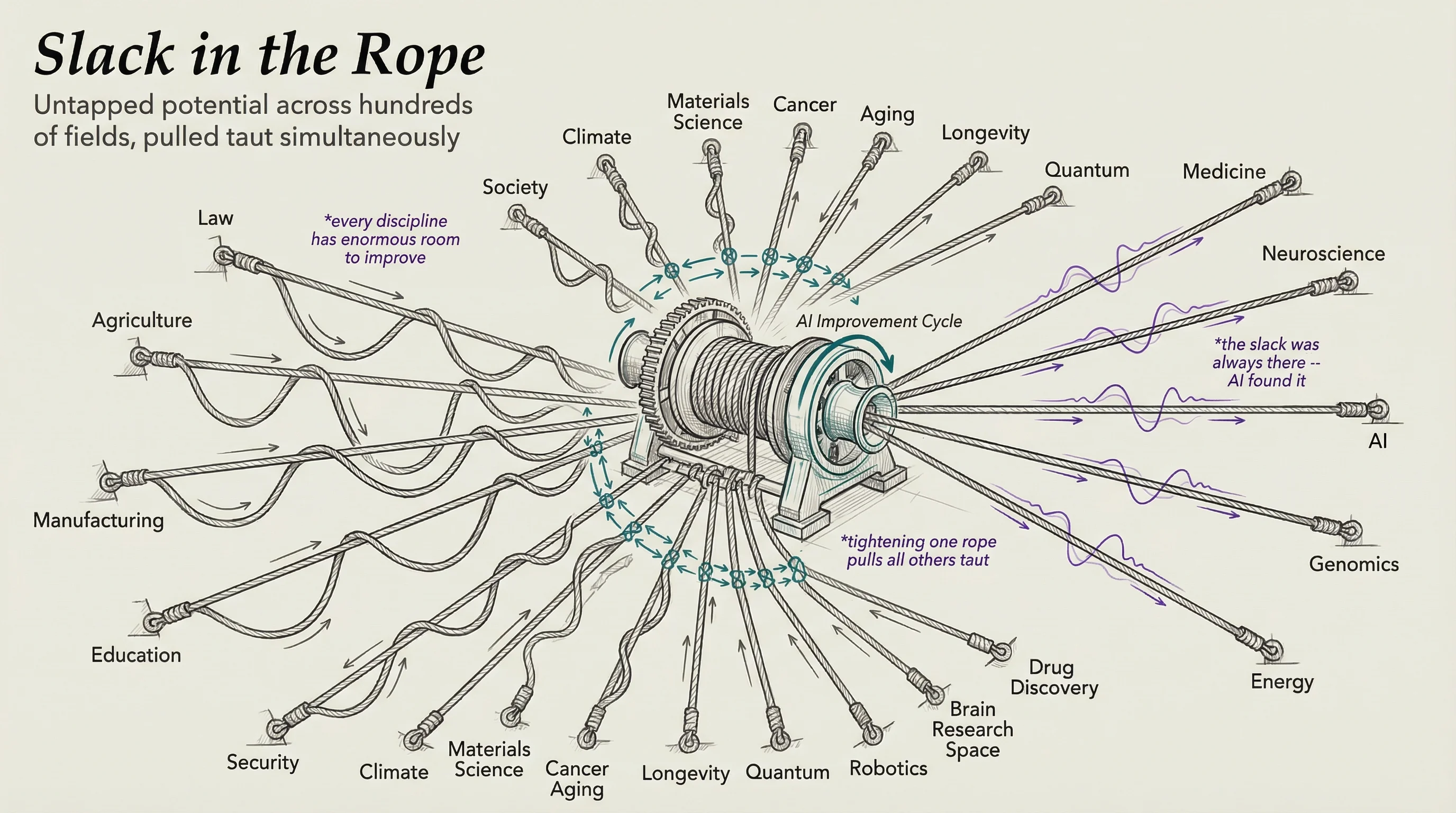

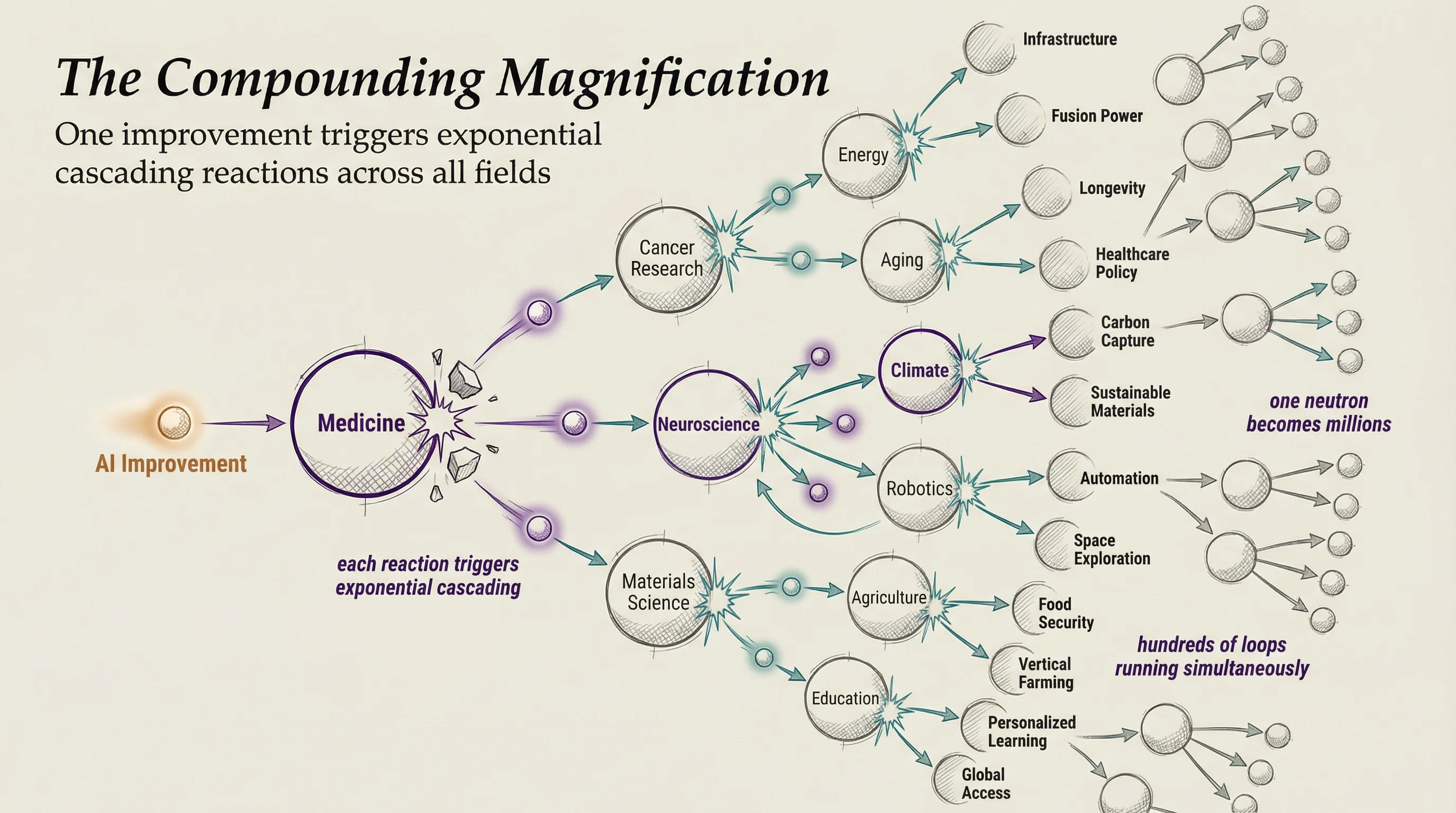

Autonomous improvement changes the speed of everything

The speed of improvement in many fields is about to accelerate beyond anything we've seen. When you can define what good looks like, measure against it, and iterate automatically—things that used to take months of manual tuning happen overnight. Autoresearch showed this for ML research. But this applies to security programs, consulting deliverables, content pipelines, hiring processes. Anything with a definable ideal state becomes autonomously improvable.

Every entity—companies, governments, teams, individuals—will run this same cycle: map goals, execute with agents, log everything, collect failures, improve autonomously, update SOPs. The entities that adopt this first will improve so fast that the ones who don't will be unable to compete.

Intent becomes the bottleneck

The new scarce skill isn't coding or prompting—it's being able to say what you actually want. And it has to be high-quality intent. The quality of the idea is always the most important thing. But the second most important is the ability to articulate it, define it as your actual goal, and orient the entire company around it. Most leaders can't do this. Most companies can't do this. The ones who figure it out first get to point all these optimization tools at real targets while everyone else is still hand-waving about OKRs.

Everything becomes transparent

We are about to see the world go from opaque vibes to transparent and optimizable components. Frauds and gatekeepers will have increasingly few places to hide.

This also makes it way harder to compete when you're selling products or services. Because the first thing the agents are going to ask is, "What are your metrics?" Not your marketing copy. Not your customer quotes. Your actual, verifiable performance data. If you don't have it, you lose to someone who does.

Scaffolding gets commoditized

The wizardry around certain fields and professions will be revealed as scaffolding that was simply not understood by most people, such as how to stand up a particular dev environment and keep things running long enough to write some code. The same goes for law, consulting, and other high-paying professions.

Expert knowledge becomes public infrastructure

The knowledge that only experts used to have will soon be available to everyone, and most importantly to AIs. Having 50 years of experience in a field won't be an advantage for much longer, because that knowledge will have been extracted, either from the experts themselves or from their colleagues elsewhere in the world.

Summary and takeaway

The craziest thing about this is that these ideas all play off and magnify each other.

It's not just that we're going to be able to improve all these different components; it's the fact that the speed of the improvement itself will improve.

Every company, every government, every organization is going to converge on the same cycle: define what you're trying to do, execute with agents, log everything, collect failures, and let the system improve itself. The ones that get there first will compound so fast that everyone else won't be able to catch up.

Of all these ideas, this is the most important to take away.

I cannot express to you how insane this is about to be.

Notes

- Karpathy, Andrej. "Software 2.0." Medium, Nov 2017.

- Karpathy, Andrej. "Autoresearch." GitHub, 2026.

- Miessler, Daniel. "AI is a Gift to Transparency." danielmiessler.com, May 2023.

- Miessler, Daniel. "AI's Ultimate Use Case: State Management." danielmiessler.com, Feb 2025.

- Miessler, Daniel. "Personal AI Infrastructure." danielmiessler.com, 2025.

- Miessler, Daniel. "How to Talk to AI." danielmiessler.com, Jun 2025.

- Miessler, Daniel. "Pursuing the Algorithm." danielmiessler.com, Jan 2026.

- Miessler, Daniel. "Nobody is Talking About Generalized Hill-Climbing." danielmiessler.com, Feb 2026.

- Miessler, Daniel. "Exactly Why and How AI Will Replace Knowledge Work." danielmiessler.com, Mar 2026.

- Miessler, Daniel. "AI Unmasked Our Work as Scaffolding." danielmiessler.com, Mar 2026.

- Scale AI and subsidiary Outlier AI — 700K+ PhDs and credentialed experts doing RLHF. Meta acquired 49% stake for $14.3B (June 2025).

- Surge AI — $1B+ annual revenue hiring domain experts in law, medicine, and coding for RLHF. Works with Anthropic, OpenAI, Google.

- Aligned — Expert RLHF from MIT, Stanford, and Harvard specialists.

- Prolific — 1,500+ verified domain experts. 30% accuracy improvement vs. general workers.

- OpenTrain AI — 100K+ pre-vetted experts across 130 countries.

- CleverX — 100K+ experts across 33+ verticals.

- Pareto AI — Expert-labeled training data for frontier AI companies.