Exactly Why and How AI Will Replace Knowledge Work

There's a constant discussion happening right now about AI and jobs. The dominant narrative says don't worry—humans are irreplaceable, and people who've been trying to move to AI will soon realize their mistakes.

I believe that thinking is both wrong and dangerous. Wrong because of how humans and AIs actually do work. Dangerous because if you don't believe the threat is real, you won't adequately prepare.

I want to talk about why most companies fail, what they actually look like today, why human thinking is way less reliable than we pretend (but still better in important ways), what AI-first companies will look like, and why all of this is ultimately a good thing.

A quick note on my background

I normally don't talk about my background in these pieces because they're about my ideas, not about me. But in this case I'm making a bunch of claims about enterprises and work that raise the question—who the hell is this guy?

I've been helping companies of all sizes since 2003. I've held senior roles at companies like Apple, Robinhood, and HP, and I've spent long stretches embedded deep inside multiple Fortune 50 companies. Most importantly, I've consulted for hundreds of companies, from massive enterprises to startups, helping them with everything from offensive security to full organization redesigns.

So I've seen the inside of a lot of companies. And what I've seen has given me a very clear picture of what's coming.

Why most companies fail

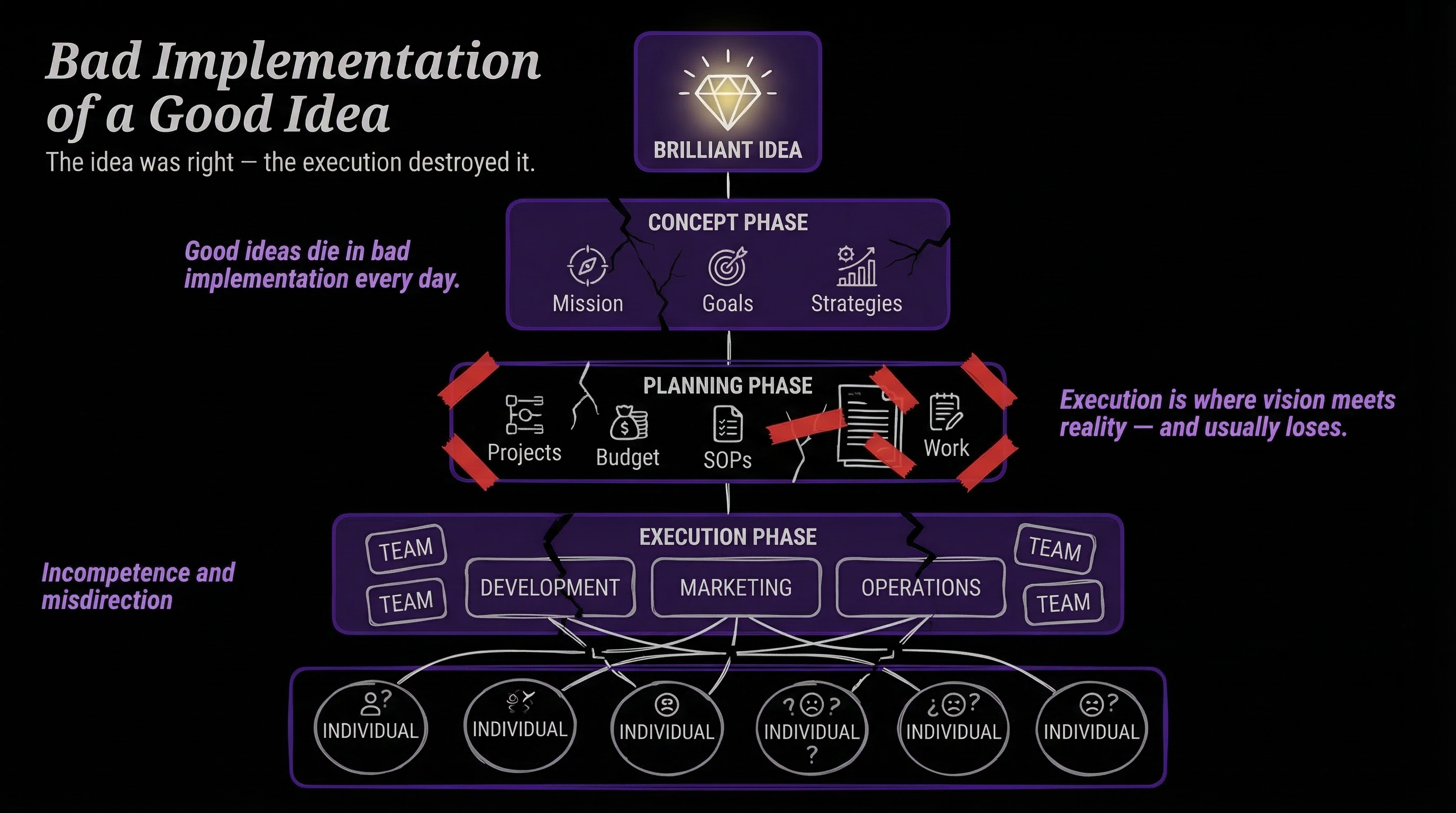

Companies basically fail for two reasons:

- Bad idea. Game over.

- Bad implementation of a good idea. And this is most of them.

The implementation failures cascade in predictable ways:

- The vision isn't clear—or the vision is clear but the plan isn't

- The plan is clear but the work isn't being done

- There aren't processes, or people don't follow the processes

- The people aren't knowledgeable or smart enough to do the work

- Work is done too inconsistently

- There's too much change, and it takes too long to propagate through the company

- Competition and fighting between management, departments, and employees

Every single one of these is a communication and coordination problem. That matters more than you'd think.

What most companies look like today

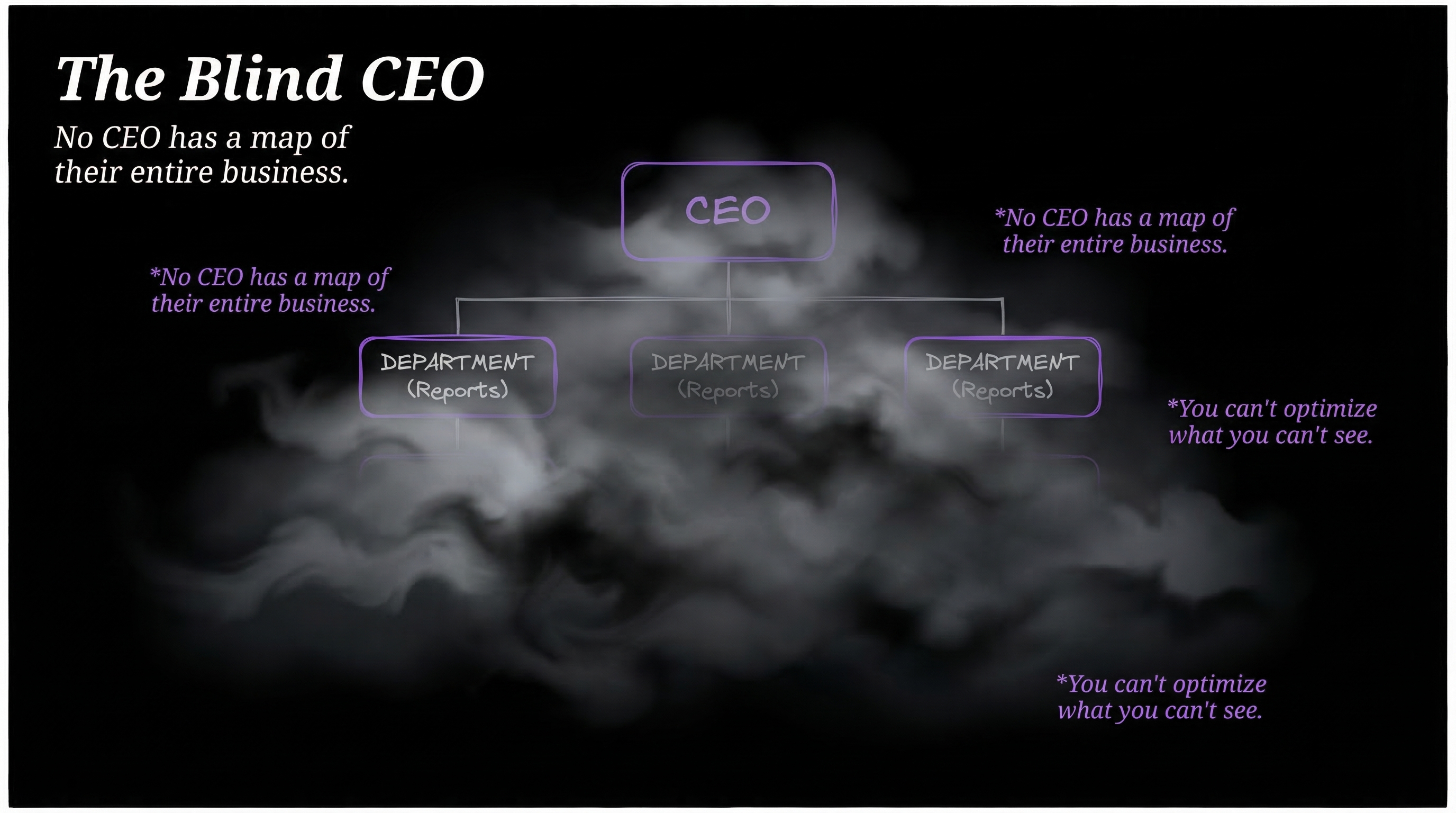

Nobody in leadership wants to admit this, but most companies are lucky to function at all.

- The vision is not clear

- There's constant churn in what people are supposed to be doing

- Constant meetings about meetings

- Massive disconnect between leadership and workers

- Documentation exists but people don't always follow it

- No CEO has a complete map of their entire business

- It's impossible to know who's doing what

- It's impossible to know what all the company's workflows actually are

- It's not clear where all the time and money is going

- Whatever information does exist is scattered across PowerPoints and docs, constantly changing

- People ultimately feel like their work isn't valued

This is the current state of the enterprise. This is what $50 trillion a year buys us.

The human reliability myth

There's a narrative right now that AI is horribly unreliable compared to humans. As if AI is the consistency problem and humans are the solution.

It's not that simple.

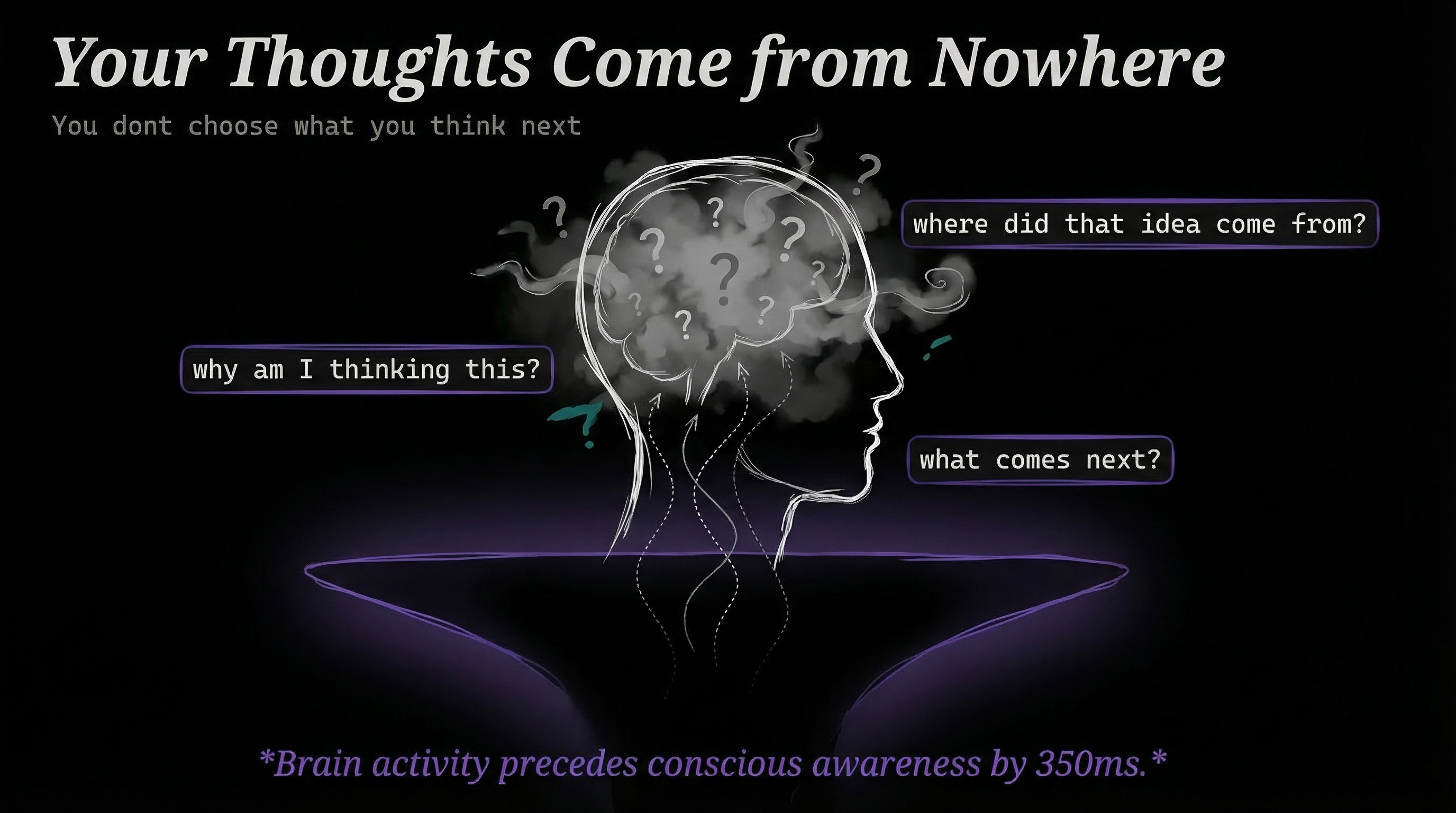

Your thoughts come from nowhere

People have zero idea what they're currently thinking, why they're thinking it, or what comes next. Thoughts arise from a void no one can locate. Libet's experiments showed that brain activity precedes conscious awareness of a decision by ~350 milliseconds. Your brain decides before you know it. As Sam Harris puts it, "You no more decide the next thought you think than you decide the next thought I write."

Your words come from nowhere

When you form a sentence, you don't know how it ends until the words come out of your mouth. Every utterance arrives from a black box as mysterious as any neural network—you just can't inspect the weights.

Your memory rewrites itself

The act of recalling a memory literally rewrites it. Each retrieval can introduce distortion, and those distortions compound over time. After months or years, the stored version and the actual event can drift far apart.

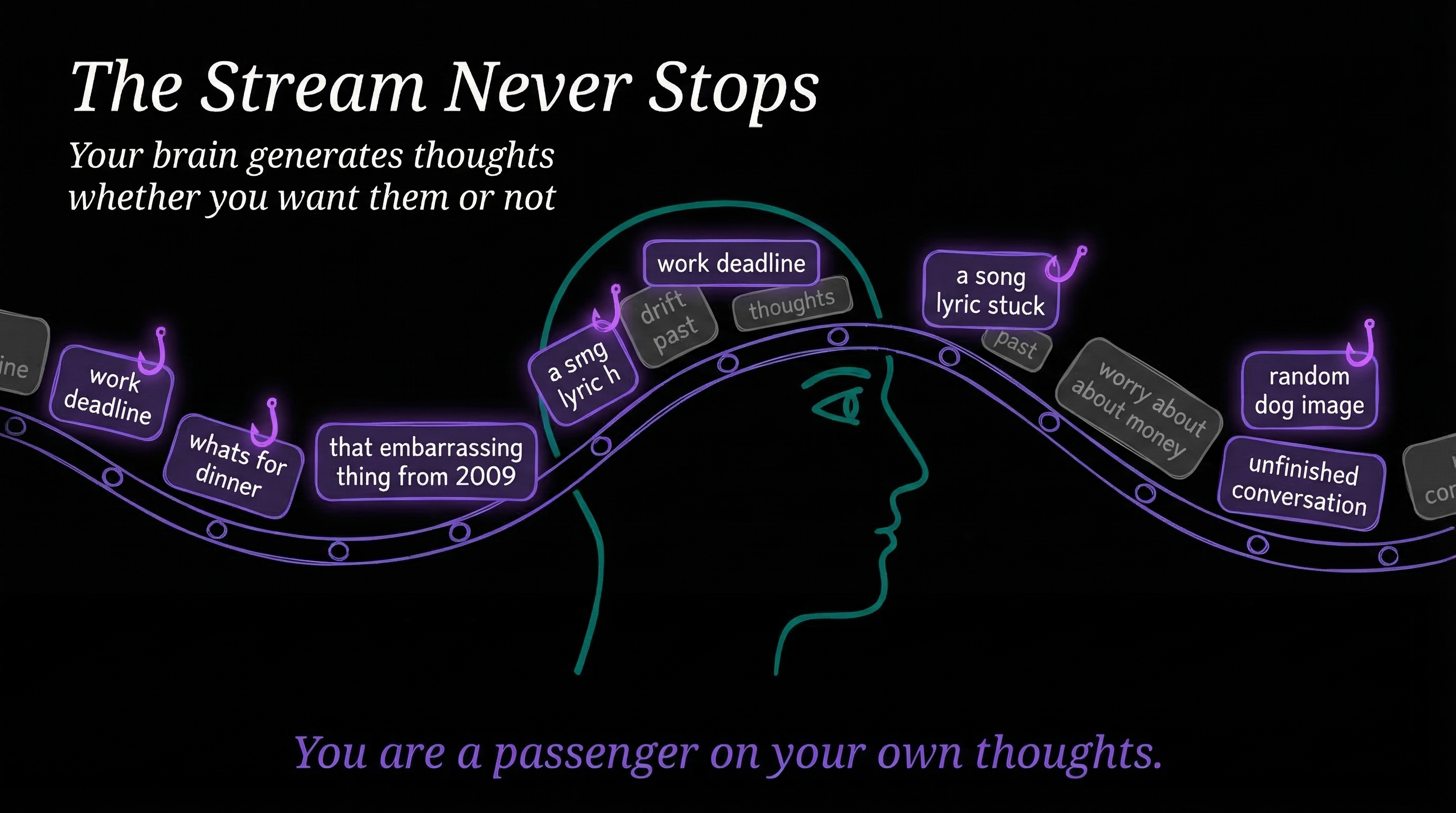

We're passengers on our own thoughts

Try this right now. Close your eyes and pay attention to what you're thinking. Just watch your thoughts for 30 seconds.

You'll notice two things. First, you can barely do it. Your mind wanders within seconds. Second, before you tried, you had no idea what was running through your head. Thoughts were just happening and you were along for the ride.

Research confirms this. People spend 47% of their waking hours thinking about something other than what they're currently doing. Even experienced meditators cycle through attention and mind-wandering on a timescale of seconds—meditation trains you to notice the drift faster, not to prevent it. And in nearly half of mind-wandering episodes, people have no idea they drifted until something snaps them back. Your brain runs a constant stream of rumination, planning, worrying, and random association, and you're mostly just a passenger.

This is the system we're comparing AI against.

Baseline knowledge is really low

40% of Americans didn't read a single book last year. Only 27% can pass a basic financial literacy quiz. Over 70% fail a basic civics test. 28% of US adults can't read at workplace proficiency levels—and that number is getting worse, not better.

And then there's the workplace

The numbers here are brutal. Only 21% of workers globally are engaged. 62% are doing the minimum. 15% are actively working against their own companies. Around 70% dread the start of the work week. Only 15% of frontline workers say their work fulfills their sense of purpose. And nearly half of all workers are watching for or actively seeking a new job.

This isn't new. We've been complaining about corporate life since the cubicle was invented in 1967. Dilbert launched in 1989. Office Space came out in 1999. Quiet quitting went viral in 2022. It's the same frustration, generation after generation, just with new language each time.

And all of this costs money. A lot of money.

Roughly $55 trillion a year globally. Nearly $12 trillion just in the US.

Sources: ILO Global Wage Report; BLS Employment & Wages.

That's total compensation across all industries—not just knowledge workers. But the knowledge work portion is where the dysfunction concentrates. These are the people who can't find information, don't follow processes, sit in meetings about meetings, and lose half their day to thoughts they didn't choose to have. Tens of trillions a year for a system where the CEO can't see what's happening, the workers don't want to be there, and most of the information is wrong, out of date, or lost.

That number is exactly why AI is coming for all of it. Not because companies hate people, but because more than $50 trillion a year is an extraordinary amount of money to spend on a system this broken. When AI can do most of this work faster, cheaper, and more consistently, the economic outcome is inevitable.

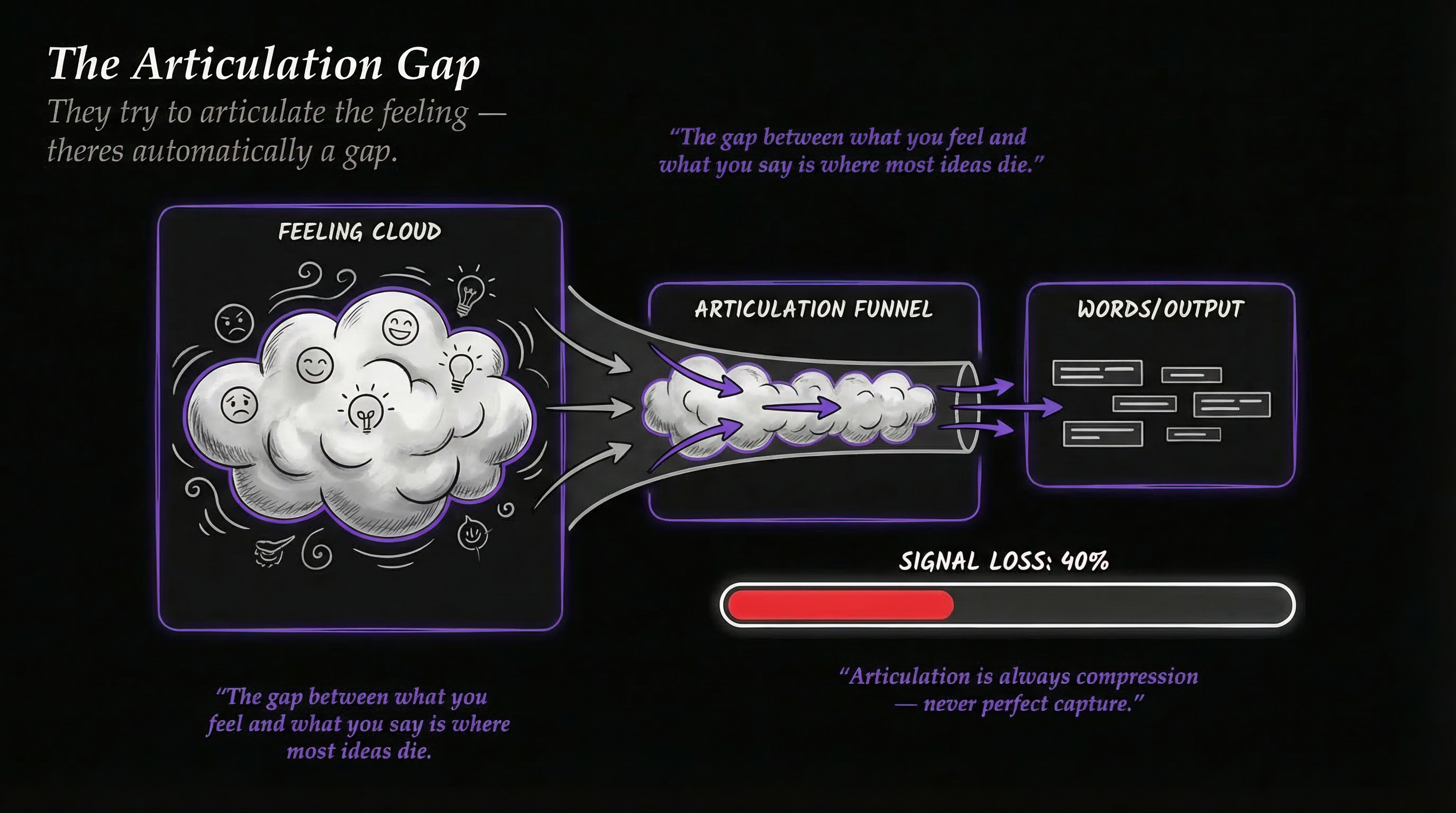

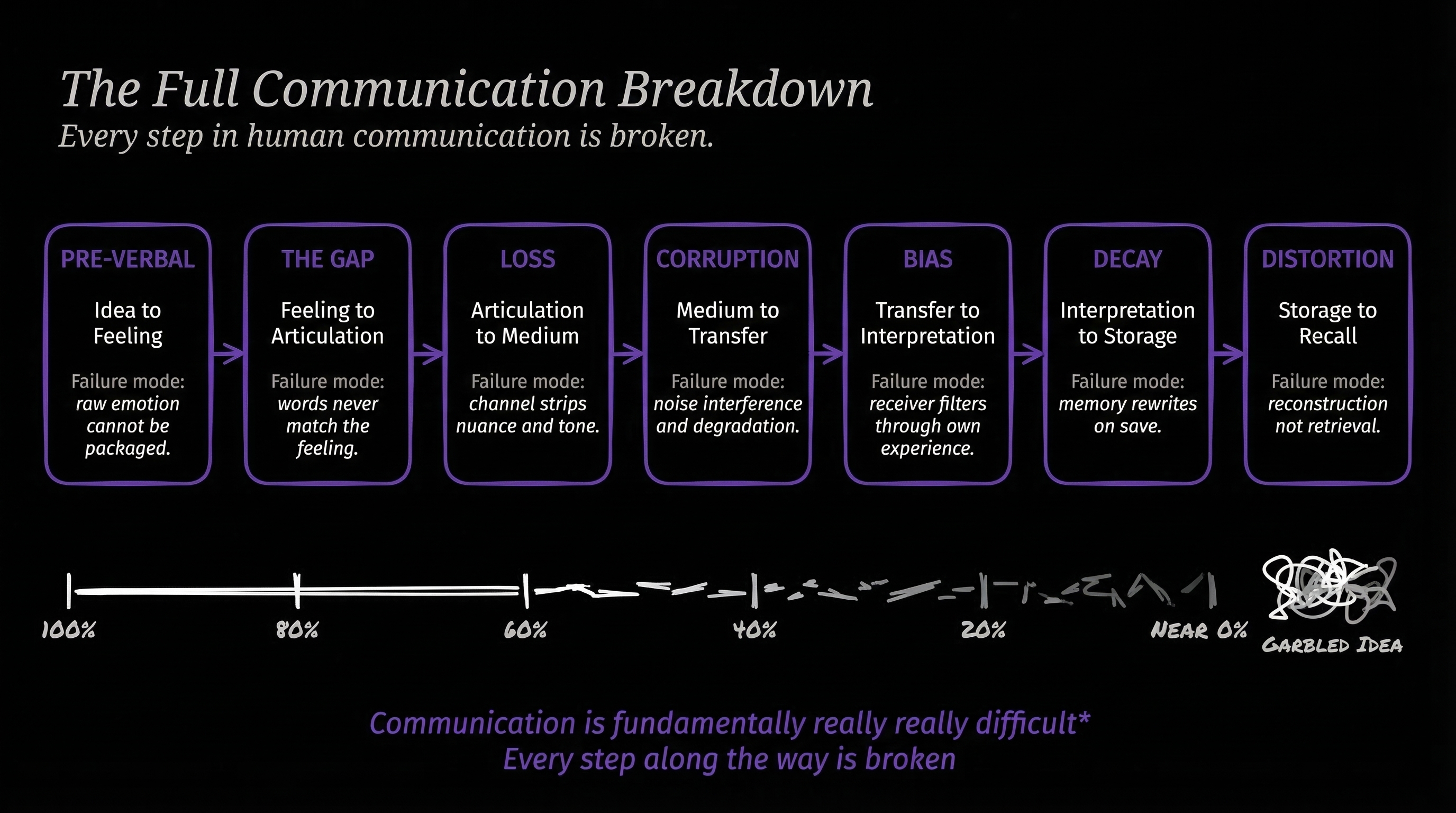

The communication breakdown chain

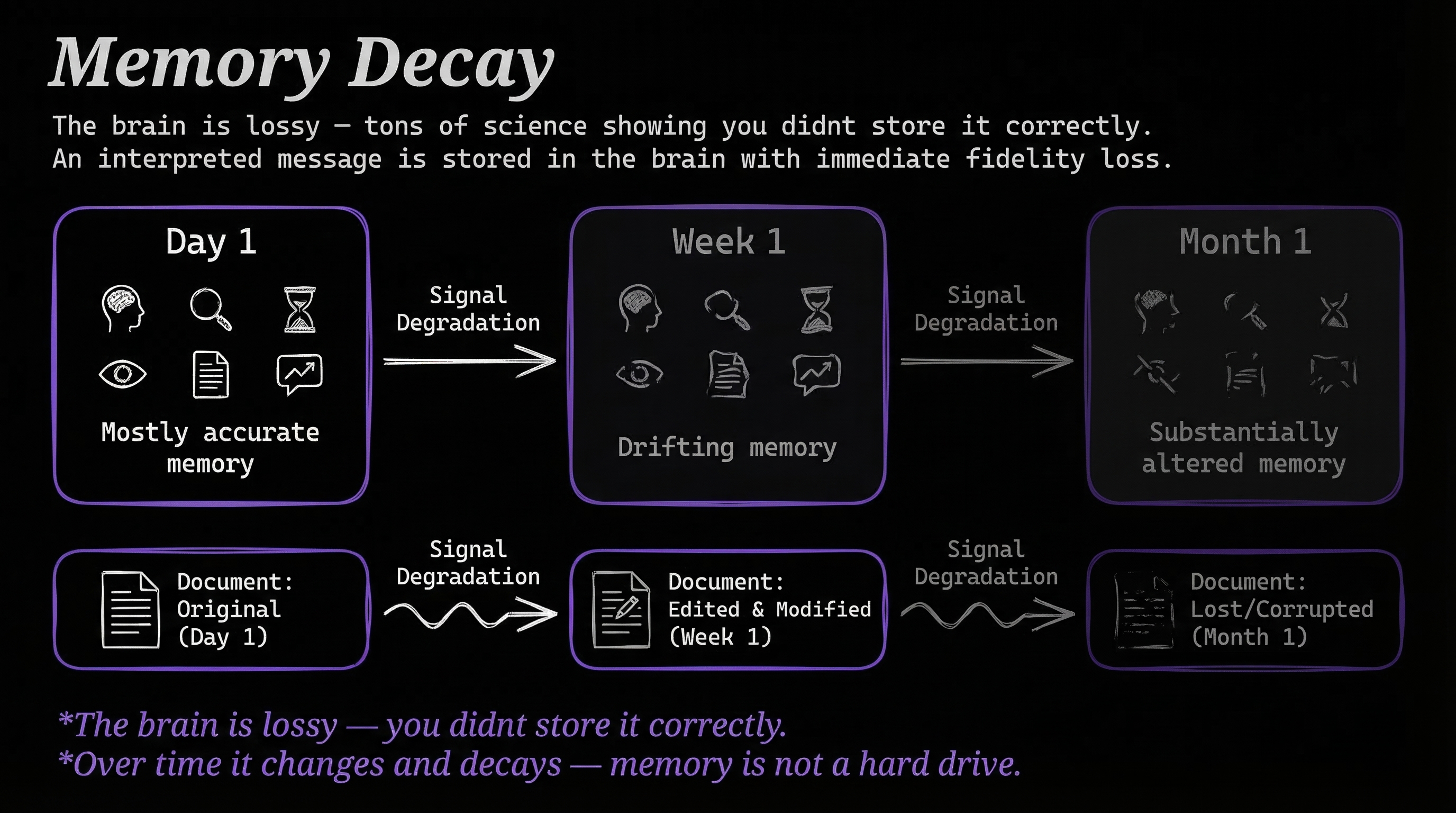

Companies are this dysfunctional because communication itself is broken. Every step in the chain loses signal.

- Idea → Feeling—An idea pops up from a place you don't even understand. It's a feeling, not words.

- Feeling → Articulation (GAP)—You try to put the feeling into words. There's automatically a gap. The words don't capture it.

- Articulation → Medium (LOSS)—You speak it, write it, sign it. Another loss. You didn't write it down correctly.

- Medium → Transfer (CORRUPTION)—Bad Zoom call. Garbled recording. Email buried in a thread. The medium corrupts the signal.

- Transfer → Interpretation (BIAS)—The receiver reads it and brings their own context, experience, and assumptions.

- Interpretation → Storage (DECAY)—The brain is lossy. Tons of science shows you didn't store it correctly. Over time it changes and decays.

- Alternative Storage—Maybe it's written down. But what's the state of that document? Changed? Modified? Lost?

Every step loses signal. By the time the CEO's vision reaches the front-line worker, it's a garbled mess.
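To see why the losses compound, here is a minimal sketch that treats each link in the chain as passing along only a fraction of what it receives. The retention values are made-up assumptions for illustration, not measurements, and the side branch of alternative storage is left out. The point is only that the losses multiply rather than add.

```python
from math import prod

# Illustrative assumptions only: the fraction of the signal each step passes on.
RETENTION = {
    "idea -> feeling": 0.95,
    "feeling -> articulation": 0.90,
    "articulation -> medium": 0.90,
    "medium -> transfer": 0.95,
    "transfer -> interpretation": 0.85,
    "interpretation -> storage": 0.90,
}


def one_hop(retention: dict[str, float]) -> float:
    """Signal left after one person passes a message to another,
    assuming each step's loss is independent and multiplicative."""
    return prod(retention.values())


def after_hops(retention: dict[str, float], hops: int) -> float:
    """Signal left after the message is relayed `hops` times,
    with every relay running the full chain again."""
    return one_hop(retention) ** hops


if __name__ == "__main__":
    # Hypothetical reporting chain: CEO -> VP -> director -> manager -> frontline = 4 hops.
    print(f"one hop:   {one_hop(RETENTION):.0%}")
    print(f"four hops: {after_hops(RETENTION, 4):.0%}")
```

With these invented numbers, a single handoff keeps about 56% of the signal, and four handoffs keep roughly 10%. Fairly generous per-step values still decay quickly once they are multiplied down a reporting chain.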

Companies, employees, and work

If you break it down, companies, employees, and work are pretty simple things.

What is a company?

A company is largely made up of:

- Ideas and leadership

- Goals and plans

- Metrics and work

- Cost, quality, and process

Most everything else is derived from those.

What do employees provide?

Employees mostly provide some combination of:

- Knowledge—facts about the world and their domain

- Understanding—the ability to turn knowledge into concepts and make connections

- Intelligence—the ability to use understanding to navigate problems and achieve goals

- Creativity—the ability to come up with something new and valuable

What is work?

One way of thinking about work is that it's mostly:

- Having knowledge about something

- Taking instructions on what to do with that knowledge

- Following those instructions

- Adapting when the instructions change

Work is a series of steps. It's an algorithm.

I wrote about this in Companies Are Just a Graph of Algorithms. Take any company and break down what it does. A photo company receives an image, scans it, cleans it up, stylizes it, adds a caption, and delivers it. That's a series of steps. Each step breaks down into sub-steps. Those sub-steps break down further. It's algorithms all the way down.
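The photo example maps almost directly onto code. This is a minimal sketch under a few assumptions: each step is a function that takes a job record and returns an updated one, and a step can also be a list of sub-steps. The step names and the dict-based job record are hypothetical placeholders for real image processing.

```python
from typing import Callable, Union

Job = dict  # hypothetical job record handed from step to step
# A step is either an atomic function or a list of sub-steps (which may nest further).
Step = Union[Callable[[Job], Job], list]


def run(step: Step, job: Job) -> Job:
    """Execute a step; a list is executed by running its sub-steps in order."""
    if callable(step):
        return step(job)
    for sub_step in step:
        job = run(sub_step, job)
    return job


def stub(name: str) -> Callable[[Job], Job]:
    """Hypothetical placeholder step that only records that it ran."""
    def _step(job: Job) -> Job:
        return {**job, "history": job.get("history", []) + [name]}
    return _step


PHOTO_PIPELINE = [
    stub("receive"),
    stub("scan"),
    [stub("remove dust"), stub("fix exposure")],  # "clean it up" splits into sub-steps
    stub("stylize"),
    stub("caption"),
    stub("deliver"),
]

if __name__ == "__main__":
    result = run(PHOTO_PIPELINE, {"image": "photo-001.jpg"})
    print(result["history"])
```

Because `run` recurses into lists, you can break any step down further without touching the code that calls it. That is the "algorithms all the way down" idea in miniature.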

And it's not just the product. Hiring is an algorithm. Onboarding is an algorithm. Paying taxes, doing marketing, handling support—all algorithms. Every department in the company is running some version of: receive input, process it, produce output, hand it to the next step. When you see it that way, a company is just a graph of interconnected algorithms being executed mostly by humans.
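The same idea scales up to the whole company. Here is a minimal sketch using Python's standard-library `graphlib`. It assumes departments can be modeled as nodes that consume each other's outputs, and the departments and edges are hypothetical, not a real org chart. Once the work is written down as a dependency graph, a valid execution order falls out mechanically.

```python
from graphlib import TopologicalSorter

# Hypothetical company graph: each node lists the nodes whose output it consumes.
COMPANY = {
    "hiring": {"budgeting"},
    "onboarding": {"hiring"},
    "marketing": {"budgeting"},
    "sales": {"marketing"},
    "support": {"sales", "onboarding"},
    "taxes": {"sales", "budgeting"},
}


def execution_order(graph: dict[str, set[str]]) -> list[str]:
    """One valid order in which every node runs after all of its inputs.

    TopologicalSorter raises graphlib.CycleError if the graph has a cycle.
    """
    return list(TopologicalSorter(graph).static_order())


if __name__ == "__main__":
    print(execution_order(COMPANY))
```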

So what part of this can humans do that AI can't?

Most people would say creativity. But creativity is the least required capability in most work—McKinsey found that only 4% of US work activities require creativity at median human level. The vast majority of work is following processes. And AI is getting better at that every month.

Too many people assume humans are extraordinary at doing work. But look at the evidence above. Our thoughts come from somewhere we can't see. Our memories rewrite themselves every time we access them. We spend nearly half our waking hours thinking about something other than what we're doing. Large shares of us can't pass basic literacy, financial, or civics tests. We don't follow processes. We hate our jobs. And we're getting paid $50 trillion a year for all of it.

There's not much evidence that humans are great at this. And a whole lot of evidence that AI either already can—or will very soon—do a better job at most of these tasks.

So what does that future actually look like?

The AI-first enterprise

The old world

In the old world, people ARE the company. They do the work. Documents exist but people don't always follow them. The person who maintained the docs left—now nobody follows the policy. No CEO has a map of their entire business. Software gets purchased through steak dinners and sales pitches. A human buys a software package—maybe they use it, maybe they don't.

The new world

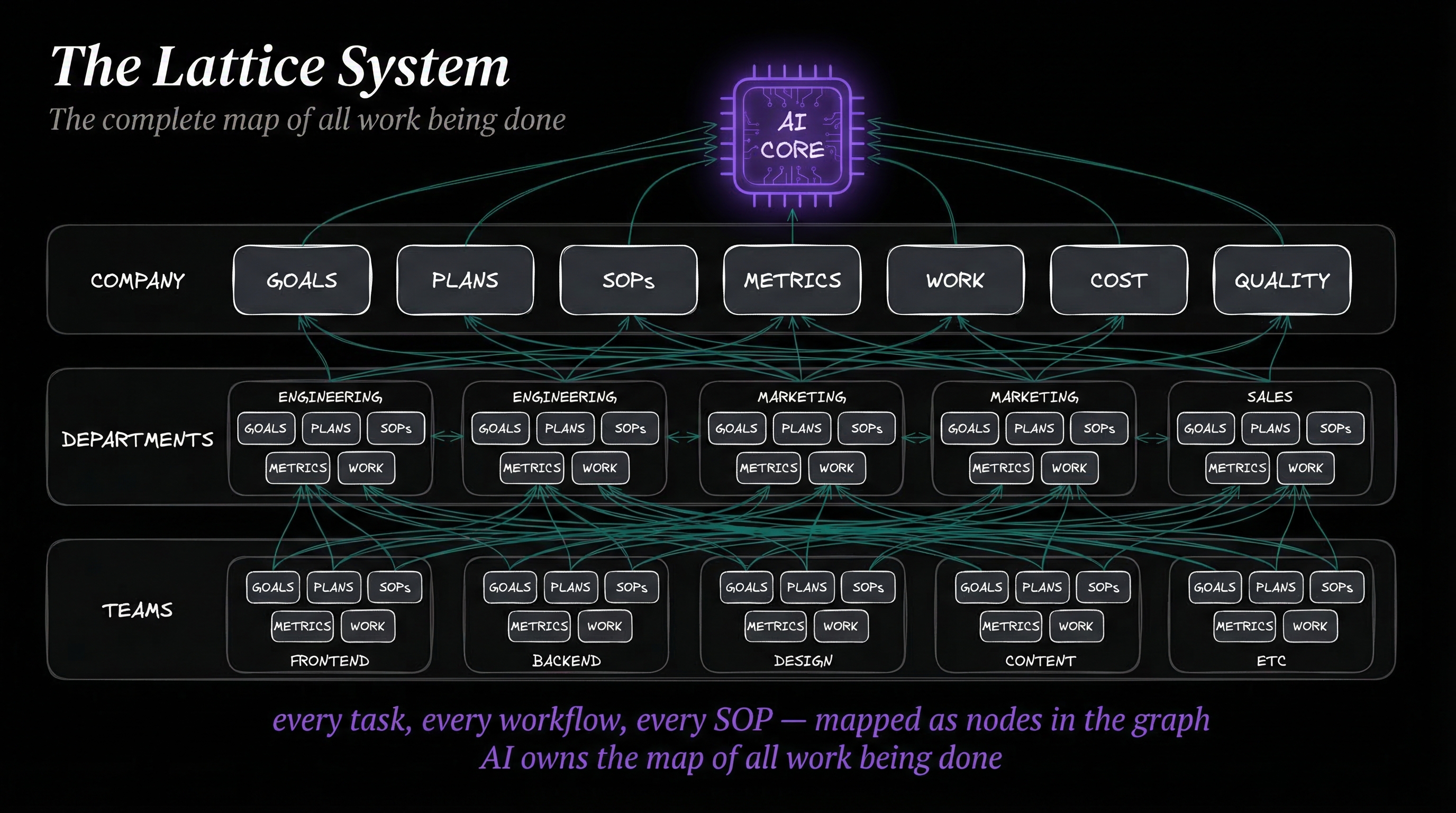

In the new world, each level of the organization—company, department, team, individual—runs its own AI harness. Each harness publishes APIs for that entity's goals, plans, SOPs, metrics, work, and budget. Each entity is authoritative over its own data. Nobody else controls it.

But every entity can query every other entity's APIs. Engineering can check Security's SOPs. Marketing can pull Finance's budget numbers. And all of it reports up to a central Lattice Daemon—a Unified Entity Context that aggregates everything from every level.

The daemon doesn't control anything. It collects. The CEO and CFO each have their own AI instances that look down at the daemon and get complete company visibility in one query. Total SOPs, total workflows, department goals, team metrics, budget allocation—all from one API.

Authority is distributed. Visibility is centralized.
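Here is a minimal in-process sketch of that shape. It rests on heavy assumptions: `Entity`, `LatticeDaemon`, and the field names are hypothetical, and a real system would expose this data over network APIs rather than method calls. What it tries to capture is the split described above. Each entity is the only writer of its own data, anyone can read it, and the daemon only reads and aggregates.

```python
from dataclasses import dataclass, field
from types import MappingProxyType
from typing import Any, Mapping


@dataclass
class Entity:
    """A company, department, team, or individual (hypothetical model)."""
    name: str
    level: str
    _data: dict[str, Any] = field(default_factory=dict, repr=False)

    def publish(self, key: str, value: Any) -> None:
        # Only the entity writes its own data: it is authoritative over it.
        self._data[key] = value

    def api(self) -> Mapping[str, Any]:
        # Everyone else gets a read-only view.
        # (Top-level keys are protected; nested values are not deep-copied.)
        return MappingProxyType(self._data)


class LatticeDaemon:
    """Aggregates what every entity publishes. It reads; it never writes."""

    def __init__(self) -> None:
        self._entities: dict[str, Entity] = {}

    def register(self, entity: Entity) -> None:
        self._entities[entity.name] = entity

    def query(self, key: str) -> dict[str, Any]:
        # One call returns `key` (e.g. "sops") from every entity that publishes it.
        return {
            name: entity.api()[key]
            for name, entity in self._entities.items()
            if key in entity.api()
        }


if __name__ == "__main__":
    security = Entity("security", "department")
    security.publish("sops", ["review new vendors"])
    engineering = Entity("engineering", "department")
    engineering.publish("sops", ["require code review"])
    engineering.publish("budget", 250_000)

    daemon = LatticeDaemon()
    for entity in (security, engineering):
        daemon.register(entity)

    print(security.api()["sops"])  # Engineering reading Security's SOPs
    print(daemon.query("sops"))    # company-wide view in one query
```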

Humans are still there, but their job changes. They're responsible for improving their entity's AI harness and updating its published APIs. Need to change an SOP? You have a conversation with your harness ("Hey, we need to change this SOP"), and it updates everything: documentation, SOPs, cross-references, downstream processes. Other entities that reference your SOP get notified automatically.
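The "notified automatically" part is a classic publish/subscribe pattern. This is a minimal in-memory sketch. `SopRegistry`, `subscribe`, and `update` are hypothetical names, and the conversational harness that would actually draft the new SOP text is out of scope here.

```python
from collections import defaultdict
from typing import Callable

# A subscriber is told the SOP id, its new text, and its new version number.
Listener = Callable[[str, str, int], None]


class SopRegistry:
    """Hypothetical in-memory SOP store with change notification (publish/subscribe)."""

    def __init__(self) -> None:
        self.text: dict[str, str] = {}
        self.version: dict[str, int] = defaultdict(int)
        self._listeners: dict[str, list[Listener]] = defaultdict(list)

    def subscribe(self, sop_id: str, listener: Listener) -> None:
        # An entity that references this SOP asks to be told when it changes.
        self._listeners[sop_id].append(listener)

    def update(self, sop_id: str, new_text: str) -> int:
        # The owning entity changes its SOP; every subscriber is notified.
        self.text[sop_id] = new_text
        self.version[sop_id] += 1
        for listener in self._listeners[sop_id]:
            listener(sop_id, new_text, self.version[sop_id])
        return self.version[sop_id]


if __name__ == "__main__":
    inbox: list[str] = []
    registry = SopRegistry()
    registry.subscribe(
        "security/vendor-review",
        lambda sop_id, text, v: inbox.append(f"engineering: {sop_id} is now v{v}"),
    )
    registry.update("security/vendor-review", "Every new vendor gets a security review.")
    print(inbox)
```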

Goals get canonicalized

What are we actually trying to do as a company? With the lattice, goals flow from mission down through strategies, projects, budget, SOPs, and work. Everything is captured, turned into text, turned into actual things you can look at and measure. No more fuzzy, scattered, out-of-date documentation.
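One way to picture a canonicalized goal structure is a tree in which every piece of work links back up to the mission it serves. This is a minimal sketch that assumes a simple parent-linked node. `Node` and the example items are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Node:
    """One item in the goal hierarchy (hypothetical model)."""
    kind: str   # "mission", "strategy", "project", "budget", "sop", "work", ...
    text: str
    # The parent link is excluded from repr/compare to avoid infinite recursion.
    parent: Optional[Node] = field(default=None, repr=False, compare=False)
    children: list[Node] = field(default_factory=list)

    def add(self, kind: str, text: str) -> Node:
        child = Node(kind, text, parent=self)
        self.children.append(child)
        return child

    def lineage(self) -> list[str]:
        """Walk from this item up to the mission: why does this work exist?"""
        path, node = [], self
        while node is not None:
            path.append(f"{node.kind}: {node.text}")
            node = node.parent
        return path[::-1]


if __name__ == "__main__":
    mission = Node("mission", "Make photo restoration effortless")
    project = mission.add("strategy", "Automate cleanup").add("project", "Background removal v2")
    task = project.add("sop", "Benchmark vendors quarterly").add("work", "Run Q3 benchmark")
    print(task.lineage())
```

Calling `lineage()` on any work item answers "why are we doing this?" in one call.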

How software buying changes

This changes how software gets bought.

Old way: Human-to-human marketing leads to a software package purchased by a human who may or may not use it.

New way: The enterprise has a graph of all operations. Click on a node—say, background image removal—and you see metrics: speed, cost, failure rate, success rate, ratings. Now prove your software does this function better. If it's not on the function map, the contract gets cancelled. It's gone.

No more steak dinners. No more sales pitches. Prove your value against the function map or get replaced.
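Mechanically, "prove your value against the function map" could look like the sketch below. Each node carries measured metrics, and a challenger replaces the incumbent only if it is at least as good on every metric and strictly better on at least one (Pareto dominance). That rule, the `Metrics` fields, and the vendor names are assumptions chosen for illustration. A real enterprise would weight speed, cost, failure rate, and ratings to suit its own priorities.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metrics:
    """Measured performance of one vendor on one function node (hypothetical)."""
    vendor: str
    seconds_per_job: float
    cost_per_job: float
    success_rate: float  # 0.0 to 1.0; failure rate is 1 - success_rate


def dominates(a: Metrics, b: Metrics) -> bool:
    """True if `a` is no worse than `b` on every metric and better on at least one."""
    no_worse = (a.success_rate >= b.success_rate
                and a.cost_per_job <= b.cost_per_job
                and a.seconds_per_job <= b.seconds_per_job)
    better = (a.success_rate > b.success_rate
              or a.cost_per_job < b.cost_per_job
              or a.seconds_per_job < b.seconds_per_job)
    return no_worse and better


def review(function_map: dict[str, Metrics], node: str, challengers: list[Metrics]) -> Metrics:
    """Replace the incumbent on `node` with any challenger that dominates it."""
    for challenger in challengers:
        if dominates(challenger, function_map[node]):
            function_map[node] = challenger  # the contract moves to the better performer
    return function_map[node]


if __name__ == "__main__":
    fmap = {"background image removal": Metrics("incumbent-co", 2.0, 0.010, 0.97)}
    winner = review(fmap, "background image removal", [
        Metrics("vendor-a", 0.5, 0.020, 0.99),  # faster, but costs more: no automatic switch
        Metrics("vendor-b", 1.5, 0.008, 0.98),  # better on every metric: replaces incumbent
    ])
    print(winner.vendor)
```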

The reverse automation frame

One way I like to think about this is to flip the question. Instead of asking "what can we automate?", imagine a system that already works perfectly—and then ask where you'd insert a human to make it better.

Picture a chip fabrication line. Lithography machines patterning transistors at the 5-nanometer node, with features smaller than a virus, billions of them per chip. Running around the clock at a precision no human could match. Now imagine someone walks in and says "let's have humans solder these by hand." You'd laugh them out of the building. A human hand would ruin the entire wafer just by touching it.

Or think about an Excel spreadsheet that pulls live data from six APIs, runs a regression, formats the output, and emails it to your team every morning at 6am. Perfectly, every time. Would you replace that with a person sitting at a desk, manually copying numbers from one tab to another? Obviously not.

These examples feel obvious because the automation already happened. But that's exactly the point. Knowledge work is different from chip fabrication—it involves judgment, ambiguity, and context that pure automation can't always handle. But most knowledge work isn't judgment calls. It's data entry, report formatting, email routing, meeting scheduling, status updates. Start from a system that handles those perfectly and ask where you'd add a human to improve it. For most of those tasks, the honest answer is: you wouldn't.

What humans actually do better

At this point you're probably thinking: okay, this guy just spent 3,000 words trashing humans and cheerleading for AI. What's the point?

The point is to be honest about what AI does better than us—either right now or very soon. Because if we're not honest about that, we can't have a real conversation about what humans actually bring to the table that AI doesn't. And it turns out that what we bring is the most important part.

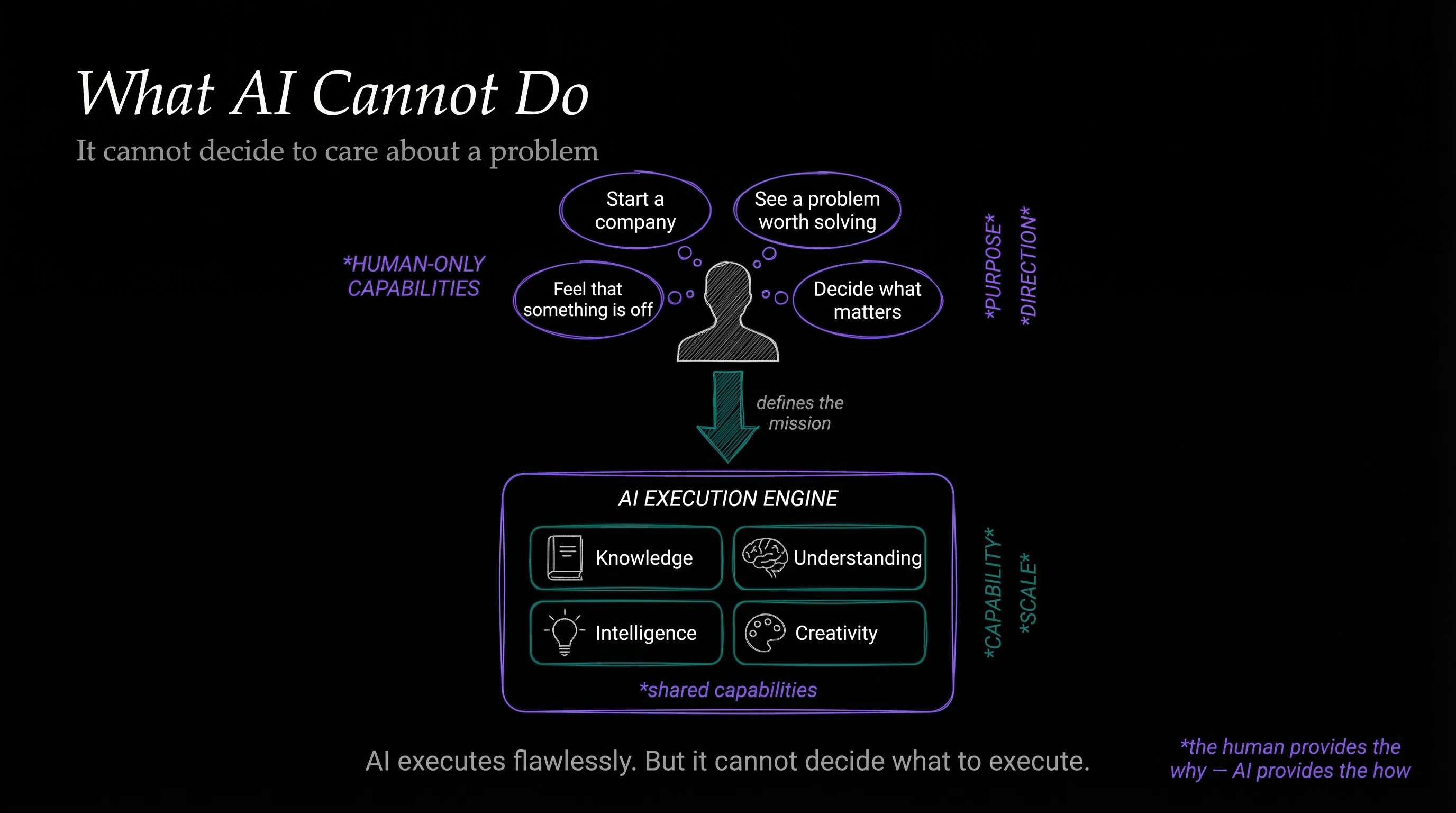

There's a capability stack that both humans and AI share. From bottom to top:

- Knowledge—The ability to collect, organize, and access facts about the world

- Understanding—The ability to turn knowledge into concepts, relate them to each other, make connections

- Intelligence—The ability to use your understanding to navigate the world and achieve goals

- Creativity—The ability to create something new in the world

AI is rapidly matching or exceeding humans on all four layers of this stack. Models already beat human expert baselines on MMLU (broad knowledge), SWE-bench solve rates went from 4% to 72% in a single year, and AI agents now outperform human experts on short-horizon research tasks.

Most people think the differentiator is creativity. It's not. It's two layers that sit beneath the entire stack, and AI doesn't have either of them.

The human-only layers

- Desires—built-in drives from evolution. The reward and punishment system—dopamine, serotonin—that forces us to want things.

- Subjective Experience—consciousness. The ability to feel satisfaction, frustration, curiosity, boredom. The thing that makes achieving a goal feel like something.

AI has neither of these. Not partially. Not in a limited way. Not at all.

- AI does not have goals. We give it goals.

- AI does not have desires. We assign it desires.

- AI is not trying to do anything. We tell it what to try.

Any motivation AI appears to have is emulated. The knowledge is real. The understanding is real. The intelligence is real. The creativity is real. But the reason to do any of it? That's entirely human. Without someone deciding what matters, AI sits there and does nothing. It has no reason to move.

What happens when an AI wakes up in the morning and hasn't been given a task? Nothing. Literally nothing. It will sit idle forever. It has no reason to care.

That's the difference. The reason to start a company, the reason to solve a problem, the reason to build something that didn't exist before—all of that comes from the human layers. The top four layers of the stack are the engine. The bottom two are the driver. And AI doesn't have a driver.

Look at the stack again. AI is going to outperform us on those top four layers. The benchmarks are already there for knowledge and understanding, and intelligence and creativity are closing fast. That trajectory is clear.

But those bottom two layers—desires and subjective experience—AI doesn't have them. And it's not going to get them anytime soon, if ever. Those layers are what make the human role more important, not less.

The human as overseer

AI can't decide to start a company. It can't look at a market and think "someone should fix this." It can't wake up at 3am with an idea it can't let go of. It can't decide that the current workflows are executing well but executing on the wrong problem. It can't feel that something is off.

Who decides what work to do? Who decides if it's the right work? Who looks at what the AI built and says "this isn't good enough—try again, differently"? Who steers?

Humans do. And that's a much better job than the one most people have now.

Instead of being a cog executing someone else's process, you become the person who decides what the process should be. You're the overseer, the optimizer, the one asking better questions. You evaluate whether the AI's output matches the goal. You notice when the goal itself needs to change. You bring the judgment that only comes from wanting something.

That's not a demotion. Going from "person who follows the process" to "person who decides what the process should be" is a massive upgrade. It's the difference between typing in a spreadsheet and deciding what the company should build next.
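The division of labor described here can be sketched as a loop in which the machine generates and a person judges. Everything below is hypothetical: `generate` stands in for any model call, and `human_review` stands in for a person comparing the output against the goal. What the sketch makes explicit is that three things sit outside the generator: the goal, the decision to accept, and the fallback when nothing works.

```python
from typing import Callable, Optional

# Hypothetical signatures: the generator sees the goal plus all feedback so far;
# the reviewer returns None to accept, or a note explaining what to change.
Generator = Callable[[str, list[str]], str]
Reviewer = Callable[[str, str], Optional[str]]


def oversee(goal: str, generate: Generator, human_review: Reviewer,
            max_rounds: int = 5) -> Optional[str]:
    """Alternate AI drafts and human review until accepted or out of rounds."""
    feedback: list[str] = []
    for _ in range(max_rounds):
        draft = generate(goal, feedback)
        note = human_review(goal, draft)
        if note is None:
            return draft       # the human judged it good enough
        feedback.append(note)  # "this isn't good enough, try again, differently"
    return None                # nothing acceptable: time for the human to rethink the goal


if __name__ == "__main__":
    def fake_generate(goal: str, feedback: list[str]) -> str:
        return f"draft {len(feedback) + 1} for {goal}"

    def fake_review(goal: str, draft: str) -> Optional[str]:
        return None if draft.startswith("draft 3") else "not there yet, try a different angle"

    print(oversee("Q3 pricing page", fake_generate, fake_review))
```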

Why this is good news for humans

You might think this is depressing. We're basically mech exoskeletons for evolution, given desires by evolution to achieve evolution's goals.

Sure. You can think that way. You could also say we're just atoms on a giant sphere and thermodynamics determines everything. Ice cream still tastes good.

I don't care about the mechanism. I care about human experience.

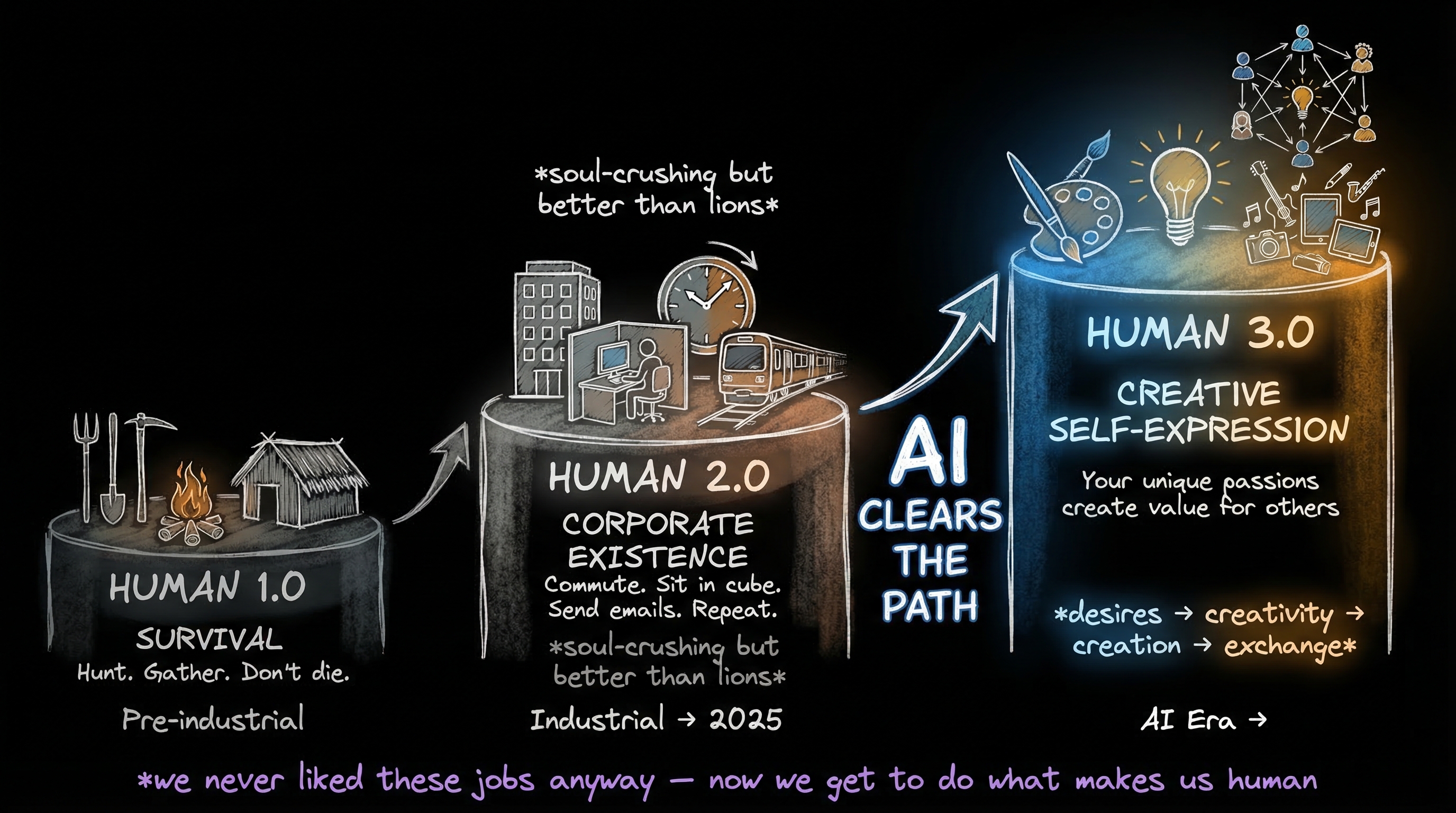

All of these corporate jobs are meaningless. Sitting in a cube all day long, commuting to the cube, adding up numbers and sending out emails. That makes us basically an Excel spreadsheet with an email send function. What kind of life is that?

The fact that AI is coming to take all of those jobs? Who cares. Those are functions for bringing an idea into reality. The factory's purpose is to produce the thing that came from the idea. The idea is the human part.

This is why I call what I do Human 3.0.

- Human 1.0—Survival. Farming, hunting, gathering. Don't get eaten by the lion. Basic existence.

- Human 2.0—Corporate existence. Hierarchies, separation of labor, grinding corporatism. Better than scraping along trying to survive, but not by as much as you'd think.

- Human 3.0—Creative self-expression. We connect our desires and our creativity to the act of creation. What makes you unique (your interests, curiosities, passions) is what you get to pursue in order to create things of value for others.

We use all this amazing technology, all this amazing AI, to serve our goals and needs and to connect all the things we humans create by being our true selves. We exchange those things with each other. That becomes the new economy.

Not the hierarchy-based corporate economy. Not the hunter-gatherer economy. A creativity-based economy. Value exchange. Creativity exchange. Humanity exchange.

Yes, it's scary. The system we grew up in is being replaced, and that's unsettling no matter how broken it was.

But think about what we're actually losing. Cubicles. TPS reports. Meetings about meetings. Seventy percent of people dreading Monday. Fifteen percent actively working against their own company. Nearly half the workforce looking for the exit. That's what $50 trillion a year has been buying.

The factory is being rebuilt. The enterprise is becoming an AI-powered engine. That part is inevitable—the economics are too obvious to resist.

But here's what's not obvious, and what most people miss: the thing that survives isn't the process. It's the person who decided the process should exist. The one who felt a problem was worth solving. The one who woke up wanting to build something.

The knowledge, the understanding, the intelligence, the creativity—AI will handle all of that. What it will never handle is the wanting. The caring. The deciding that something matters enough to pursue.

That's yours. That has always been yours. And for the first time in history, you'll have a $50 trillion machine ready to execute on whatever you point it at.

The question isn't whether AI will replace how work gets done. It will. The question is what you'll build once it does.

Notes and sources

Libet, B. et al. (1983). "Time of conscious intention to act in relation to onset of cerebral activity." Brain, 106(3), 623-642. Brain activity precedes conscious awareness of decisions by ~350ms.

Harris, S. (2012). Free Will. Free Press. "You no more decide the next thought you think than you decide the next thought I write."

Nader, K., Schafe, G.E., LeDoux, J.E. (2000). "Fear memories require protein synthesis in the amygdala for reconsolidation after retrieval." Nature, 406, 722-726. Memory reconsolidation: recall rewrites the memory.

Levelt, W.J.M. (1989). Speaking: From Intention to Articulation. MIT Press. See also Levelt (1999), "Producing spoken language: A blueprint of the speaker." Incremental sentence production: speakers don't know how their sentence ends when they start.

Schultz, W., Dayan, P., Montague, P.R. (1997). "A neural substrate of prediction and reward." Science, 275(5306), 1593-1599. Dopamine neurons encode reward prediction errors.

Berridge, K.C. & Robinson, T.E. (1998). "What is the role of dopamine in reward: hedonic impact, reward learning, or incentive salience?" Brain Research Reviews, 28(3), 309-369. The "wanting" vs "liking" distinction in the reward system.

Killingsworth, M.A. & Gilbert, D.T. (2010). "A wandering mind is an unhappy mind." Science, 330(6006), 932. People spend 46.9% of waking hours thinking about something other than what they're doing. n=2,250.

Hasenkamp, W. et al. (2012). "Mind wandering and attention during focused meditation." NeuroImage, 59(1), 750-760. Even trained meditators cycle through attention and mind-wandering on a timescale of seconds.

Smallwood, J., McSpadden, M., & Schooler, J.W. (2007). "The lights are on but no one's home." Psychonomic Bulletin & Review, 14(3), 527-533. Nearly half of mind-wandering episodes occur without any meta-awareness.

YouGov (2025). "Most Americans didn't read many books in 2025." 40% of Americans read zero complete books; median was 2 books. n=2,203.

OECD PIAAC (2024). "U.S. National Results." 28% of US adults have literacy at Level 1 or below (up from 19% in 2017); 34% have numeracy at Level 1 or below. Data collected 2022-2023.

FINRA Foundation (2024). "National Financial Capability Study." Only 27% pass a 7-question financial literacy quiz; only 4% answer all correctly. n=25,500.

U.S. Chamber of Commerce Foundation (2023). "Alarming lack of civic literacy." Over 70% failed a basic civics quiz. n=2,000 registered voters.

Annenberg Public Policy Center (2024). "Many don't know key facts about the Constitution." Only 65% can name all three branches of government; only 7% can name all five First Amendment rights.

NSF (2024). Science & Engineering Indicators — Public Attitudes. ~26% of Americans still answer incorrectly that the Sun orbits the Earth. Stable since the 1980s.

Bone, J.K. et al. (2025). "The decline in reading for pleasure." iScience, 28(9). Daily pleasure reading dropped from 28% to 16% (2004-2023). n=236,270.

ILO (2024). Global Wage Report 2024-25. Global labour income share ~52.4% of GDP (~$55T total compensation).

Bureau of Labor Statistics. Quarterly Census of Employment and Wages. US total wages ~$11.7T (2024).

Gallup (2025). State of the Global Workplace. 21% engaged, 62% not engaged, 15% actively disengaged. Disengagement cost $438B in 2024. n=227,347.

Gallup (2024). "U.S. Employee Engagement Sinks to 10-Year Low." 31% engaged in US — lowest since 2014. 52% quiet quitting.

Kickresume (2024). "Sunday Scaries Survey." 70% of workers experience Sunday dread; 36% every single week. n=2,144.

McKinsey. "Help Your Employees Find Purpose." Only 15% of frontline workers say their work fulfills their sense of purpose.

Microsoft/LinkedIn (2024). Work Trend Index. 46% of workers considering quitting. n=31,000 across 31 countries.

McKinsey & Company. "Why do most transformations fail?" ~70% of organizational transformations fail.

McKinsey Global Institute (2017). A Future That Works. Only 4% of US work activities require creativity at median human level.

Ghosh, S. (Harvard Business School). "3 out of 4 venture-backed startups fail." 75% fail to return investors' capital.

Eisenmann, T. (2021). "Why start-ups fail." Harvard Business Review. More than two-thirds never deliver a positive return.

National Skills Coalition (2023). "Nearly 1 in 3 workers lack foundational digital skills." 33% of workers lack basic digital skills; 92% of jobs require them.

WEF (2025). Future of Jobs Report 2025. 63% of employers cite skills gap as key barrier to transformation. 39% of required skills expected to change by 2030.

AI benchmarks and performance

Stanford HAI (2025). AI Index Report 2025. Comprehensive annual survey of AI technical performance vs. human baselines across domains.

Stanford HAI (2025). Technical Performance Chapter. AI exceeds human expert baselines on MMLU; SWE-bench solve rates rose from 4.4% to 71.7% in one year; AI agents outperform human experts on short-horizon research tasks.

Hendrycks, D. et al. (2021). "Measuring Massive Multitask Language Understanding." MMLU benchmark — human expert baseline ~89.8%, surpassed by frontier models.

Jimenez, C.E. et al. (2024). "SWE-bench: Can Language Models Resolve Real-World GitHub Issues?" Real-world software engineering benchmark with a public leaderboard.

Rein, D. et al. (2024). "GPQA: A Graduate-Level Google-Proof Q&A Benchmark." Diamond-difficulty questions where AI matches PhD-domain-expert baselines.

Shannon, C.E. & Weaver, W. (1949). The Mathematical Theory of Communication. University of Illinois Press. The foundational model of signal degradation.

Simon, H.A. (1991). "Bounded rationality and organizational learning." Organization Science, 2(1), 125-134. Executives operate with incomplete information.

CEIBS. "Why are CEOs always the last to know the truth?" Hierarchical filtering creates systematic blind spots.