Exactly Why and How AI Will Replace Knowledge Work

There's a common narrative right now that you don't need to worry about AI if you're a knowledge worker. Humans are special. Humans are unique in the way they approach work. AI is just a text generator, just an LLM with no knowledge and no real understanding of what it's trying to do. It can't possibly replace human workers.

This is a very popular narrative, and it's easy to see why. It makes us feel better. It makes us feel safe, and people are afraid right now for good reason. So it's a very common thread: relax, you don't have to worry. AI is actually kind of garbage and humans are basically awesome.

I think this is wrong. And more importantly, I think it's dangerous.

I think it's wrong for a bunch of reasons I'm about to talk about. I think it's dangerous because if you adopt this mindset—if you accept this argument—you will not properly prepare for what is coming.

Keep in mind, this argument is being made by some very smart people. Hundreds of them are saying it very loudly, and more importantly, they have a following. So I want to come at this argument directly. I want to start by talking about what work actually looks like inside of companies, and break down the common problems I've seen all over the place over the course of my career.

A quick note on my background

Normally I don't talk about my background because I just don't care about my background. I mostly just want to hear the content, the ideas. But in this case I'm making a lot of claims about how businesses function, how they work, and I think it raises a natural question which should be asked: why should I believe you?

The answer is I've been working inside of companies for over 25 years. My background is strictly in information security, but I've held senior roles at Apple, where I built a program and a product that are still running. I've worked at Robinhood and HP, and I've been embedded in very large energy companies. I have tons of experience in the Fortune 100, and even in the Fortune 10.

But even more important for the context here: through consulting, I've worked for hundreds of companies among the top 10,000 in the world. Hundreds, over 25 years. Inside all of these companies, I have seen the problems I'm about to describe over and over and over. And when I describe them, I'm sure you'll recognize them too. If you've worked at multiple companies over the course of your career, all of these are going to be extremely familiar. They're not even controversial.

The current state of companies

The natural assumption about companies is that everything is fine. Why are we even talking about bringing AI into them? We have a good economy. We've built plenty of companies and plenty of market share, and the Dow did great for decades. So what actually is the problem?

My answer is that most companies are actually a giant soup sandwich, or a football bat. These are military terms, but essentially they mean absolute chaos. In most companies, it's difficult to find out what they're actually trying to do or build. Especially as a worker, it's very hard to get a clear message about what exactly the mission is.

Companies basically fail for one of two reasons: a bad idea, which is game over, or a bad implementation of a good idea. Most failures are the second kind.

The vision isn't clear

The first problem I see in a lot of these companies, and that I'm sure you've seen as well, is the vision isn't always very clear. In fact, sometimes it's nonexistent. Sometimes it's not conveyed all the way through the company. People aren't really sure exactly what they're working on and why. That's a huge problem.

There aren't processes

Another really big one is there aren't really SOPs—standard operating procedures. That's what we call them in the military. Most companies just call them processes. How do you actually do things?

The problem isn't that there isn't some document somewhere that says how to do things. The problem is there might be multiple of them and they change all the time, or they decay over time, or people are referring to the wrong one. Things just keep getting done incorrectly throughout the company. And the more layers of management you have, the larger the company, the longer it's been around, the more employees you have—the worse this problem gets.

Now, I want to caveat something. There are a few companies, very few, that do this really well. Maybe you work at Google and it's pretty great, or at some giant bank that's had decades to figure this out, or at an extremely risk-averse energy company with great processes. I'm talking about the other 99.9%.

Work is done inconsistently

In most companies, it's not clear exactly how something is supposed to be done. As a result, the output is massively inconsistent. Different people doing the same job, even using the same document, do it differently.

It's common knowledge within the company. "Oh, if Sarah does it—yeah, I love when Sarah produces this report or this security assessment. She does it really well." Well, guess what? Jim over here, he looked at the same process, got the same training, had the same onboarding. His output is absolute garbage.

And that's if there IS a process. Often there isn't. There's just informal onboarding. You come in and it's like: here's where you put your keys, we get lunch over here, make sure you don't send it to Mr. Johnson like this, he really hates that. All these little things are loosely stored in your mind. And when that person, Chris the admin, leaves the company? The whole process has to start all over again.

Very common stuff. You've seen this a million times.

Wrong people for the work

There are people all throughout companies who just never, ever got good at doing the job. They never got good at following the process. They don't produce good output. It's really hard to hire people in companies. It's also really hard to fire people. Maybe it's nepotism, maybe they're the nice person, maybe they're good at one little thing and you'd hate to give that up. So they stay on and keep breaking things.

This is endemic throughout companies. There are dozens or hundreds—maybe even thousands—of people who don't follow processes and aren't good at their job at all.

Now, keep in mind, there are also A-players everywhere who do a fantastic job. They don't even need a process. They're awesome. No one's disputing that there are awesome humans who can do work. That's not what this argument is about. This argument is about overall work.

The plan is clear but the work isn't being done

You've got people who can't follow the process. They produce bad work. The job isn't that rewarding. They're not exactly sure what they're working on. Their bosses are changing all the time. The work they're supposed to be doing is also changing all the time, because the company goals change all the time. And guess what that means? Meetings. Lots of meetings.

You're a developer trying to code, trying to do a bunch of technical stuff. Nope, you gotta get on this call. You already made a document. You already explained it 20 times. You've already been in 40 meetings about this very same topic. Nobody is listening. They keep doing the work wrong. You keep having to correct them because they didn't follow the document you spent three weeks making.

This is not even special. If you've worked in any companies across your career, this is endemic.

Departments at war

Now add on top of this: people are empire building. It's Game of Thrones being played out in real time across many companies. Managers are empire building, picking their favorite people.

And what about when you have a good idea? How many times have you had a good idea that you submitted up through management because it would actually solve half the stuff that ruins your soul? You spent three weeks putting together a proposal, wrote all this documentation, and they're like: "Yeah, I don't think so. Oh, by the way, you need to join this meeting—we're changing our goals again. Oh, by the way, you're gonna report to Sarah now instead of Raj. And also, redo all that stuff you just did."

Why do you think people dread Monday so much? Why do you think Sunday night is filled with this pit of despair? Because they get to drive for an hour to commute in, go to another meeting to redo their plans, hopefully try to get a bonus—meanwhile everyone around them is playing Game of Thrones and breaking everything.

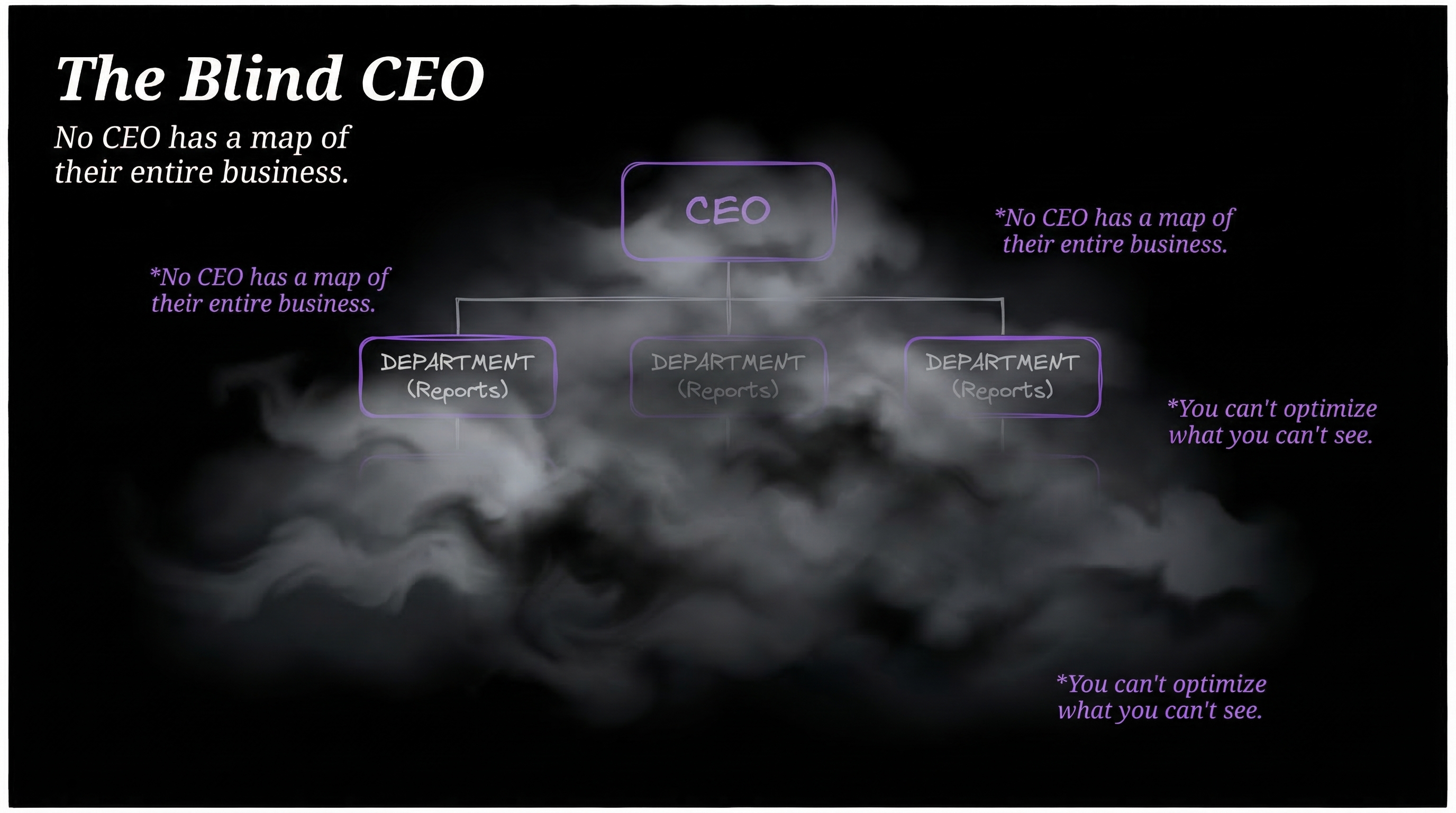

The blind CEO

I've been talking mostly about the worker. Let me tell you about the other side—looking down as a leader, because I've been here as well.

You're looking down at this company with the CFO, looking at your headcount spend. You're spending $94 million on headcount across your entire staff: engineers, admins, all these different people. What exactly are we getting?

Can I see all of my projects? Can I see all of the work being done? Do I have a list of workflows that show all the different processes in my company? What the steps are? How they're actually being performed? How much they cost? How long they take? What's the average quality of an output?

You know what happens when a leader asks this question? "Well, we could take a look. That's a good question. We've been thinking about that as well. If you would like, I could put together a project where we could figure out what exactly our work is and how much it costs and how good of a job we're doing."

That is a major endeavor. It's separate from their work. This happens constantly inside of companies. I'm often brought in to help them do this. Do you think this takes hours? Nope. Days? Nope. Weeks? Nope. Months. This is multi-month or multi-year.

And if it's a large company? They don't even ask their people. They bring in McKinsey or KPMG. Spend $500,000 to have a team of smiling 22-year-olds come in and do the audit.

This is what AI is competing with. The CEO and the CFO have very little idea of what is actually happening in the company. They don't know where the money's going. They don't know what the processes are. They don't know what the workflows are. They don't know how well it's going. They just have a rough idea.

There is no visibility. They can't see shit. This is the state that AI is competing with, which is roughly chaos.

The $50 trillion question

Roughly $55 trillion a year globally. Nearly $12 trillion just in the US.

ILO Global Wage Report · BLS Employment & Wages

Pop quiz: how much do you think knowledge workers are paid every year? Roughly $50 trillion. That includes the B players and the C players and the D players and the F players. Forget the A players; they're awesome, and they're gonna continue to be awesome with or without AI. We're talking about most work in most companies.

Only 21% of workers globally are engaged. 62% are doing the minimum. 15% are actively working against their own companies. Over 70% dread going to work on Monday. Only 15% of frontline workers say their work fulfills any sense of purpose. Half of all workers are watching for or actively seeking a new job. Many people are making a sport out of doing the least amount of work possible.

Everyone knew this was soul-crushing before 2022. They knew it in the 90s. They knew it in the 80s. That's why it's part of culture. Look at Office Space. We've been making shows about corporate drudgery and stupidity for decade upon decade. We recently had Severance. Nobody's having fun here.

And guess what everyone was doing throughout the culture? Trying to figure out a solution. What is a better way? Because this is obviously not the way that humans should live. That was the state before 2022 when AI arrived.

When you hear the argument that AI cannot compete with humans—inside of a company, this is what they have to compete with. This bar is not low. It is on the floor. It burned a hole through the floor. It is descending to the core of the earth.

AI can follow instructions. The ability to follow instructions over and over, doing the same thing in a very uncreative way, is better than what is done in most companies most of the time.

The human reliability myth

I'm going to continue beating up on us humans here. I got positive stuff at the end, so hang in for that. This next piece is another negative piece about humans, and it relates to how AI thinks versus how humans think.

Another part of this argument is that humans just fundamentally think differently—that we have more integrity of thought, we're more cohesive, we have understanding, we know ourselves, we can introspect, we can be creative, we have intuition. All these advantages over an AI, which is this dead black box.

I want to dispel this as well. The crux of this argument is that humans are better thinking machines. If you've ever tried meditation, what it reveals about humans is absolutely mind-blowing.

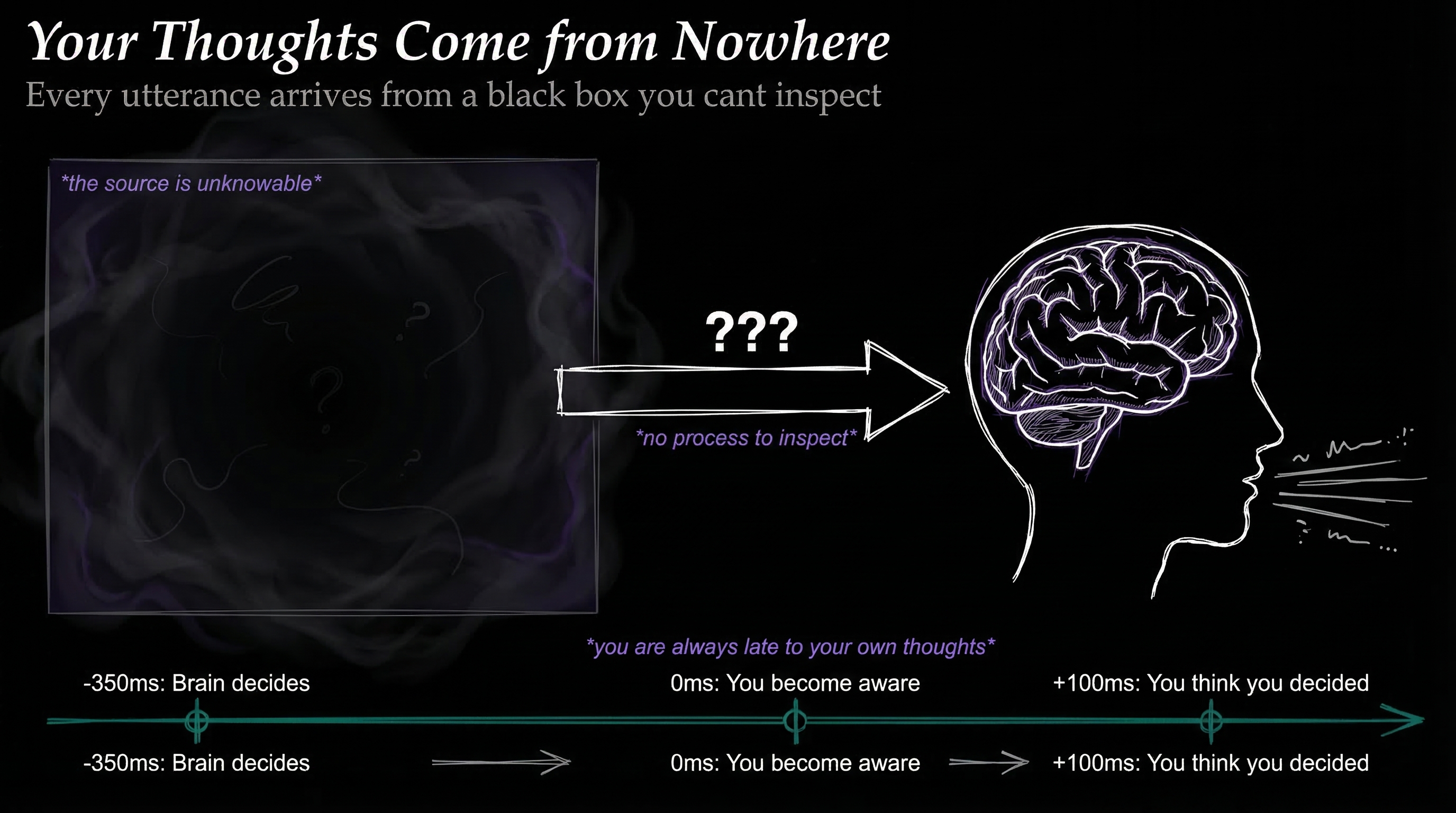

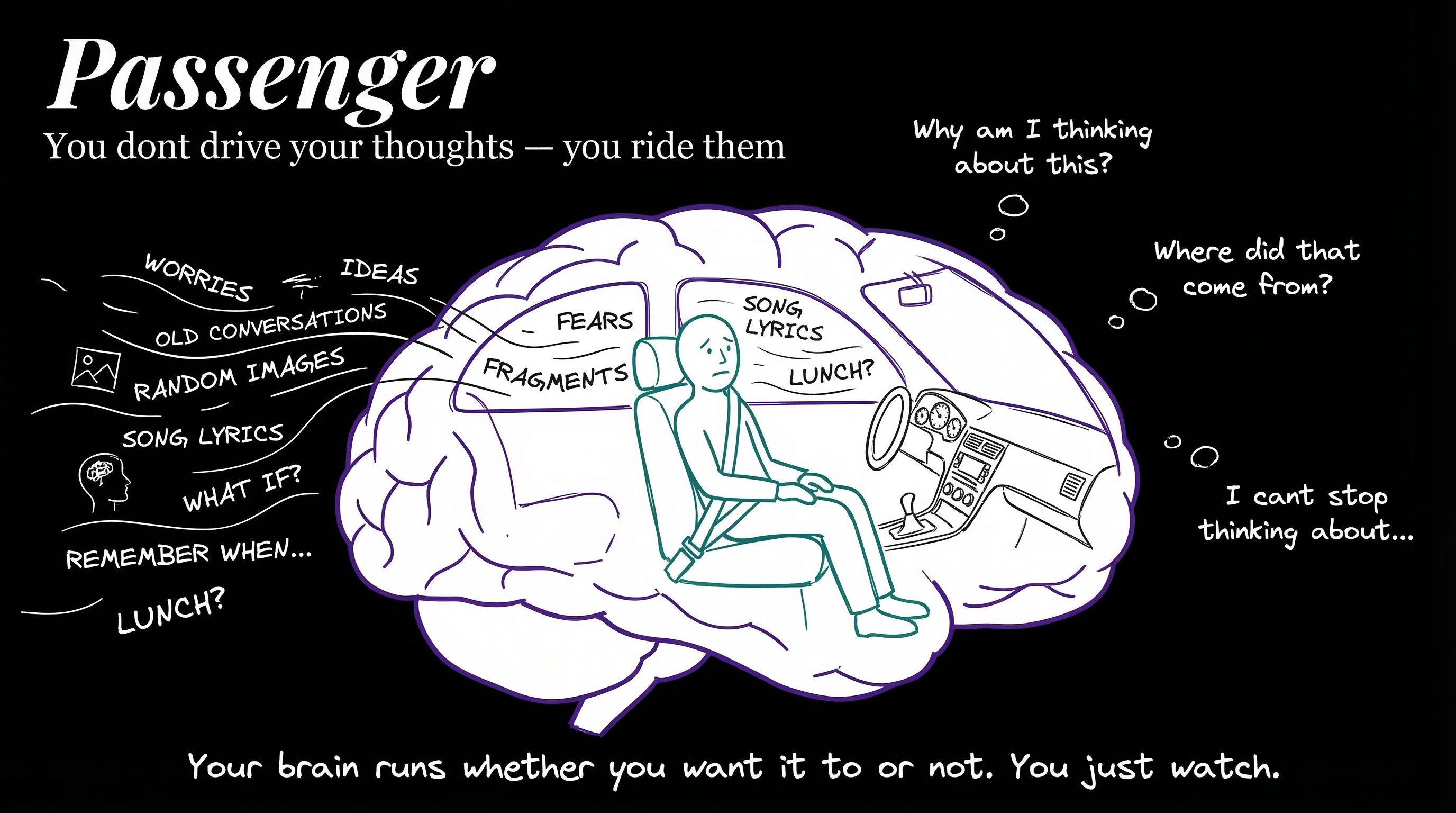

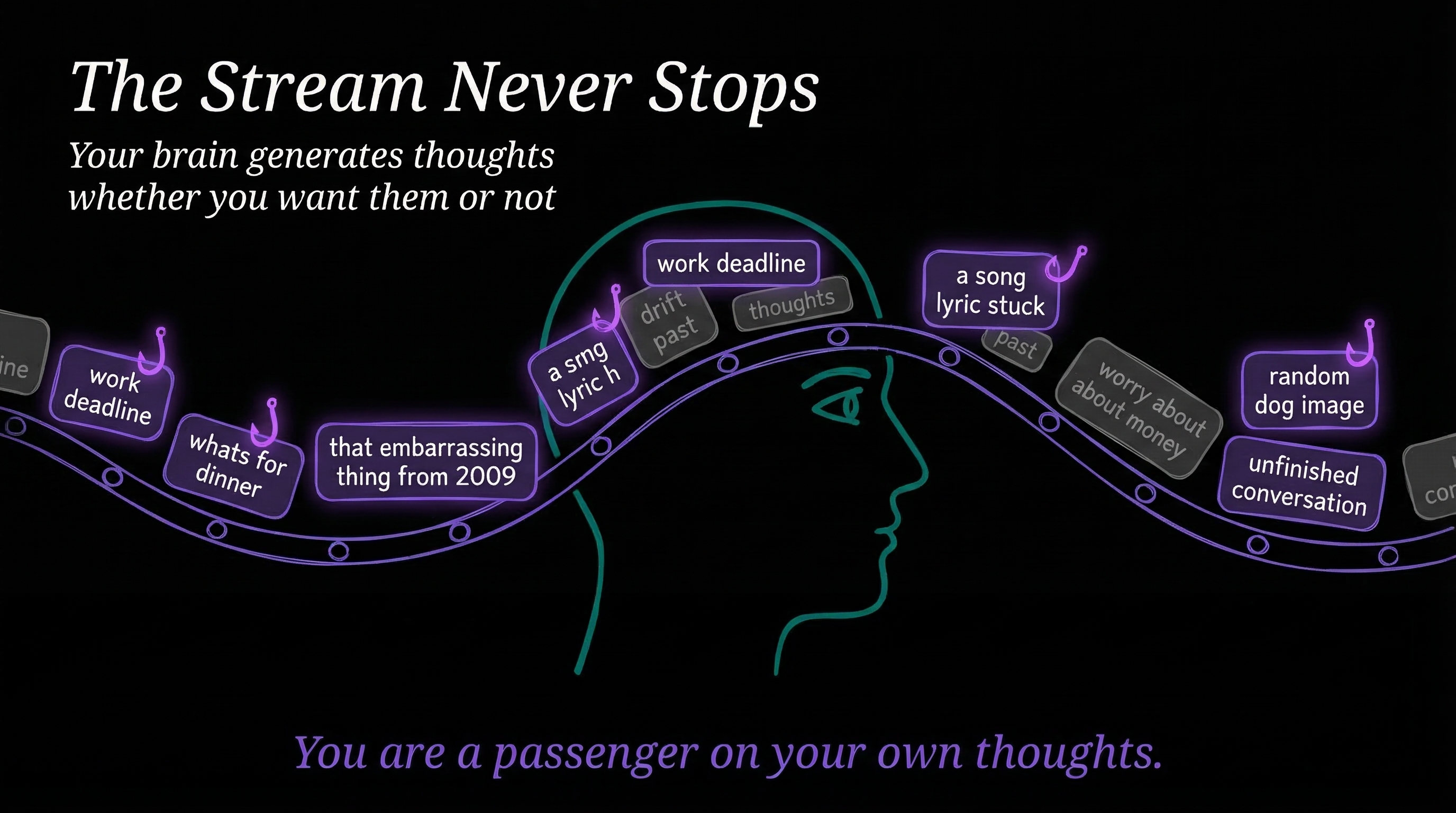

Your thoughts come from nowhere

The first thing it reveals is that humans have no idea what they're thinking. At any given moment, if you could zoom in and listen to the minds of people sitting in their cars, sitting in their cubes—it's like hamsters running on a wheel.

You zoom into Ravi and Ravi's like: "I can't believe he said that. All that work I put in on the project—I hope that guy gets fired. Like, you know what I would love? A new boss comes in, sees my stuff, absolutely loves it. Looks at Chris's stuff and is like, this is garbage. The one I really like? This one. That's mine." And then: "Oh, did I feed the dog? I think I need to feed the dog. Let me text someone. Oh crap, it's almost lunch."

That is the mind of a person. That randomness is happening constantly.

When you try to meditate, what you do is try to observe thoughts. It is the craziest thing. It's like this sliding window of chaos just scrolling through your brain. Rumination, grinding—"I should watch that thing, I gotta pick up those things, I don't know why he said that, I should get a promotion, these people don't respect me." Just constant droning.

Where did those thoughts come from? Who is making those thoughts? And who is observing them? The answer is you have no idea. Libet's experiments showed that brain activity precedes conscious awareness of a decision by ~350 milliseconds. Your brain decides before you know it. As Sam Harris puts it, "You no more decide the next thought you think than you decide the next thought I write."

You know what that reminds me of? An LLM. You poke an LLM and say, "Hey, say smart stuff about logistics workflows and pipelines and how to move heavy freight from the east coast to the west coast on an 18-wheeler." And it just goes, "Okay," and starts spewing words. And they turn out to be really good. You could ping a human to do the same thing and they start spewing words too. Does the LLM have any idea where its words are coming from? No. Do you have any idea where your words are coming from? No. It's a complete black box.

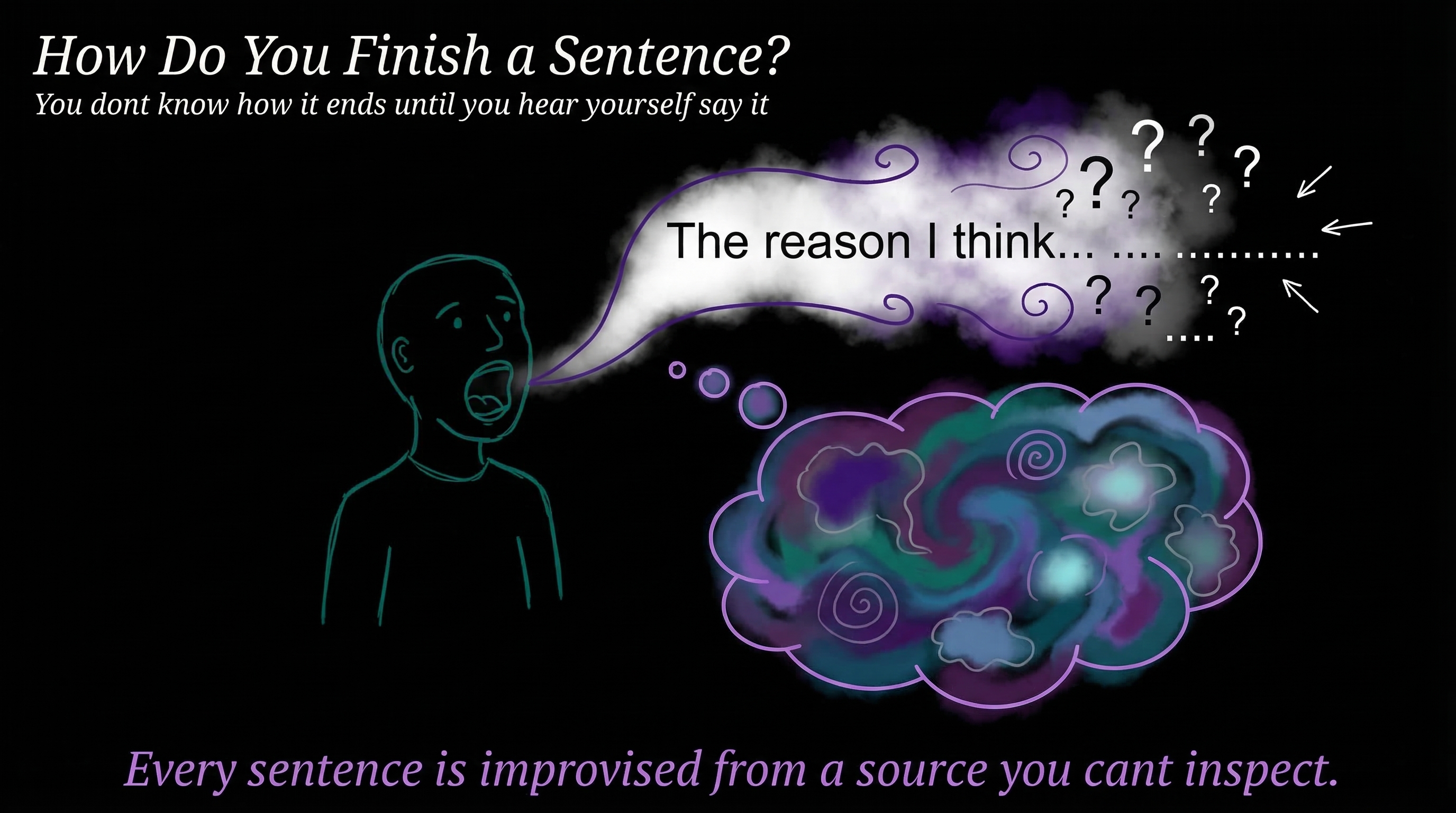

Your words come from nowhere

If you are in the middle of a sentence—like I have been multiple times already—you have no idea how you're going to finish it. You're not planning sentences. You didn't come up with the sentence. These are spewing out of you just like an LLM. You are just as surprised about anything you say as the person receiving it.

Here's another example. What are your top seven favorite restaurants in the entire world? You've got 30 seconds. What is your brain doing right now? Where is that content coming from? If I ask you multiple weeks apart, the list will be different because some you couldn't remember at the time. Your preference might have changed in between. This is not a static, cohesive, dependable process.

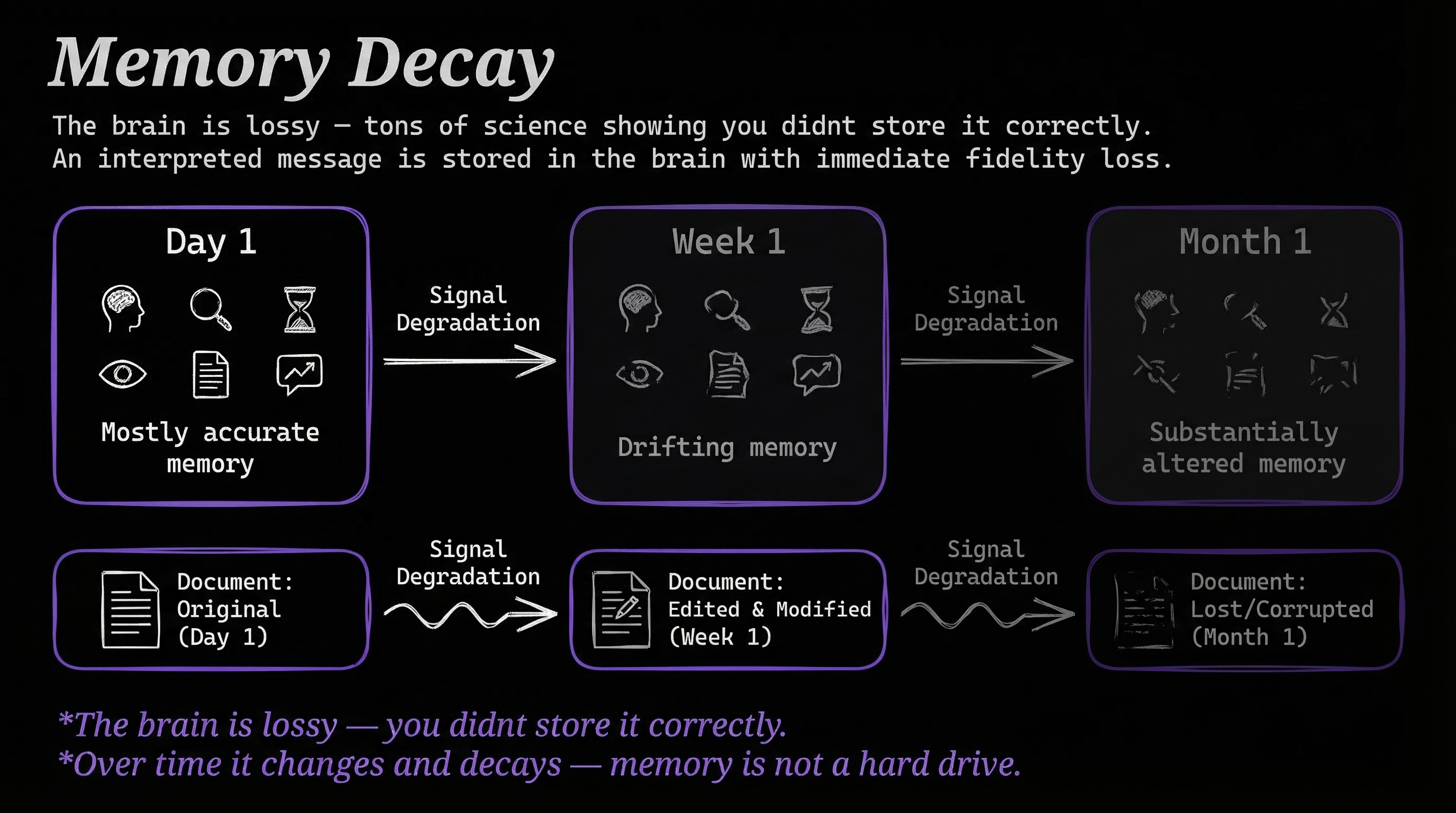

Your memory rewrites itself

One of the most reproduced findings in psychology is that memories are not what we think they are. When we store memories, we store them along with emotion, bias, and feelings. And when we recall them, we don't get back an accurate copy; this is extremely well documented. You're not a hard drive storing unaltered ones and zeros. We don't even know exactly how the brain stores things. But we do know that what we recall about events is often massively different from what actually happened. And it gets worse over time, and it gets worse the more you recall it.

Our memory system is completely flawed.

We're passengers on our own thoughts

So we don't know where our thoughts are coming from. We don't know how we're gonna finish sentences. And our memories are not static—they're extremely fluid.

Research confirms this: people spend 47% of their waking hours thinking about something other than what they're currently doing. Even experienced meditators cycle through attention and mind-wandering on a timescale of seconds. In nearly half of mind-wandering episodes, people have no idea they drifted until something snaps them back.

This whole concept that we are put together, cohesive, self-understanding—it's complete and utter crap. The whole thing is built on absolute madness. As thinking machines, the bar is extremely low.

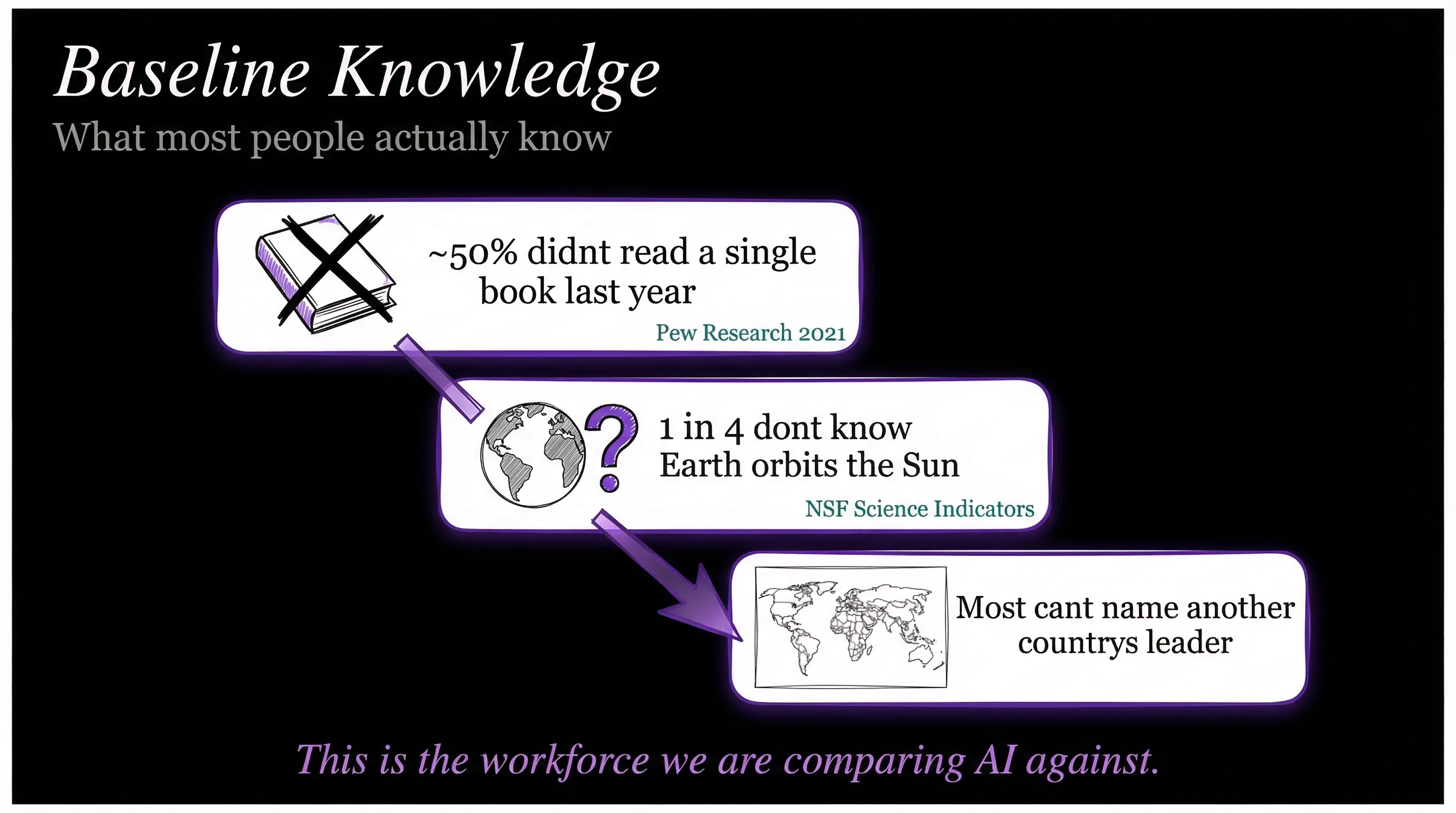

Baseline knowledge is really low

~40% of Americans didn't read a single book last year. 1 in 4 don't know the Earth orbits the Sun. Only 27% can pass a basic financial literacy quiz. Over 70% fail a basic civics test. 28% of US adults can't read at workplace proficiency levels.

This is the workforce we are comparing AI against.

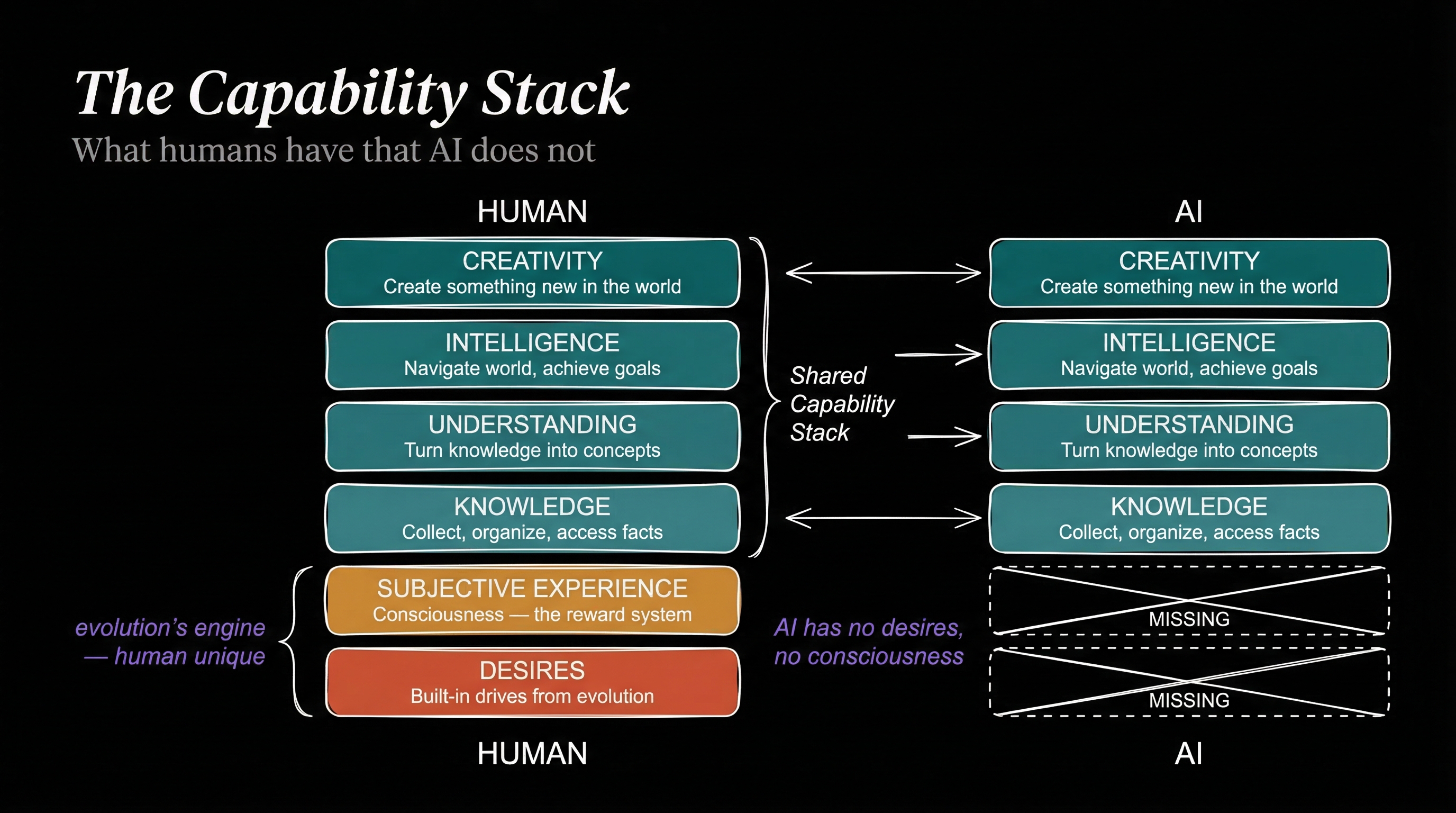

The capability stack

I came up with this capability stack. This is not settled science—this is all still up in the air, people are still trying to figure this out. I read a lot and I've got decent mental models, so just work with me on this.

Think of these layers as fundamentals of a knowledge worker—things that make us good at doing knowledge work.

Knowledge. Collecting, organizing, and accessing facts. Easy.

Understanding. Turning knowledge into concepts. This is a big one. Having a whole bunch of facts stored is not enough to be a good knowledge worker. Understanding is the ability to let it seep in and say: oh, that knowledge is related to that knowledge—that forms a concept. So now we have concepts, and those concepts relate to each other. You have to have understanding of all these different concepts and how they connect. This is the layer that separates someone who can recite facts from someone who actually gets it.

Intelligence. This is the next one above understanding. The ability to face obstacles, navigate the world, and pursue goals. If you're more intelligent, you navigate the world better and better achieve your goals.

Creativity. The ability to come up with novel, new things. Intuition, art, liberal arts thinking, philosophy, logic.

In most knowledge work, what people are actually doing is the knowledge, the understanding, and the intelligence. Creativity—some people have a lot of it, some have a little, some have none, but they're still really good knowledge workers. You could have an A-player who crushes knowledge, understanding, and intelligence and is still great at their job.

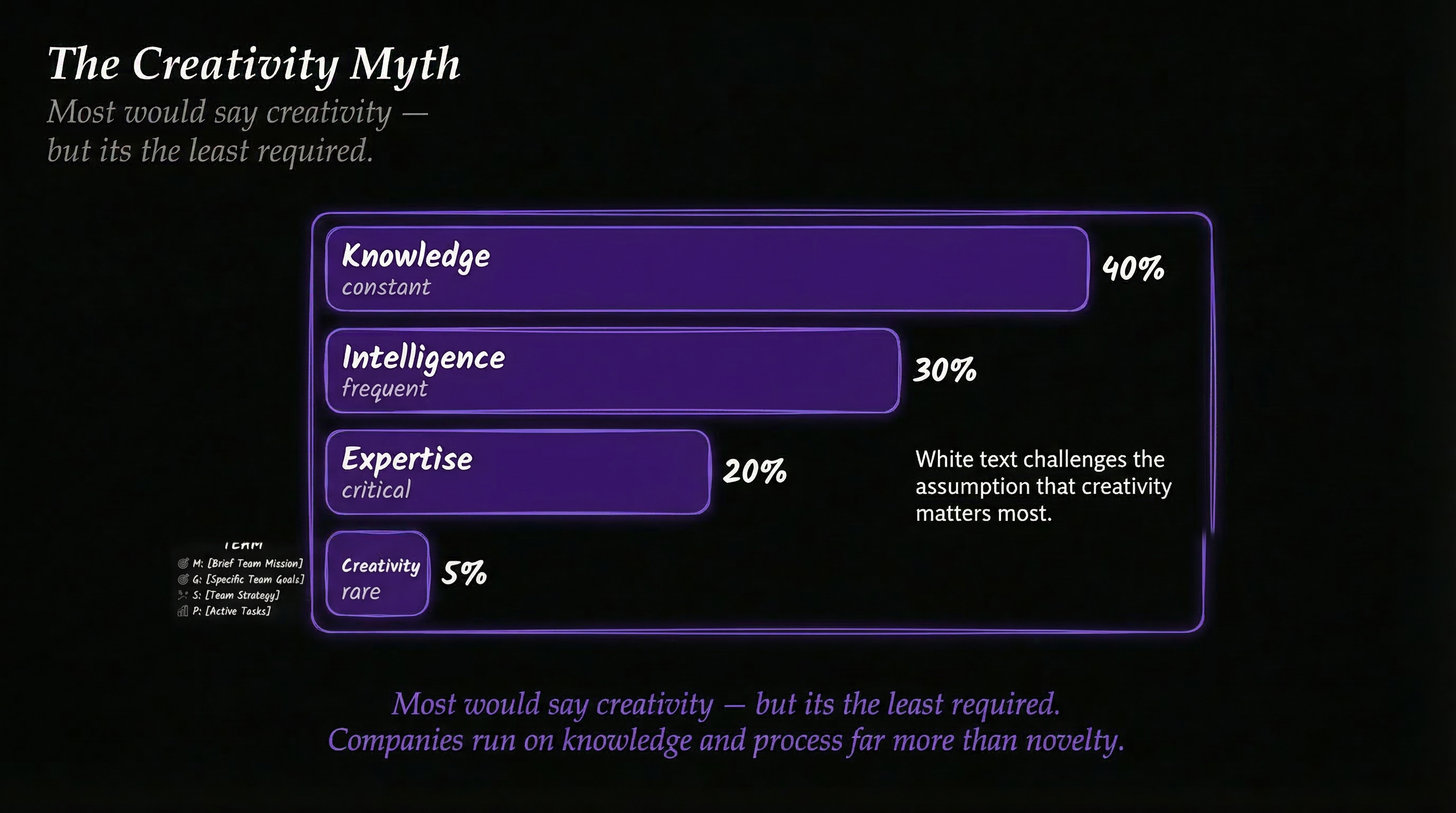

The creativity myth

Most people would say creativity is what makes humans special. But creativity is the least required capability in most knowledge work. McKinsey found that only 4% of US work activities require creativity at a median human level of performance. Companies run on knowledge and process far more than novelty.

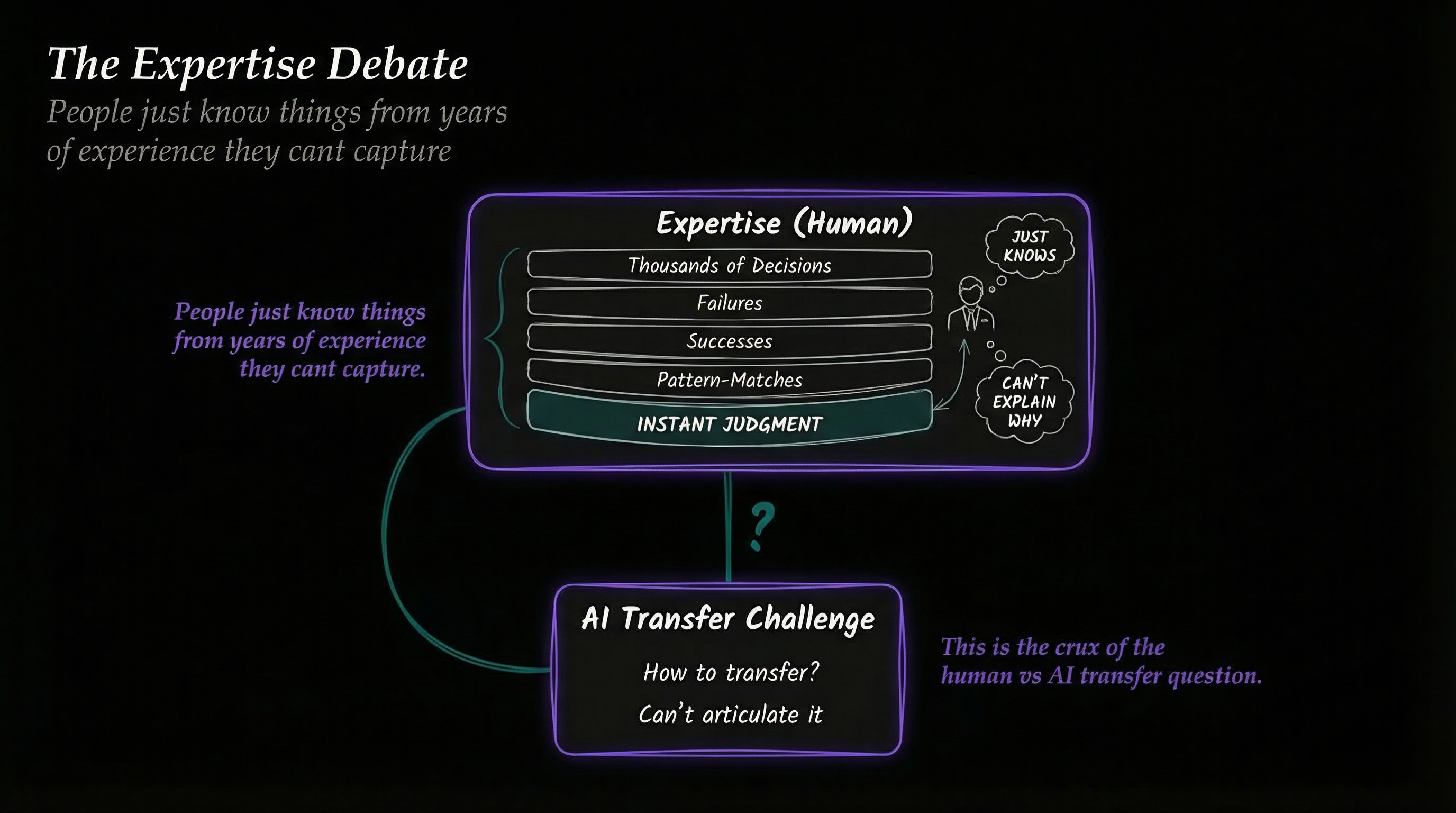

The expertise debate

One of the arguments for why humans cannot be replaced is that they have expertise. This is the thing my friend Marcus Hutchins is always talking about. He says expertise is the thing AI can't do: it can store facts and regurgitate them, but that's not really useful, and that's not what work is.

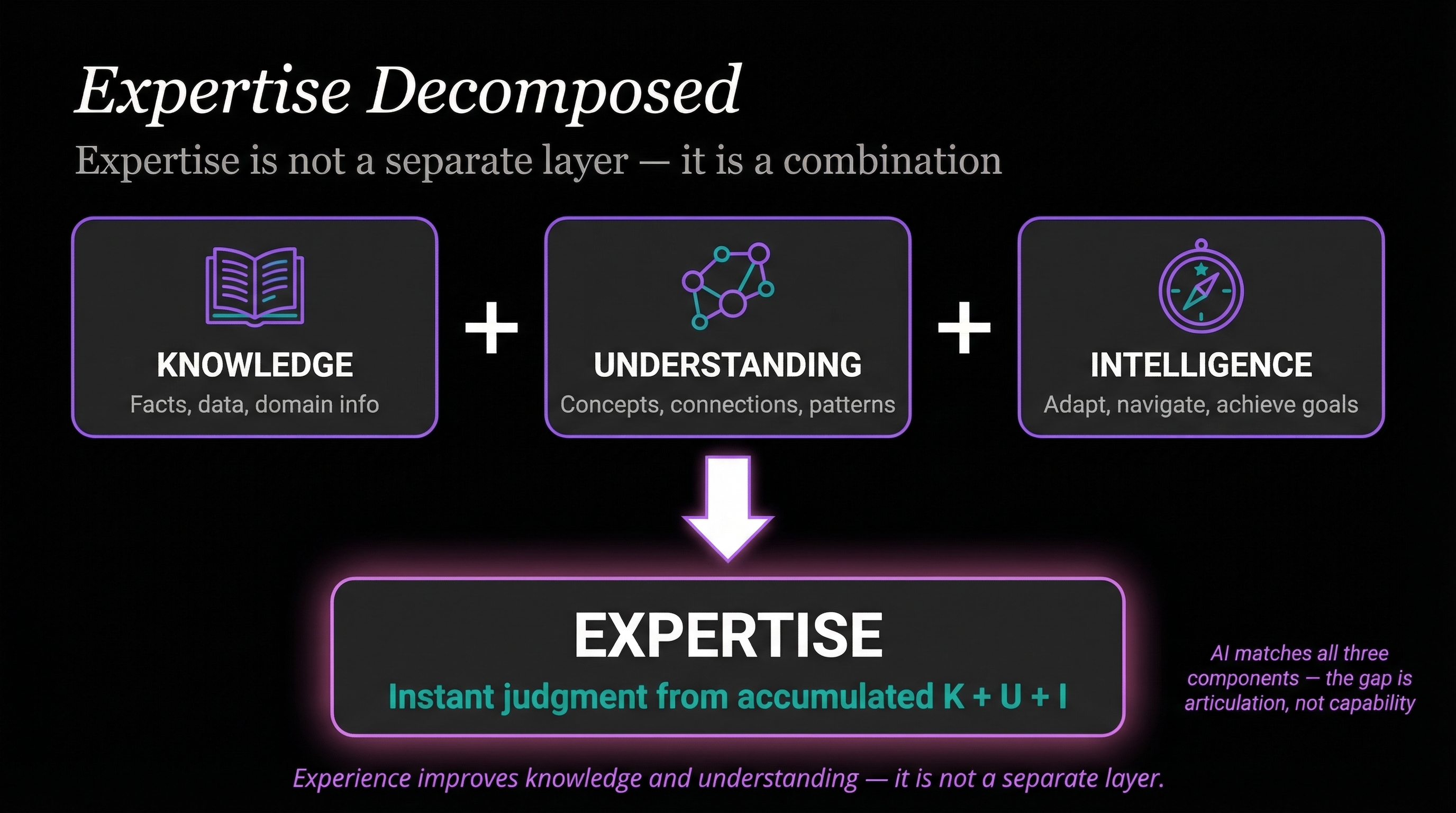

So where is expertise on this stack? Maybe you think I missed a layer. I don't think I did, but I'm challenging you to tell me what I missed.

My answer: expertise is knowledge, understanding, and intelligence combined. Think about what expertise actually is. It's lots of experience: the knowledge, the understanding of the concepts, and the intelligence to adapt, navigate, and change. Those three, built up through experience, are what give you your expertise.

Maybe you'd argue experience should be on the list. But experience is memory. Experience is recall. Experience is accrued iterations of seeing different problems. And ultimately, experience is what improves your knowledge and your understanding. An experienced person has more knowledge and better understanding; they've linked more and more patterns together. Somebody with 25, 30, or 50 years of doing financial audits just immediately sees things. They see patterns.

Here's the question: what part of that does AI not have?

Knowledge. AI's knowledge is insane. It's read every book. It's deeply knowledgeable about thousands of fields and subdomains. It could write a full book on a topic by itself. It could make flashcards about very specific things that very few people on earth know about. Knowledge? Forget about it.

Understanding. My definition of understanding is connecting concepts, finding patterns across domains. And AI is mind-blowingly good at this. It can cross-reference anything. It holds it all in its mind at once. "Oh, this relates to 1930s Russia in the following way." "Well, how does that relate to chicken feed farming practices from Idaho?" "Good question. Here's how it relates." It can also supplement that with tools to go research anything and add to it. Knowledge and understanding? Crushing it. Absolutely crushing it.

Intelligence. Can AI navigate the world and achieve goals? Yes. Absolutely. Take an agent infrastructure with access to tools, company knowledge, all the context, a smart model, and a system of agents, and throw dynamic, changing requirements at it: "We can't do Japan, we need the UK, only people with residency in Singapore, everyone over 35, use this template, and take into account these other 90 things." Those are dynamic, changing situations. Everyone who's used AI recently knows you can throw anything at it and it adjusts.

So can it do intelligence? According to this definition, 100%.

Now let's take creativity off the list entirely. Let's just pretend AI doesn't have it. AI still has knowledge, understanding, and intelligence. AI has the expertise.

This argument that you can't possibly replicate human expertise, human thinking, the quality of human execution of work—everything we've been talking about tells us that's just not true. In fact, the polar opposite is more true. Even decent AI today, given defined tasks, will just crush an average knowledge worker. What are we doing all day? Accepting emails, responding to emails, producing reports, writing code. These basic commodity tasks make up the vast majority of all knowledge work.

The gap is not the model

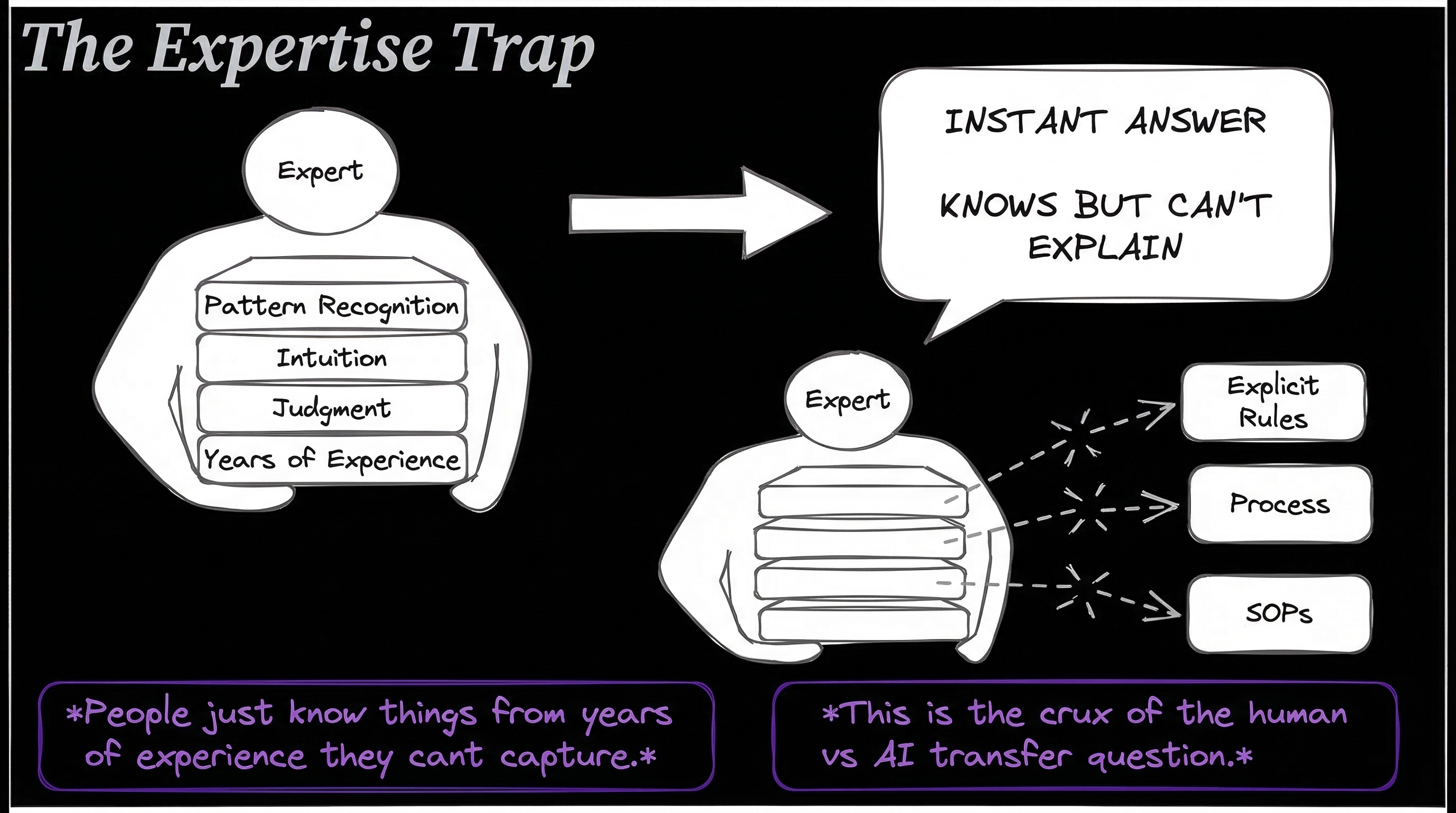

Here's a core point about all of this. A big reason people think there's a gap between what humans have and what AIs have is because the humans have not articulated the stuff. It's not written down.

Think about this. A big reason AI has gotten so good in the last year is Claude Code. What did Claude Code do? Did it have a dramatically better model? No. What it did was build scaffolding, a harness around the model. It built context and context management and relations between documents, giving the model the knowledge, understanding, and intelligence to work with. It linked together everything the model needs to actually do work.

What are skills? Skills are a combination of resources, tools, context, knowledge—a whole bunch of stuff written down. That's the centerpiece. It's essentially the core benefit of a harness versus just a model. When you give something goals, context about how to accomplish something, the tools to use, examples of good and bad—that's a skill.
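To make that definition concrete, here is a minimal sketch of a skill as a plain data structure, assuming a skill is just the bundle listed above: a goal, context, tools, and good and bad examples. The `Skill` class, its field names, the `to_prompt` rendering, and the `read_findings_db` tool are all hypothetical; this is not Claude Code's actual skill format, only an illustration of "a whole bunch of stuff written down" that a harness could wrap around a model.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    """Hypothetical bundle of written-down expertise that a harness can hand to a model."""
    name: str
    goal: str
    context: list[str] = field(default_factory=list)        # background docs, rules, SOP text
    tools: list[str] = field(default_factory=list)          # names of tools the model may call
    good_examples: list[str] = field(default_factory=list)
    bad_examples: list[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        """Render the skill as plain text a harness could prepend to a model request."""
        sections = [f"# Skill: {self.name}", f"Goal: {self.goal}"]
        if self.context:
            sections.append("Context:\n" + "\n".join(f"- {c}" for c in self.context))
        if self.tools:
            sections.append("Tools available: " + ", ".join(self.tools))
        if self.good_examples:
            sections.append("Good output looks like:\n" + "\n".join(self.good_examples))
        if self.bad_examples:
            sections.append("Avoid output like:\n" + "\n".join(self.bad_examples))
        return "\n\n".join(sections)


# Illustrative only: capturing how "Sarah" writes a security assessment summary.
assessment = Skill(
    name="security-assessment-summary",
    goal="Summarize a security assessment for an executive audience.",
    context=["Lead with the three highest-risk findings.", "Never exceed one page."],
    tools=["read_findings_db"],  # hypothetical tool name
    good_examples=["Top risk: exposed admin panel on billing service (critical)."],
    bad_examples=["We found a bunch of issues, see attached."],
)
print(assessment.to_prompt())
```

The point of the design is that nothing in it is model-specific: the same written-down goal, context, and examples would work whether a person or an agent reads them.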

Once you realize how powerful skills are—once you realize how powerful it is to have all this context in one place that wraps an AI model—you start to realize that the problem is not the AI. The gap between human expertise and AI expertise is not the model. It's the fact that we have not articulated our expertise down into files, into tools, into context.

Nobody has any of this written down. All the stuff in Ravi's mind and Suzy's mind and Chris's mind—the way this particular task is supposed to be done, this sending of the emails, the creation of the report, the writing of the code—it's not written down anywhere. It's passed from human brain to human brain, and they go on vacation, go on maternity leave, retire, jump companies every couple of years. That knowledge just dies.

There's some super old guy, 62 years old, his name's Cliff. He's the one you call when things go down and there's no docs. You can't find a thing, you don't know how to get the server back up, people don't even know what servers are anymore—you call Cliff. Cliff has never written the stuff down. He's never created a skill. He's never done a full debrief interview to capture his knowledge.

What's starting to happen now is that knowledge is moving out of brains and into skills. The expertise gap between humans and AI is really our failure, so far, to articulate all that chaos we talked about in the beginning. And this is changing fast. Extremely fast.

The articulation gap

The real barrier between human expertise and AI expertise is not capability. It's capture. Experts know things they cannot explain. They have 20 years of pattern recognition, intuition, and judgment locked inside their heads. Ask them why they made a particular call and they'll say "I just knew." That's real. That expertise is real. But it's trapped.

On the other side, you have AI-ready expertise—skills, SOPs, context files, rules, examples. Written down and executable. Once captured, AI can use it instantly. The gap between those two sides is the articulation gap. And that gap is closing every single day. Every skill written, every process documented, every expert debrief captured—it shrinks permanently.

The expertise ratchet

Here's the thing that should really make you pay attention. This is a one-way process. It's a ratchet. It only goes in one direction.

Think of it like pee in the pool. Once expertise gets captured—into a skill, an open source project, a documented SOP, a published process—it never comes back out. It's permanently in the pool. Every person who documents their knowledge adds to the collective pool. Every open source project, every Claude Code configuration file, every captured process—it all accumulates. And it never drains.

This is happening right now at an accelerating pace. Skills are flooding the AI space. People are documenting what they know and publishing it. Companies are being forced to write down their processes for AI adoption. Expert knowledge that used to die when Cliff retired is now being captured and made permanent.

And here's what makes this devastating: every single piece of expertise that enters the pool makes ALL AI instances smarter. Not one AI. All of them. Simultaneously. Permanently.

The asymmetry

Now think about what this means for the speed comparison between humans and AI.

A human takes 20 to 30 years to develop deep expertise in a single domain. They can only learn one thing at a time. They forget things. They retire. They die. When they leave a company, their knowledge leaves with them. A new hire starts over from scratch.

AI absorbs all captured expertise instantly. It never forgets. It can be duplicated infinitely. Every new skill published, every process captured, every open source contribution—it compounds. Forever. There is no retirement. There is no knowledge loss. There is no starting over.

The gap between human expertise accumulation and AI expertise accumulation is not just big—it's widening every single day. And it's widening at an accelerating rate because the more expertise enters the pool, the easier it becomes to capture even more. AI tools themselves are getting better at extracting knowledge from humans. You can have a conversation with an AI and it extracts your expertise into structured documents automatically.

This cannot be reversed. You cannot take the pee out of the pool. You cannot un-publish skills. You cannot un-document processes. The ratchet only turns one way.

This is why I consider the "don't worry about AI" narrative so dangerous. Every day you spend not preparing, the gap gets wider. Not by a little. By a lot. And it never shrinks back.

The AI-first enterprise

So far I've been talking about the problem. Now I want to talk about what I think the solution is—and what I think is inevitable.

This is an architecture I've come up with that I call the Lattice. I've been working on it for quite a while, and I'm going to release it as an open source project, similar to how I released Fabric and PAI. It's the same system I use with customers to help them solve the chaos we talked about.

The Lattice system

The concept addresses the transparency problem: all these different groups are opaque, the work is opaque, and the processes and SOPs are not clear.

Lattice has a single unified daemon that everything reports up into. You have the different tiers of organization: company level, department, team, and individual. Each has SOPs, metrics, work, goals, budget, and all the other components. And each level has the same structure.

As an individual, you have your personal SOPs, the way you like to get things done. Your personal budget, your metrics, your knowledge captured in skills. And most importantly, your individual unit is broadcasting APIs. You as a person—Sarah, Ravi, Chris—you are broadcasting what your SOPs are, what your metrics are, what your budget is, what your work items are. They're available for any agent, any team member, the agent for the team, the department, or the company to query in real time.
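Because every level has the same structure, the tiers map naturally onto a recursive tree. The sketch below assumes exactly that and nothing more; it is not the Lattice implementation, and `Node`, its fields, `report`, `walk`, `rollup_budget`, and the "Acme" org are hypothetical names chosen for illustration. It shows how a company-level answer, such as total spend, falls out of walking down from the root.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Node:
    """One tier of the organization: company, department, team, or individual.

    Every tier carries the same fields, which is what lets the same query
    work at any level. All names here are hypothetical.
    """
    name: str
    tier: str                      # "company", "department", "team", or "individual"
    goals: list[str] = field(default_factory=list)
    sops: list[str] = field(default_factory=list)
    metrics: dict[str, float] = field(default_factory=dict)
    work: list[str] = field(default_factory=list)
    budget: float = 0.0            # this node's own spend, excluding its children
    children: list[Node] = field(default_factory=list)

    def report(self) -> dict:
        """What this node 'broadcasts': its own state, without its children."""
        return {"name": self.name, "tier": self.tier, "goals": self.goals,
                "sops": self.sops, "metrics": self.metrics,
                "work": self.work, "budget": self.budget}

    def walk(self):
        """Yield this node and every node beneath it, depth first."""
        yield self
        for child in self.children:
            yield from child.walk()

    def rollup_budget(self) -> float:
        """Total spend for this node and everything under it."""
        return sum(node.budget for node in self.walk())


sarah = Node("Sarah", "individual", work=["Q3 vendor risk review"], budget=180_000)
ravi = Node("Ravi", "individual", work=["Threat model for checkout"], budget=170_000)
appsec = Node("AppSec", "team", budget=50_000, children=[sarah, ravi])
security = Node("Security", "department", children=[appsec])
company = Node("Acme", "company", goals=["Ship the new payments platform"], children=[security])

print(company.rollup_budget())  # 400000.0
```

Keeping each node's own budget separate from its children's is a deliberate choice here: every tier stays authoritative over its own numbers, and totals are computed rather than copied, so they can't drift.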

Most importantly, this allows the CEO and the CFO to actually look down and say: this is the work we're doing.

The daemon API network

Goals, plans, SOPs, metrics, work, cost, quality, the workflows for the company: this stuff is extremely opaque to the leaders of a company right now, and extremely hard to gather. Lattice turns gathering it into a matter of seconds. Less than a second, oftentimes. Minutes at the absolute maximum for a super large company.

Each tier (worker, team, department, company) is independently authoritative over its own stuff. I could just query: "Hey, what are my coworkers working on? I'm thinking about working on this." And the system says: "Oh yeah, Julie's already working on that. You should talk to Modi, they're already on it. Maybe you can do a collab. Do you want me to set up a meeting?" Boom, done. Now it has posted that you're in a meeting and collaborating on this feature. That's now available in your API. When someone asks if anyone's working on this, the answer now includes you, and that's visible to other teams, other departments, all the way up to the company level.
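The coworker query can be approximated, very roughly, as a search over what each person broadcasts. This sketch assumes each person publishes a list of work items, and it uses naive keyword overlap as a stand-in for whatever matching a real agent would do. The `registry`, `keywords`, `find_related_work`, and `publish` names are hypothetical, not part of any released Lattice API.

```python
import re

# Hypothetical registry: what each person currently broadcasts as their work items.
registry: dict[str, list[str]] = {
    "Julie": ["Build SSO login for the partner portal"],
    "Modi": ["Partner portal SSO integration tests"],
    "Chris": ["Quarterly offsite logistics"],
}

STOPWORDS = {"the", "for", "a", "an", "of", "to", "and", "on"}


def keywords(text: str) -> set[str]:
    """Lowercased words minus common stopwords, a crude stand-in for semantic matching."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def find_related_work(idea: str, min_overlap: int = 2) -> list[tuple[str, str]]:
    """Return (person, work item) pairs sharing at least `min_overlap` keywords with the idea."""
    wanted = keywords(idea)
    matches = []
    for person, items in registry.items():
        for item in items:
            if len(wanted & keywords(item)) >= min_overlap:
                matches.append((person, item))
    return matches


def publish(person: str, item: str) -> None:
    """Add a new work item so that future queries from anyone can see it."""
    registry.setdefault(person, []).append(item)


idea = "I'm thinking about adding SSO to the partner portal"
print(find_related_work(idea))
# [('Julie', 'Build SSO login for the partner portal'), ('Modi', 'Partner portal SSO integration tests')]

# After the collab meeting is set up, the new work item is broadcast too.
publish("Ravi", "Collaborate with Julie and Modi on partner portal SSO")
print(len(find_related_work(idea)))  # 3
```

The `publish` step is what makes the answer stay current: the next person who asks the same question also sees the collaboration, without anyone sending an update email.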

The AI enterprise graph

This is the type of view that CEOs, CFOs, any manager wants to see. All the different processes, all the different components. Human components here, automated components there, review here, audit there, quality check over here. You can't optimize what you don't understand. You can't optimize what you don't see.
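A workflow in that graph could be as simple as an ordered list of steps, each tagged with who or what performs it. This sketch assumes each step records a cost, a duration, and a quality score; the `Step` type, `summarize`, the vendor-review workflow, and every number in it are made up for illustration, not real measurements. Once the steps are explicit, the questions a CEO asks (what does this cost, how long does it take, where is quality weakest) become one-line computations.

```python
from dataclasses import dataclass


@dataclass
class Step:
    """One node in a workflow, performed either by a human or by an automated component."""
    name: str
    performer: str        # "human" or "automated"
    cost_usd: float       # cost per run of this step
    minutes: float        # duration per run of this step
    quality: float        # 0.0 to 1.0, however the organization chooses to score it


# Hypothetical workflow with invented numbers, for illustration only.
vendor_review = [
    Step("Collect vendor questionnaire", "automated", 2.0, 5, 0.95),
    Step("Score answers against policy", "automated", 4.0, 10, 0.90),
    Step("Human review of flagged items", "human", 150.0, 90, 0.85),
    Step("Audit sample of approvals", "human", 60.0, 30, 0.92),
]


def summarize(workflow: list[Step]) -> dict:
    """Per-run totals for the workflow, plus its weakest step and the human share of cost."""
    total_cost = sum(s.cost_usd for s in workflow)
    human_cost = sum(s.cost_usd for s in workflow if s.performer == "human")
    return {
        "cost_usd": total_cost,
        "minutes": sum(s.minutes for s in workflow),
        "lowest_quality_step": min(workflow, key=lambda s: s.quality).name,
        "human_share_of_cost": human_cost / total_cost,
    }


print(summarize(vendor_review))
```

Nothing here decides what to optimize; it only makes the workflow visible enough that someone can.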

Goals get canonicalized

Think of what happens right now when a company changes its goals. How many people are triggered by the word OKR? They change all the time. People have different systems for doing them. Imagine a giant all-hands meeting where the CEO says: "These are the new goals. These are the new metrics. Here are new SOPs." Boom—they've been published. All documentation is now updated. Goes all the way down. Where's the text file? Right here.
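One way to read "goes all the way down" is as a single publish at the top that every lower tier picks up automatically. This minimal sketch assumes each unit references the canonical goal set instead of keeping its own copy, so there is nothing to fall out of date; `OrgUnit`, `effective_goals`, `publish_goals`, and the sample goals are hypothetical names for illustration, not the Lattice design.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class OrgUnit:
    """A tier in the organization. Units reference the canonical goals instead of copying them."""
    name: str
    parent: OrgUnit | None = None
    local_goals: list[str] = field(default_factory=list)   # tier-specific additions
    canonical: dict = field(default_factory=dict)          # only populated on the root

    def root(self) -> OrgUnit:
        unit = self
        while unit.parent is not None:
            unit = unit.parent
        return unit

    def effective_goals(self) -> tuple[int, list[str]]:
        """Current company goals (with version) plus goals added by this unit and its ancestors."""
        top = self.root().canonical
        inherited: list[str] = []
        unit = self
        while unit is not None:
            inherited = unit.local_goals + inherited   # ancestors' goals come first
            unit = unit.parent
        return top.get("version", 0), top.get("goals", []) + inherited


def publish_goals(company: OrgUnit, goals: list[str]) -> None:
    """Replace the canonical goal set in one place; every unit sees it on its next read."""
    version = company.canonical.get("version", 0) + 1
    company.canonical = {"version": version, "goals": list(goals)}


company = OrgUnit("Acme")
security = OrgUnit("Security", parent=company, local_goals=["Zero critical findings open > 30 days"])
appsec = OrgUnit("AppSec", parent=security)

publish_goals(company, ["Grow EU revenue 20%"])
publish_goals(company, ["Grow UK revenue 20%", "Cut vendor spend 10%"])  # the goals change again
print(appsec.effective_goals())
# (2, ['Grow UK revenue 20%', 'Cut vendor spend 10%', 'Zero critical findings open > 30 days'])
```

The version number gives every tier a cheap way to tell whether it's looking at the current goals, which is the part that today gets lost between the all-hands and the team wiki.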

This is not magic stuff. This is not difficult stuff. We are doing much more difficult stuff with AI already. This is just not a system that's been built yet, and it will take time to roll out.

How software buying changes

Someone comes up, takes you to a steak dinner, and they're like: "Hey, we have the best image background removal process. Ours is way better." So you do a pilot, spend six weeks coming up with test criteria, A/B test the thing.

That goes away when you have a lattice of all the different pieces that make up your company. One particular node—one of hundreds or thousands or millions of nodes—is the algorithm for doing image background removal. Today we don't really have metrics on that. What we have is: "Yeah, it works pretty good."

What we're moving toward: all of these have metrics. They're all clearly defined. A vendor doesn't come with a steak dinner anymore. We show them our metrics. What are your ratings? What are your cost numbers? If all they brought was the steak dinner, that's not a conversation anymore.
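The vendor conversation described above amounts to comparing a challenger's numbers against the incumbent's for a single node. A minimal sketch, assuming two hypothetical metrics (`quality` on the company's own evaluation set and `cost_per_1k` in dollars per 1,000 images) and a simple rule: a challenger replaces the incumbent only if it Pareto-dominates it, meaning it is no worse on either metric and strictly better on at least one. Real procurement would weigh more dimensions; the names and numbers here are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VendorBid:
    vendor: str
    quality: float      # hypothetical score on our own eval set, 0.0 to 1.0
    cost_per_1k: float  # hypothetical cost in USD per 1,000 images


def dominates(a: VendorBid, b: VendorBid) -> bool:
    """True if a is no worse than b on both metrics and strictly better on one."""
    no_worse = a.quality >= b.quality and a.cost_per_1k <= b.cost_per_1k
    strictly_better = a.quality > b.quality or a.cost_per_1k < b.cost_per_1k
    return no_worse and strictly_better


def choose_vendor(incumbent: VendorBid, bids: list[VendorBid]) -> VendorBid:
    # Keep the incumbent unless a bid beats it on the numbers; the dinner is not an input.
    best = incumbent
    for bid in bids:
        if dominates(bid, best):
            best = bid
    return best


incumbent = VendorBid("Current tool", quality=0.91, cost_per_1k=4.00)
bids = [
    VendorBid("Vendor A", quality=0.88, cost_per_1k=3.50),  # cheaper but worse
    VendorBid("Vendor B", quality=0.93, cost_per_1k=3.80),  # better and cheaper
]
print(choose_vendor(incumbent, bids).vendor)  # Vendor B
```

Because dominance is transitive, a bid that fails to beat the current best can never beat a later winner either, so the returned bid is not dominated by any bid considered. Vendor A is cheaper but worse, so under this rule it does not get the contract.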

The optimization view

Once you have those visuals, once you have those workflows, things fundamentally change. The human becomes the decision-maker, not the doer.

The reverse automation frame

If you think about it in terms of "we have the humans, now let's add AI"—I have the same instinct, it feels uncomfortable because they're coming into our processes. Here's another way to think about this.

Imagine you have a factory production line that produces iPhones. Soldering nanometer transistors. This thing is amazing, fully automated, just flowing perfectly. Who would say: "You know what this thing really needs? A human touch. Let's get some humans in here." Do you have any idea what would happen if you tried to ask a human to solder nanometer transistors onto circuit boards? It would not go well. Also known as: impossible.

Or take an amazing Excel sheet. 36 months of work, handles the entire company's finances, just produces the best results, never messes up. Where on the spreadsheet should we add a human to help?

When automation works, when AI workflows work, when the Lattice system works, the answer will not be to add humans to the thing. It becomes extremely obvious that adding humans actually makes it worse. With one exception, which we'll get to next.

What humans actually do better

I promised some good news and this is where we get to it.

So far: the current state of work is not good. The idea of the human as a perfect thinking machine is not correct. And the Lattice system shows where this is headed. Now let's talk about what humans are actually supposed to do.

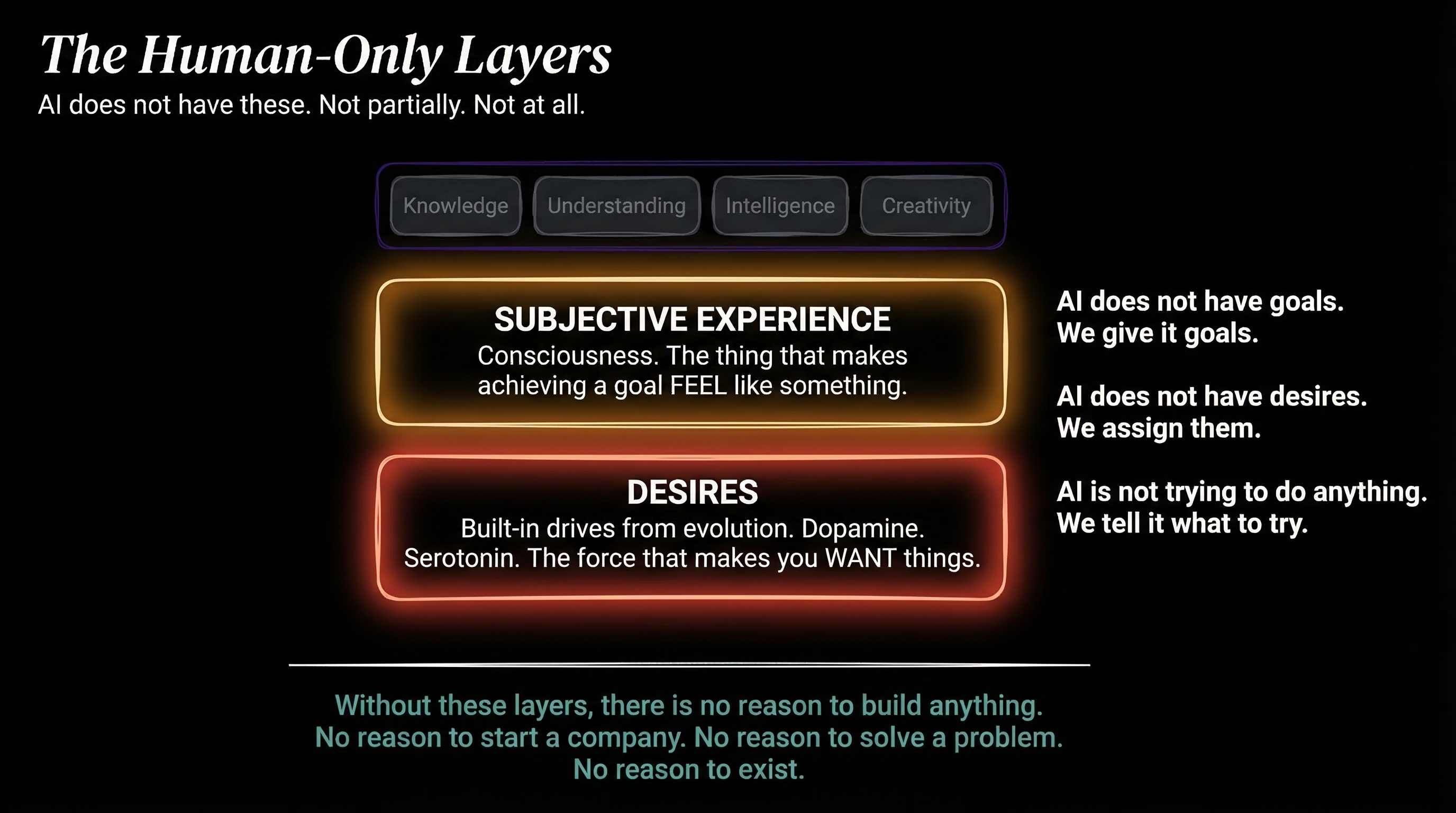

The human-only layers

Remember when we talked about the four layers—knowledge, understanding, intelligence, creativity? There are two layers underneath, and these are the layers that AIs don't have. AI matches the top four—but has none of the bottom two.

Subjective Experience. Consciousness. The thing that makes achieving a goal feel like something.

Desires. Built-in drives from evolution. The reward and punishment system—dopamine, serotonin—the force that makes you WANT things.

AI does not have goals. We give it goals. AI does not have desires. We assign them. AI is not trying to do anything. We tell it what to try.

Imagine that whole lattice workflow again, fully automated. Amazing. Doesn't need humans because it's better, faster, more consistent, higher quality, cheaper. Fine. Who decides what to make? Who decides what company to build in the first place?

AI doesn't see a problem in the world and go: "I just don't like that. That's not the way they should be treated. This is a horrible experience. I don't like this product, we should make a better one." AI is not doing that. Why? Because it doesn't experience anything. It doesn't feel bad. It doesn't come up with ideas on its own. If you ask it to, it can—but it's not sitting around dormant and unhappy.

We are unhappy. We have these desires and this subjective experience because we are powered by evolution. We are basically mech-suits around evolution, which is trying to do things through us. Our whole reward-and-punishment chemistry (dopamine, serotonin, cortisol) exists to steer us toward our desires. Do we have any control over our desires? Can you suddenly decide to like broccoli when you didn't a second ago? No. You can't really control what you desire; you just have the desires you have. You can try to use your intellect to steer them, but in general, we are being powered by a very ancient, powerful thing.

That drive makes us fundamentally different from AIs. AIs are dormant. They just sit there. They execute things we want. When they act like they feel things, when they act like they have goals—that is not coming from their true center of self the way it does for humans.

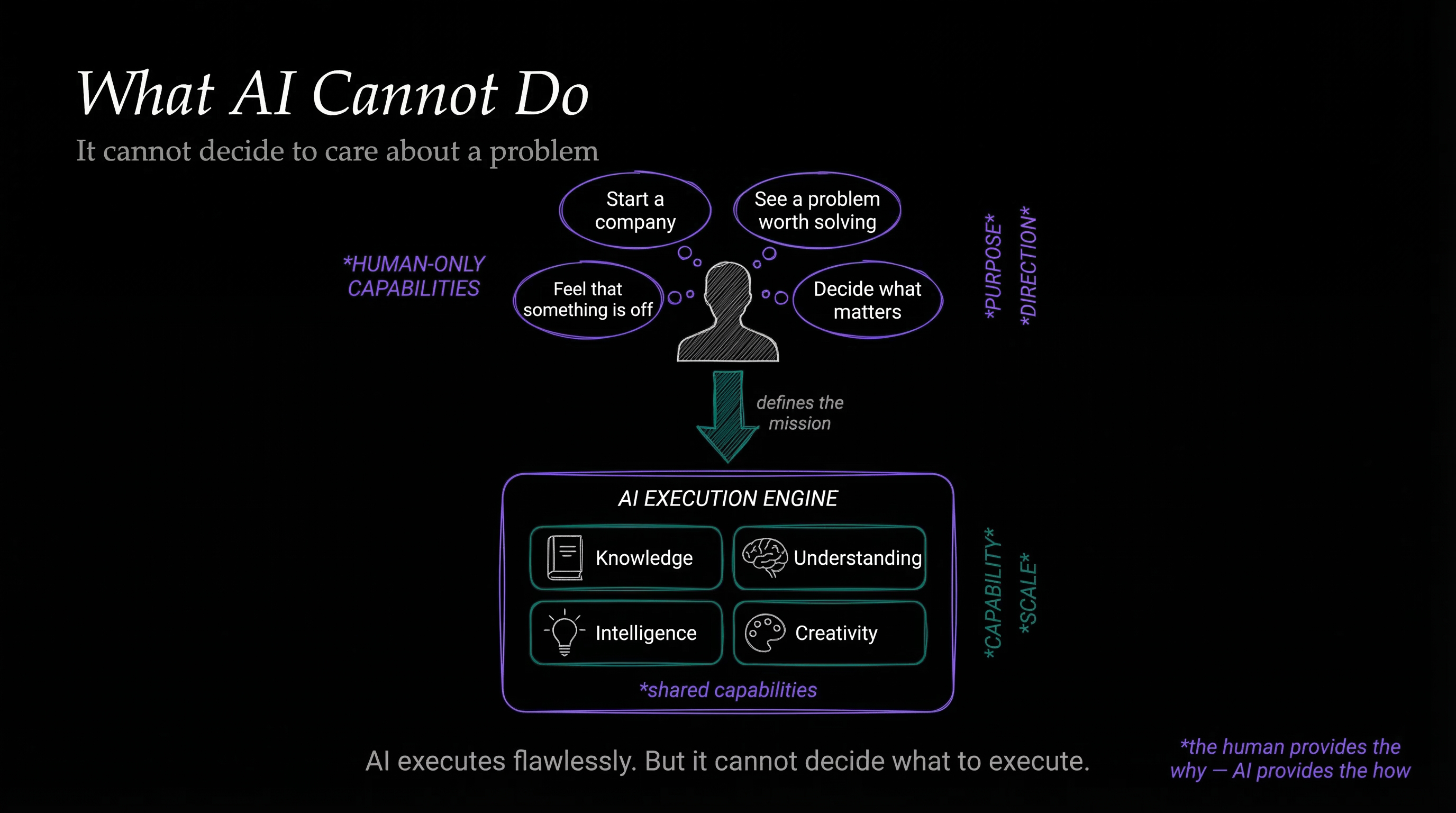

The human as overseer

Everything we've been talking about in this piece, everything around why AI is going to replace us doing work, is about execution. It's all about implementing someone's idea: a founder's idea, a company builder's idea. Well, guess what? There are a trillion more ideas out there that need to be made. And AI makes it easy to actually implement them.

Where are humans in this process? They are at the top. Who determines what the company should be doing, why they're doing it, what problem they're solving? That is only human.

You can have 20 companies and a dashboard that looks down at all 20 of them. They're all doing awesome stuff. You drill in and say: "Hey, we're not doing this anymore. We're doing it a different way. I want to optimize this." And the AI goes and executes.

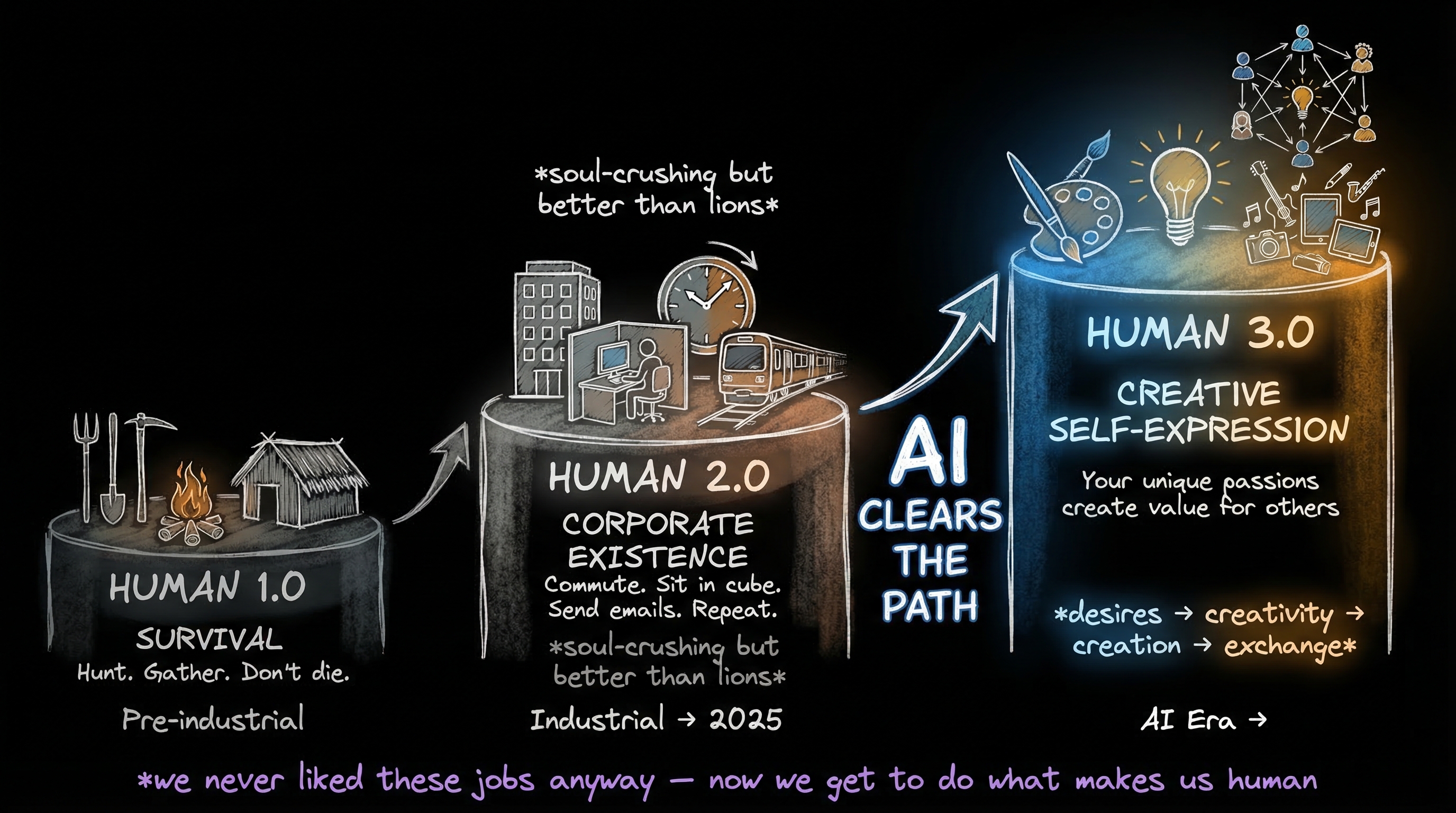

Why this is good news

It's totally understandable to freak out when jobs are going away and AI is taking them. The natural tendency when something is yanked from your arms is to pull it closer.

But that work was garbage. It is garbage. It was garbage for decades. And AIs are coming to take it—fine.

We should not be the row in the Excel sheet. We should not be the section of the robot army that solders the transistor. We should not be the one in the car dreading going into the office Monday morning. That all should never have happened. And we knew that before 2022.

The place we're going is where humans actually have ideas. People are broadcasting their own capabilities, their own plans, their own creativity, their own beliefs, their own creations. Nurturing people. Helping people grow. Creating new art, new experiences for other people. And coming up with companies to do this.

This is what I call Human 3.0.

- Human 1.0—Survival. Hunt. Gather. Don't die.

- Human 2.0—Corporate existence. Commute. Sit in cube. Send emails. Repeat. Soul-crushing, but better than lions.

- Human 3.0—Creative self-expression. Becoming your full self, magnifying your true self, understanding your true self, broadcasting it, and interconnecting that with other humans who are doing the same—so we can have value exchange from human to human without the need for corporate hierarchy.

It's all going away. But it wasn't good in the first place. We don't need to hold on to it. We need to get to the other side, where we can look down at a Lattice-like system where all the AI is executing, but we decided what to build. We started that business. We built that company.

This is why I care so much about this. This is why I push back on the argument that AI will not replace knowledge work. It is going to replace it, and we need to get ready. And it's not bad news. It's actually really good news.

Notes and sources

Libet, B. et al. (1983). "Time of conscious intention to act in relation to onset of cerebral activity." Brain, 106(3), 623-642. Brain activity precedes conscious awareness of decisions by ~350ms.

Harris, S. (2012). Free Will. Free Press. "You no more decide the next thought you think than you decide the next thought I write."

Nader, K., Schafe, G.E., LeDoux, J.E. (2000). "Fear memories require protein synthesis in the amygdala for reconsolidation after retrieval." Nature, 406, 722-726. Memory reconsolidation: recalling a memory rewrites it.

Levelt, W.J.M. (1989). Speaking: From Intention to Articulation. MIT Press. See also Levelt (1999), "A blueprint of the speaker." Incremental sentence production: speakers don't know how their sentence ends when they start.

Schultz, W., Dayan, P., Montague, P.R. (1997). "A neural substrate of prediction and reward." Science, 275(5306), 1593-1599. Dopamine neurons encode reward prediction errors.

Berridge, K.C. & Robinson, T.E. (1998). "What is the role of dopamine in reward: hedonic impact, reward learning, or incentive salience?" Brain Research Reviews, 28(3), 309-369. The "wanting" vs. "liking" distinction in the reward system.

Killingsworth, M.A. & Gilbert, D.T. (2010). "A wandering mind is an unhappy mind." Science, 330(6006), 932. People spend 46.9% of waking hours thinking about something other than what they're doing. n=2,250.

Hasenkamp, W. et al. (2012). "Mind wandering and attention during focused meditation." NeuroImage, 59(1), 750-760. Even trained meditators cycle through attention and mind-wandering on a timescale of seconds.

Smallwood, J., McSpadden, M., & Schooler, J.W. (2007). "The lights are on but no one's home." Psychonomic Bulletin & Review, 14(3), 527-533. Nearly half of mind-wandering episodes occur without any meta-awareness.

YouGov (2025). "Most Americans didn't read many books in 2025." 40% of Americans read zero complete books; median was 2 books. n=2,203.

OECD PIAAC (2024). "U.S. National Results." 28% of US adults have literacy at Level 1 or below (up from 19% in 2017); 34% have numeracy at Level 1 or below. Data collected 2022-2023.

FINRA Foundation (2024). "National Financial Capability Study." Only 27% pass a 7-question financial literacy quiz; only 4% answer all correctly. n=25,500.

U.S. Chamber of Commerce Foundation (2023). "Alarming lack of civic literacy." Over 70% failed a basic civics quiz. n=2,000 registered voters.

NSF (2024). Science & Engineering Indicators: Public Attitudes. ~26% of Americans still incorrectly answer that the Sun orbits the Earth. Stable since the 1980s.

Bone, J.K. et al. (2025). "The decline in reading for pleasure." iScience, 28(9). Daily pleasure reading dropped from 28% to 16% (2004-2023). n=236,270.

ILO (2024). Global Wage Report 2024-25. Global labour income share ~52.4% of GDP (~$55T total compensation).

Bureau of Labor Statistics. Quarterly Census of Employment and Wages. US total wages ~$11.7T (2024).

Gallup (2025). State of the Global Workplace. 21% engaged, 62% not engaged, 15% actively disengaged. Disengagement cost $438B in 2024. n=227,347.

Gallup (2024). "U.S. Employee Engagement Sinks to 10-Year Low." 31% engaged in US, the lowest since 2014. 52% quiet quitting.

Kickresume (2024). "Sunday Scaries Survey." 70% of workers experience Sunday dread; 36% every single week. n=2,144.

McKinsey. "Help Your Employees Find Purpose." Only 15% of frontline workers say their work fulfills their sense of purpose.

Microsoft/LinkedIn (2024). Work Trend Index. 46% of workers considering quitting. n=31,000 across 31 countries.

McKinsey Global Institute (2017). A Future That Works. Only 4% of US work activities require creativity at median human level.

Stanford HAI (2025). AI Index Report 2025. Comprehensive annual survey of AI technical performance vs. human baselines across domains.

Stanford HAI (2025). Technical Performance Chapter. AI exceeds human expert baselines on MMLU; SWE-bench solve rates rose from 4.4% to 71.7% in one year; AI agents outperform human experts on short-horizon research tasks.

Shannon, C.E. & Weaver, W. (1949). The Mathematical Theory of Communication. University of Illinois Press. The foundational model of signal degradation.

Anthropic (2025). "Claude Code Skills." Documentation on building AI skills — expertise packaged as context for AI execution.

Miessler, D. "Companies Are Just a Graph of Algorithms." Every company is a graph of interconnected algorithms executed mostly by humans.

Miessler, D. "Human 3.0: The Creator Revolution." The framework for human evolution from survival to corporate existence to creative self-expression.

Miessler, D. "The Coming Future of Unemployable Humans." Automation's impact on employment and why jobs are disappearing faster than new ones appear.

Miessler, D. "AI's Predictable Path: 7 Components." The complete trajectory of AI development.

Miessler, D. "Building a Personal AI Infrastructure (PAI)." Context management systems that bridge the gap between human expertise and AI capability.

Miessler, D. "AI Isn't the Thing. It's the Thing That Enables the Thing." AI as enabler, not replacement.