Thinking About the Future of InfoSec (v2022)

I’m starting a new series with this 2022 edition where I think about what Information Security could or should look like in the distant future—say in 2050. The ideas will cover multiple aspects of InfoSec, from organizational structure to technology.

I’m doing this for fun—basically to see how dumb I look later—but I also hope it’ll drive interesting discussions on where things should go.

Introduction

At the highest level, I think the big change to InfoSec will be a loss of magic compared to now. In the next 15-30 years we’ll see a move from wizardry to accounting, and a much more Operational Technology approach to the discipline in general.

A big part of this will be simply doing the basics well, in a standardized way. That means asset management, identity, access control, logging, etc. We’re currently in our pre-teen years here, which is the source of most of our problems. It means most similar types of products (APIs, hosts, datastores, etc.) looking and behaving similarly, with similar controls and interfaces regardless of vendor or implementation. It means standard APIs for auditing and controlling configuration and access to these systems, in addition to using them, so that you can continuously and programmatically determine an object’s security level.
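To make "programmatically determine an object’s security level" a bit more concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `Auditable` interface, the control names, and the scoring rule are hypothetical and not part of any real standard or vendor API. The idea is only that different product types answer the same audit question in the same shape.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Protocol


class Auditable(Protocol):
    """Hypothetical vendor-neutral audit interface (not a real standard)."""

    def audit(self) -> dict[str, bool]:
        """Return a mapping of control name -> whether the control passes."""
        ...


@dataclass
class Datastore:
    encrypted_at_rest: bool
    public_access: bool
    logging_enabled: bool

    def audit(self) -> dict[str, bool]:
        return {
            "encryption_at_rest": self.encrypted_at_rest,
            "no_public_access": not self.public_access,
            "logging": self.logging_enabled,
        }


@dataclass
class Host:
    disk_encrypted: bool
    open_ports: list[int]
    logging_enabled: bool

    def audit(self) -> dict[str, bool]:
        # Same control names as Datastore, so callers need no special cases.
        return {
            "encryption_at_rest": self.disk_encrypted,
            "no_public_access": len(self.open_ports) == 0,
            "logging": self.logging_enabled,
        }


def security_level(obj: Auditable) -> float:
    """Illustrative score: fraction of controls that pass (0.0 to 1.0)."""
    results = obj.audit()
    return sum(results.values()) / len(results)
```

Because both classes expose the same `audit()` shape, a monitoring loop could call `security_level()` on any object without knowing who built it, which is the point of the standardization argument.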

Let’s look at the aspects that will contribute.

Org Structure

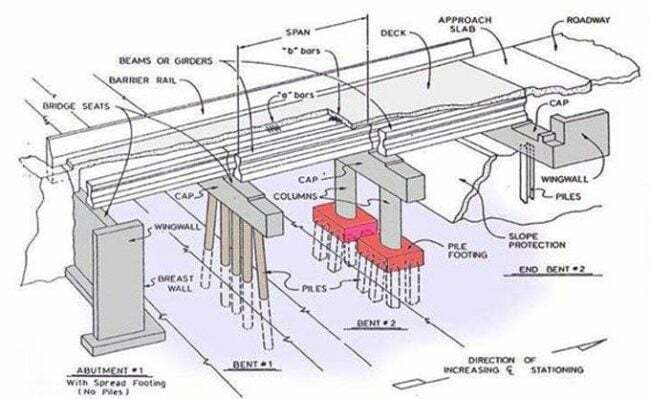

I think security will live within engineering in the future, much like building "secure" bridges isn’t a separate department within civil engineering. There’s not a "bridges don’t fall down" department because bridges aren’t supposed to fall down. We’re just not there yet with (info)security because it’s a brand-new field.

I think this might mean that security becomes a smaller oversight function up with the C-Suite, with strong collaboration with the CFO and the head of legal.

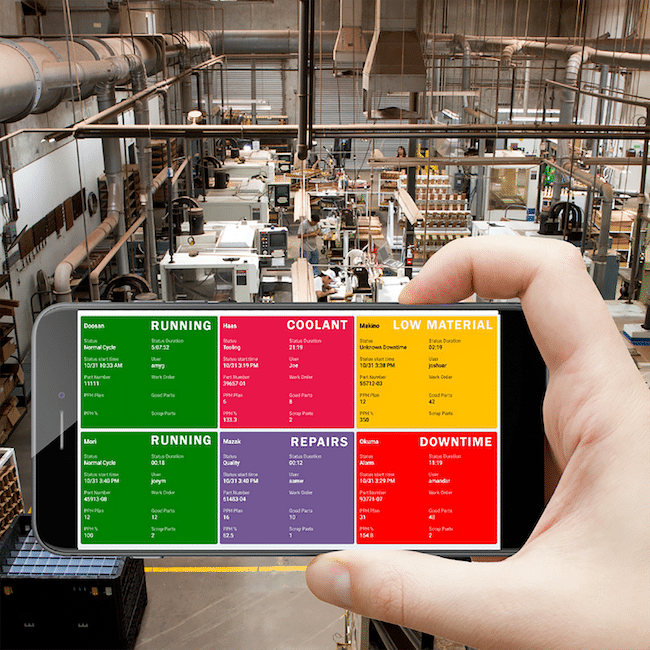

There will be a large Operational team that does nothing but monitor everything to ensure they’re within tolerances. This includes making sure only the right people are looking at the right data, only the right people are logging into the right systems, that services are available with enough 9’s, that they’re responsive, etc. Essentially the business will see what the risk tolerance is for all these items, and that’s what the Ops team will monitor in their dashboards. Again, like a factory.

AWS is one of the few things I could see being around in 20 years.

This smaller security team will be responsible for analyzing data from the various telemetry sources and ensuring that everything is within tolerance. This will include things like cloud configurations for open protocols, open ports, authenticated entities, encryption at rest, encryption in transit, who is accessing what items, etc.

They’ll basically be watching massive dashboards and managing responses to stimuli when things happen that are out of the ordinary, and then coordinating with the various oversight groups (foreshadowing: insurance) who are jointly monitoring and/or asking questions about their security posture.
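The "within tolerance" idea can be sketched in a few lines of Python. This is only an illustration under assumed names: the `Tolerance` type, the metric names, and the thresholds are all hypothetical, standing in for whatever the business decides its risk tolerances are.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Tolerance:
    """A business-defined acceptable range for one metric (hypothetical)."""
    metric: str
    minimum: float
    maximum: float


def out_of_tolerance(
    readings: dict[str, float], tolerances: list[Tolerance]
) -> list[str]:
    """Return one human-readable violation per metric outside its range.

    A metric with no reading at all is also reported, since an object
    that stops sending telemetry is itself an anomaly.
    """
    violations = []
    for tol in tolerances:
        value = readings.get(tol.metric)
        if value is None:
            violations.append(f"{tol.metric}: no telemetry received")
        elif not (tol.minimum <= value <= tol.maximum):
            violations.append(
                f"{tol.metric}: {value} outside [{tol.minimum}, {tol.maximum}]"
            )
    return violations


# Example tolerances; the names and numbers are made up for illustration.
TOLERANCES = [
    Tolerance("availability_pct", 99.9, 100.0),
    Tolerance("open_public_ports", 0, 0),
    Tolerance("unencrypted_volumes", 0, 0),
]
```

Feeding it `{"availability_pct": 99.95, "open_public_ports": 2}` would flag the open ports and the missing `unencrypted_volumes` reading, which is roughly what would turn a dashboard tile from green to red.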

Technology

I think the main difference we’ll see in security tooling will be the integration with the actual IT applications, much like security engineering will be integrated with regular engineering. So there will be some security tooling still, but it’ll be more oversight-based rather than doing the main part of the work.

Similar to AWS now actually, where you have EC2 and Kubernetes configuration, and you have security configurations within those tools. There are separate security offerings from AWS, but from my view we’re already seeing a lot of that tooling move into the core services themselves. The security tooling becomes more about policy, dashboards, and reporting.

Regulation

Some of this depends on the state of the world, i.e., how powerful centralized governments actually are in 20+ years, but let’s assume it’s much the same as now, just more. If that’s the case, we should expect laws to evolve to shorten the time in which incidents must be reported, and we should expect this for not just government organizations, but for corporate entities as well.

Basically, the argument will be made that everything is connected, and that people depend on these corporate services, therefore they’re national infrastructure to some extent. And thus you must report issues in a very timely manner. As the tech evolves I think certain high-scrutiny organizations will be required to run a giant monitoring brain that the government can tap into when needed or, in some cases, continuously.

HT to Jeremiah Grossman for also being very early in seeing the role of insurance in InfoSec.

Don’t worry, companies will feel more comfortable about this because the insurance companies will already be in that system. It’s good news / bad news.

Regulators will be everywhere. You’ll have to report things very quickly, and there will be severe fines for not doing so. The main difference from today will be that 1) there will be more reporting required, at shorter intervals, and 2) many of those reports will need to basically be real-time through technology, at least for high-criticality gov/private organizations.

Insurance

As Jeremiah and I have been talking about for years, I think insurance will be a major player in the future of InfoSec. Much of the maturity we’re going to see will come from innovations by the organizations that have the most to gain from improvement. When it comes to cars and houses, that push came significantly from insurance companies.

You need these inspections or else you can’t get insured. You need smoke alarms. You need this. You need that.

We don’t yet know what those things are for security, but I can tell you who really cares what they are. Insurance companies. And they’re already instituting all sorts of visibility practices, like requiring that you install a black box on the network to get a sense of your environment. Exactly like Progressive asking you to install a box in your car that can monitor how you drive. Or health insurance companies asking for access to your mobile phone’s Health app.

The result of this is easily predictable: as insurance companies determine what works and what doesn’t, they’ll start requiring certain solutions and rating solutions in general.

Did you know Michelin Star Restaurants are associated with Michelin tires? It’s weird how history plays out like that. They did a campaign about restaurants you could encounter while driving (on Michelin tires, obviously), and that turned into a cornerstone of the restaurant industry.

Well, expect to see Allstate Ratings for Zero Trust solutions too. It’s a lot more inevitable than the Michelin thing, actually, because insurance companies are the ones who are incentivized to find out what actually works. Once they know, they can steer their customers toward those solutions to reduce their own payouts.

Automation and AI

I was going to put this in the Technology section, but it’s worth its own treatment. Machine Learning will continue to soak into all of the world’s technology, and that includes security technology. It’s hard to say, but I do anticipate being able to replicate Level 2 or even Level 3 security analysts in some small domains, when the technology is highly advanced and the attack types are relatively static. Most of the benefit of ML, though, will come not from adding 20 L3 analysts, but from adding 100,000 mid-level interns.

The more standardization we have in our tech, and the more logging is required, the more data there will be to look at. This problem is not solved by getting more people into the security field. There’s too much data for all the humans on the planet to analyze, period. This is already true, and it’ll be many times more true in 2050. Automation is needed to filter and curate the data that humans see, and as time progresses the distinction between automation and AI/ML, at least in information technology, will continue to blur.

ML will have the biggest impact in answering questions like the following, at scale:

Was this action fraudulent?

Is this a legitimate transaction?

Was this action performed by the user it was associated with?

What is the weakest point of entry into this company or country?

Where should we attack?

Where should we anticipate attack?

Among these billions of vulnerabilities, what should we fix first?

What change should we make to improve outcome X the most?

A good definition of AI is what computers can’t yet do, which is always moving.

In short, ML will continue to become more like statistics, charts, databases, and computers—i.e., a standard way of solving problems in any organization. It’ll just blend into businesses in the same way that your business isn’t considered special for having a Postgres database. One big AI-based innovation step will be general AI, which may or may not happen in the timeframe we’re discussing.

The next aspect of this, which could have been put in the Technology section as well, is CCE, which stands for Continuous Chaos Engineering. The field is also known as AD, for Anti-fragile Design, but "AD" was too overloaded as an acronym, so people have been using CCE. The point of these processes is not only to continuously monitor to make sure things are in an ideal state, but to constantly add stress to the system, of different types, to ensure that the system can handle it.

SCs (Stress Campaigns) are constantly run by an arm of engineering, not only to ensure that we could survive these events if they happened, but to actually improve from what we learn. So if we send a massive amount of traffic to a key API endpoint as part of a new SC, and we see X amount of deterioration of the service, we might send an immediate request to boost that service’s on-hand service nodes.
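Here is a toy version of that Stress Campaign loop in Python. It is a sketch under loud assumptions: the latency formula is a made-up model rather than a measurement of any real service, and the function names, per-node capacity, and degradation threshold are all hypothetical.

```python
import math


def simulated_latency_ms(requests_per_sec: int, nodes: int) -> float:
    """Toy model (an assumption): latency rises sharply as per-node load
    approaches an assumed capacity of 1000 requests/sec per node."""
    capacity_per_node = 1000
    utilization = requests_per_sec / (nodes * capacity_per_node)
    if utilization >= 1.0:
        return math.inf  # saturated: the service effectively falls over
    return 20.0 / (1.0 - utilization)


def run_stress_campaign(
    baseline_rps: int, surge_rps: int, nodes: int, max_degradation: float
) -> dict[str, float]:
    """Send a surge, measure deterioration, and size the scale-up request.

    Returns the observed degradation factor (stressed / baseline latency)
    and how many extra nodes bring it back within max_degradation.
    """
    baseline = simulated_latency_ms(baseline_rps, nodes)
    stressed = simulated_latency_ms(surge_rps, nodes)
    extra = 0
    while simulated_latency_ms(surge_rps, nodes + extra) / baseline > max_degradation:
        extra += 1
    return {"degradation": stressed / baseline, "extra_nodes_requested": extra}
```

With a baseline of 1,000 requests/sec on 4 nodes and a surge to 3,500, this toy model reports roughly 6x deterioration and asks for 2 more nodes to stay under a 2x limit. A real campaign would replace the formula with live measurements, but the shape (stress, measure, request capacity) is the same.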

The operational teams monitoring the state of the IT infrastructure will face the challenge of not always knowing (though sometimes they will) whether an issue that takes a parameter out of compliance comes from an actual natural problem, a member of the offensive team trying to do something malicious, or a real attacker attempting an attack. Some teams will tag activity with various labels (OFFSEC, CHAOSOPS, UNKNOWN, ATTACKER22301, etc.) to help with that attribution, but part of operations will sometimes be having regular operators not know the difference.

Careers

Ok, cool. So what about people? What will it mean to be in security in like 2050? I think the answer is:

More Electrician-types (tradespeople connecting things according to documented specs)

More Data Analysts (statistics and ML background, combined with data visualization)

Security Executives become factory fore-people, i.e., overseeing an operational function, combined with broadcasters of narrative

There is still variation in electrician implementations, too.

So we’ll have millions of people employed to install and configure the various types of tools needed in a business. I think these tools might blur between the tools themselves vs. the security tooling. For example, if people come to install Salesforce they’ll be installing it and configuring it to plug into your internal data lake and brain. So, just like electricians, there will be well-known inputs, and well-known outputs, and it’ll just be a matter of figuring out where to put what.

In other words, when Allstate and the State of Massachusetts come to connect to your company’s IT Brain, called Cognito, provided by Databricks (now owned by Amazon like everything else), everyone will be using the same nozzles and connectors.

Careers will be more cleanly broken into functions like:

Installing things according to regulation and standard

Knowing the current configuration of a given system at all times

Analyzing the data from all systems to get that into dashboards

Evaluating dashboards to determine which changes to configuration should be made

Making configuration changes and evaluating their impact

Continuous monitoring of those dashboards to flag anomalies

Using AI/ML to make smarter and smarter recommendations based on the data available

Taking the output of data analysis and dashboards and turning that into narratives for partners, management, investors, insurers, regulators, etc.

If this sounds boring, yes, it will be. That’s the point. That’s what happens when you move from wizards to book-keepers. Wizards deal with the unknown. The arcane. It’s where few people know what’s happening, and everyone looks up to them. This is bad for business because it’s not repeatable.

Accounting is repeatable. Arithmetic is repeatable. And so will be identity, access control, logging—once we hit our late 20’s and early 30’s as an industry.

What does this mean for "jobs in security?"

It means you’re installing products in-house for a company. Or you’re installing products for Allstate, which means for Allstate’s customers.

Or you’re using data analytics and data science to look for patterns in the data in FooCorp’s Cognito install.

Or you’re a configuration validator who makes sure every product is installed to standard, with all inputs and outputs working correctly.

Or you’re an engineer working on improving the products themselves.

Or you’re an executive telling the data security story to your stakeholders.

Will there be OFFSEC people? Red team? Blue team? Absolutely. All of this still has cruft in it. Even though it’ll be 95% better than today, that 5% will still be able to cause havoc and the loss of a lot of money.

So there will still be some arcane magic users, but they will become fewer and fewer as time goes on. And they’ll increasingly need to be strong developers skilled with analysis and manipulation.

Distant Future

This gets fuzzier as we move forward, obviously. But let’s try to add some decades and see what changes the most.

I think the simplest answer is "fewer people". As the tech keeps improving, it also gets better at installing itself, continuously checking its own configuration, raising alarms when it’s not working, etc. That means fewer people required to do those pieces.

So it’s fewer and higher-skilled people doing data analysis on what the dashboards are telling us, and creating better dashboards, rather than doing the installs themselves. And then it’s fewer but higher-skilled executives taking that data into conversations with stakeholders.

And then of course there are the people working on the actual products. This will increasingly be elite work for the best programmers in the world since an increasing proportion of "basic development" will be within the capabilities of AI/ML by then. But again, remember that these people will largely be working for the technology companies, not for security companies. They’ll just be working on security features for Cognito, Salesforce, or for AWS’s equivalent at the time.

I anticipate there will still be security companies, but that they’ll largely be incubators for candidate security features for inclusion in the bigger IT products. So the company takes a copy of Cognito and its competitor, spends a few months working on a cool set of new security features, and then takes that to the market. And if the feature is strong, the big IT vendors will buy the code and/or the team.

In other words, I think much of the current security market is based on how poorly the industry does the basics. AWS exists because local IT within companies was a dumpster full of burning tires. Asset management companies exist because nobody knows what they have, and therefore what to defend. Endpoint companies exist because OS’s haven’t been great at identity, access control, and allow-listing applications and content. As those basics improve, that functionality moves back into the core products.

AWS will have asset management and continuous monitoring. Microsoft will have endpoint protection. Databricks will have data security built-in. This does raise the question of monopoly, and how companies will be inspired to innovate if they’re the consolidated big companies at the table, and I think the answer will have to be some combination of this feature development model for startups along with regulation that pushes higher and higher standards for organization protection.

I can easily see AWS being broken out relatively soon.

That’s a weird thing to type, actually, and it’s why I’m doing this exercise. As companies like Salesforce and AWS get bigger through success and acquisitions the incentive to innovate will eventually slow down. And furthermore, if you’re on AWS and they provide like everything for your company, it’s going to be pretty hard to migrate over to some new upstart’s offering if it’s in conflict with AWS in some way. The momentum to stay with AWS will continue to build because new companies won’t have the resources to offer similar stability or support.

A Future Example

Amaya works for Progressive, which is the main player in auto and Cyber Insurance.

She is an expert with Cognito, a formerly Databricks and now AWS tool for unifying all IT feeds (which include security) into a single place. It’s basically the brain of most enterprise IT infrastructures. She is going into a medium-sized business that’s having issues with their telemetry meeting insurance and government standards. In other words, not everything is configured correctly: logging to the right places, at the right verbosity levels, and at the right cadence. And it’s not all being sent to a single place.

She starts with the AWS infrastructure and applies a Cognito template that does a bunch of analysis (AI/ML stuff) to look at everything that exists, all of its existing settings, etc. She then selects a policy template that accounts for what Progressive is asking for, as well as the State of Michigan where the company resides, plus some additional asks from other stakeholders. That takes a few minutes to finish because it has to look at every setting in all of AWS and determine current state and end state.

She then launches CogniBot, which is a set of hundreds of thousands of AI-automated applications that spider and crawl and log into things dynamically given the credentials and authority she’s been given to do this project. This allows the bots to learn the delta between what they see in the configurations vs. what actually exists in the infrastructure. CogniBot systems coordinate to look at the external perimeter, internal access controls, listening services, all software versions, etc. Essentially the same thing the configuration tool looked at, but it’s doing it dynamically via active and passive probing.

These are left to run for anywhere from 3 days to a month, to listen on the network, to watch traffic patterns and other aspects of the business that aren’t as clear from static analysis, and to generally provide peace of mind that the migration to the new Cognito Template will not cause disruption to business.
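The delta the bots are after, declared configuration vs. what is actually observed, is simple to express. The Python sketch below is purely illustrative: Cognito and CogniBot are fictional, and the setting names are made up. It only shows the comparison itself.

```python
from __future__ import annotations


def config_delta(
    declared: dict[str, object], observed: dict[str, object]
) -> dict[str, tuple[object, object]]:
    """Return every setting whose declared and observed values differ.

    Each entry maps the setting name to (declared_value, observed_value).
    None marks a setting that is absent on that side, e.g. a listening
    service that probing found but no configuration mentions.
    """
    delta = {}
    for key in sorted(declared.keys() | observed.keys()):
        declared_value = declared.get(key)
        observed_value = observed.get(key)
        if declared_value != observed_value:
            delta[key] = (declared_value, observed_value)
    return delta


# Hypothetical inputs: what the static configuration says...
declared = {"tls_min_version": "1.2", "port_22_open": False, "bucket_public": False}
# ...versus what active and passive probing actually saw.
observed = {"tls_min_version": "1.2", "port_22_open": True, "redis_6379_listening": True}

drift = config_delta(declared, observed)
```

Here `drift` would contain three entries: SSH is open despite the config, a Redis listener exists that no config declares, and `bucket_public` could not be confirmed by probing at all.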

Upon her return, Amaya will review all the data with her team, meet with the customer, coordinate a time for the changes, and then begin migrating all AWS settings to the new policy. This will happen in a phased approach that Cognito’s intelligence came up with—including automated rollback if problems are detected during the process. Cognito will continuously monitor the state of business during the migration and ensure that all business operations continue to function throughout. They start with datastores, then move to network access, then to endpoint lockdown, etc.—all the way through the systems controlled by AWS.

This will change access controls, enabled protocols, storage settings, and pretty much every configuration option that has a bearing on Progressive’s and the State of Michigan’s controls, as defined in their templates.
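A phased rollout with automated rollback, like the one described above, might look roughly like this in Python. This is a sketch, not how any real product works: the `migrate` function, the phase tuples, and the `healthy` check are hypothetical names invented for the example.

```python
from __future__ import annotations

from typing import Callable

# A phase is (name, apply_fn, rollback_fn). All names are hypothetical.
Phase = tuple[str, Callable[[], None], Callable[[], None]]


def migrate(phases: list[Phase], healthy: Callable[[], bool]) -> list[str]:
    """Apply phases in order, checking business health after each one.

    If the health check fails, every phase applied so far is rolled back
    in reverse order and an empty list is returned. Otherwise the names
    of all applied phases are returned.
    """
    applied: list[tuple[str, Callable[[], None]]] = []
    for name, apply, rollback in phases:
        apply()
        applied.append((name, rollback))
        if not healthy():
            for _, undo in reversed(applied):
                undo()
            return []
    return [name for name, _ in applied]
```

The phases would be ordered the way the story describes (datastores, then network access, then endpoint lockdown), and `healthy` stands in for whatever "business operations continue to function" means for a given company.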

At the end of the migration, Amaya and her team look at the new Cognito dashboards, which show all the data and new telemetry flowing into the Amazon Databricks backend. They see that every cloud server, every application access, every authentication challenge, every endpoint action, etc. is being logged into Cognito, and that the company is currently sitting at 94% compliance. It appears that the policy rollout could not reach some percentage of endpoints because they were hard-powered-down, and the team is waiting for them to re-associate.

Her team will continue to monitor this, and now they start thinking about the next project: Salesforce. Terabytes of data are being created for this company per week within the system, but the storage configuration and logging are not compliant with the requirements of 7 different states, Ireland, Iceland, or Papua New Guinea. Or with Progressive’s, which is why she’s been assigned to the project.

So now she’s going to take the Cognito templates for all those jurisdictions and run them against the petabytes of data for this customer, as well as every setting that pertains to protecting that data, to produce the configuration changes needed to get it compliant. Most importantly, this will not only lock down all those settings but also enable verbose logging for all events and send it all to the Cognito instance within the company.

Months later, Tariq is enjoying his new position as head of security for the company, and he’s having his coffee while looking at the Cognito dashboards. Everything is running within appropriate tolerances, and has been for several weeks. There are occasional Orange or Red events where configurations go out of compliance, or where an object fails to report in with telemetry, but those are quickly accounted for by the massive operational team they run.

This team essentially watches the Cognito dashboards and takes corrective action whenever anything goes out of compliance, followed up by the RCA team taking an action item to ensure that never happens again.

Summary

As our industry moves from our pre-teens to our 20’s and 30’s (2030-2050?), we’ll transition from Wizards to Accountants.

Much of this will center around doing the basics of asset management, identity, access control, and logging well.

AI and Automation will remove the need for a lot of the manual work of installing and monitoring products.

We will soon see large, monolithic, ML-powered products that take in all telemetry from everything, and produce unified dashboards.

Insurance and Regulation will push towards this, driving the standardization and operationalization of InfoSec.

Security will increasingly blend into engineering, both at the technical level and within organizational charts.

As part of operationalization, the concepts of resilience and antifragility will become major considerations.

The mid-game (who knows what endgame is) involves a massive operations team monitoring centralized dashboards and responding when things go out of tolerance. Not security dashboards. Company dashboards. Which include security and lots of other types of metrics and risk-levels that need to be kept within tolerances.

Notes

One thing that detracts from the factory metaphor is that factories generally account for first-order chaos, or static threats like equipment failure. Cyber is different because the attacker knows the current state of their abilities as well as defenses, and can modify their behavior accordingly. I think this will slow, but not stop, the inevitable march towards boring dashboard InfoSec.

Credit to Caleb Sima for some enlightening seed thoughts on the integration of security and engineering.

Ironically, heavy automation seems to open the door even more for OFFSEC and Blue Team, because the more we blindly depend on autonomous technology the more vulnerable we could become to everything suddenly breaking, either by accident or on purpose.

Image from Langara.