Secrecy (Obscurity) is a Valid Security Layer

Many of us are familiar with a concept known as Security by Obscurity. The term has negative connotations within the infosec community, usually for the wrong reasons. There’s little debate that security by obscurity is bad, and for good reason: it means the hidden secret is the key to the entire system’s security.

Watch a video presentation of me making a similar argument.

When added to a system that already has decent controls in place, however, obscurity not only doesn’t hurt you but can be a strong addition to an overall security posture.

Good obscurity vs. bad obscurity

The key determination for whether obscurity is good or bad reduces to whether it’s being used as a layer on top of good security or as a replacement for it. The former is good. The latter is bad.

An example of security by obscurity is when someone has an expensive house outfitted with the latest lock system, but the lock opens if you simply jiggle the handle. If you don’t know to do that, it’s pretty secure, but once you know, it’s trivial to bypass.

That’s security by obscurity: if the secret ever gets out, it’s game over. The concept comes from cryptography, where it’s utterly sacrilegious to base the security of a cryptographic system on the secrecy of the algorithm.
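In cryptography this is known as Kerckhoffs’s principle: a system should stay secure even if everything about it except the key is public. Here is a minimal Python sketch of what that looks like in practice, using the standard library’s HMAC-SHA256. The algorithm is published and standardized, and the only secret is the key. The randomly generated `SECRET_KEY` and the helper names `sign` and `verify` are illustrative, not taken from any particular system.

```python
import hashlib
import hmac
import secrets

# The algorithm (HMAC-SHA256) is public; only this key is secret.
# Hypothetical key, generated fresh each run for this sketch.
SECRET_KEY = secrets.token_bytes(32)


def sign(message: bytes, key: bytes = SECRET_KEY) -> str:
    """Return a hex MAC for the message using the public HMAC-SHA256 algorithm."""
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def verify(message: bytes, tag: str, key: bytes = SECRET_KEY) -> bool:
    """Check a tag with a constant-time comparison so timing doesn't leak how much matched."""
    return hmac.compare_digest(sign(message, key), tag)


if __name__ == "__main__":
    tag = sign(b"open the door")
    print(verify(b"open the door", tag))            # should be True with the right key
    print(verify(b"open the door", tag, key=b"x"))  # should be False: knowing the algorithm isn't enough
```

Publishing this code costs nothing. An attacker who reads it still can’t forge a tag without the key, which is exactly the property the jiggling-handle lock lacks.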

Camouflage

A powerful example of obscurity being used to improve security is camouflage. Consider an armored tank such as the M-1. The tank is equipped with some of the most advanced armor ever created, and that armor has been shown repeatedly to be effective in real-world battle.

So, given this highly effective armor, would the danger to the tank somehow increase if it were to be painted the same color as its surroundings? Or how about in the future when we can make the tank completely invisible? Did we reduce the effectiveness of the armor? No, we didn’t. Making something harder to see does not make it easier to attack if or when it is discovered. This is a fallacy that simply must end.

OPSEC

OPSEC is an even better example because nobody serious in infosec doubts its legitimacy. But what is OPSEC? Wikipedia defines it as:

So basically, protecting information that can be used by an enemy. Like, where you are, for example, or what you’re doing. There are lots of examples:

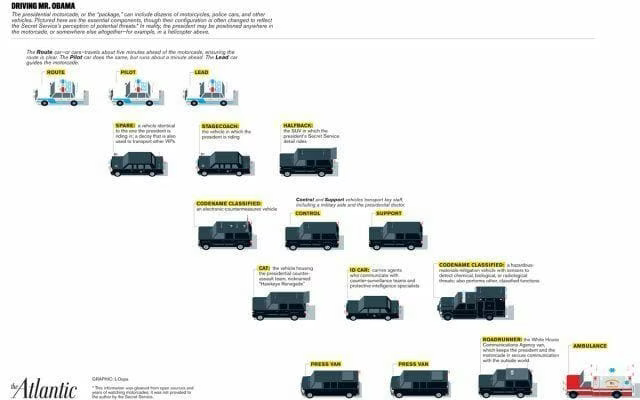

There are usually one or more decoy limos and helicopters traveling alongside the ones carrying the president, so that an enemy can’t be sure which one to attack.

When you do executive protection or military maneuvers, you generally want to keep your movement plans as private as possible to avoid giving the enemy an advantage.

People are encouraged to vary their routes to and from unsafe locations so that potential attackers won’t know exactly where to attack them.

These are all about controlling and restricting information or, put another way, obscuring it. If that were such a bad practice, it wouldn’t be used every day by the world’s militaries, the Secret Service, executive protection teams, and anyone else who knows basic security operations.
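The arithmetic behind decoys and random routes is simple. If an attacker can’t tell the real target apart from equally plausible alternatives and has to pick one, the chance of picking the real one shrinks with every alternative added. The Python sketch below assumes the attacker guesses uniformly at random, which is a simplification because real attackers may have other clues. `hit_probability` is a hypothetical helper name.

```python
from fractions import Fraction


def hit_probability(decoys: int) -> Fraction:
    """Chance that a uniformly guessing attacker picks the real target among decoys + 1 options."""
    if decoys < 0:
        raise ValueError("decoys must be non-negative")
    return Fraction(1, decoys + 1)


for decoys in range(4):
    print(f"{decoys} decoy(s): {hit_probability(decoys)}")
```

The decoys don’t make the real limo’s armor any weaker. They only reduce how often that armor gets tested.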

When the goal is to reduce the number of successful attacks, starting with solid, tested security and adding obscurity as a layer does improve the overall security posture. Camouflage accomplishes this on the battlefield, decoys accomplish it when traveling with VIPs, and port knocking and Single Packet Authorization (PK/SPA) accomplish it when protecting hardened services.
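Port knocking is a good illustration of obscurity layered on top of real controls. The SSH port stays closed until a client connects to a secret sequence of other ports, but whoever gets through still faces SSH’s normal authentication. Below is a simplified Python sketch of the knock-tracking logic. It assumes a hypothetical three-port sequence and a hypothetical `KnockTracker` class, and it doesn’t actually touch the firewall. It also covers only classic port knocking, not Single Packet Authorization, which replaces the sequence with a single cryptographically authenticated packet.

```python
from dataclasses import dataclass, field

# Hypothetical secret knock sequence; a real deployment would choose its own.
KNOCK_SEQUENCE = (7000, 8000, 9000)


@dataclass
class KnockTracker:
    sequence: tuple[int, ...] = KNOCK_SEQUENCE
    progress: dict[str, int] = field(default_factory=dict)  # source IP -> knocks matched so far
    allowed: set[str] = field(default_factory=set)           # IPs that completed the sequence

    def knock(self, src_ip: str, port: int) -> None:
        """Record a connection attempt. A wrong port resets that source's progress."""
        step = self.progress.get(src_ip, 0)
        if port == self.sequence[step]:
            step += 1
        else:
            # Start over, but count this knock if it happens to be the first one in the sequence.
            step = 1 if port == self.sequence[0] else 0
        if step == len(self.sequence):
            # In a real setup, this is where the SSH port would be opened for src_ip only.
            self.allowed.add(src_ip)
            step = 0
        self.progress[src_ip] = step

    def may_connect(self, src_ip: str) -> bool:
        """Whether this source has knocked correctly. SSH still authenticates it normally."""
        return src_ip in self.allowed
```

Usage: calling `knock("192.0.2.1", p)` for each port in `(7000, 8000, 9000)` makes `may_connect("192.0.2.1")` return `True`, while a scanner hitting port 22 directly never gets in. The sequence is the obscurity layer. SSH’s own authentication is still the real security underneath it.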

An SSH Example

Of course, being scientific types, we like to see data. In that vein, I decided to test the idea using the SSH daemon (full results here).

I configured my SSH daemon to listen on port 24 in addition to its regular port, 22, so I could compare the number of connection attempts to each (these are usually password-guessing attempts). I expected far fewer attempts on port 24 than on port 22, which I equate to less risk to my SSH daemon, or anyone’s.

Setup for the testing was easy: I added a Port 24 line to my sshd_config file, and then added some logging to my firewall rules for ports 22 and 24.
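If the firewall logging is done with the Linux netfilter `LOG` target, each logged packet produces a kernel log line containing a `DPT=` (destination port) field. Here is a rough Python sketch for tallying logged attempts per port under that assumption. It also assumes only new connections are logged, so that one line equals one attempt. The sample lines are made up for illustration, and `count_by_port` is a hypothetical helper.

```python
import re
from collections import Counter
from typing import Iterable

# Matches the destination-port field that netfilter's LOG target writes, e.g. "DPT=22".
DPT_RE = re.compile(r"\bDPT=(\d+)\b")


def count_by_port(lines: Iterable[str], ports: set[int]) -> Counter:
    """Count log lines whose destination port is in `ports`.

    Assumes one log line per connection attempt (e.g., only new connections are logged).
    """
    counts: Counter = Counter()
    for line in lines:
        match = DPT_RE.search(line)
        if match and int(match.group(1)) in ports:
            counts[int(match.group(1))] += 1
    return counts


if __name__ == "__main__":
    sample = [  # hypothetical log lines, trimmed to the relevant fields
        "kernel: SSH: IN=eth0 SRC=203.0.113.7 PROTO=TCP SPT=51234 DPT=22 SYN",
        "kernel: SSH: IN=eth0 SRC=198.51.100.9 PROTO=TCP SPT=40022 DPT=22 SYN",
        "kernel: SSH: IN=eth0 SRC=192.0.2.44 PROTO=TCP SPT=33333 DPT=24 SYN",
        "kernel: WEB: IN=eth0 SRC=192.0.2.50 PROTO=TCP SPT=44444 DPT=443 SYN",
    ]
    print(count_by_port(sample, {22, 24}))
```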

I ran with this alternate port configuration for a single weekend, and received over eighteen thousand (18,000) connections to port 22, and five (5) to port 24.

That’s 18,000 to 5.
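As a ratio, that works out to roughly 3,600 attempts on port 22 for every one on port 24. Below is a trivial Python check of the arithmetic using the weekend’s counts. 18,000 is treated as a lower bound because the actual count was “over” that.

```python
port_22_attempts = 18_000  # "over eighteen thousand" in one weekend; treated as a lower bound
port_24_attempts = 5

# How many attempts hit port 22 for each one that hit port 24.
ratio = port_22_attempts / port_24_attempts
# Fraction of all logged attempts that landed on the non-standard port.
share_on_24 = port_24_attempts / (port_22_attempts + port_24_attempts)

print(f"{ratio:,.0f} to 1")
print(f"{share_on_24:.3%} of attempts hit port 24")
```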

Let’s say there’s a new zero-day out for OpenSSH that’s owning boxes with impunity. Is anyone willing to argue that someone unleashing that attack would be just as likely to launch it against a non-standard port as against port 22? If not, then your risk goes down by not being there. It’s that simple.

Reducing Impact or Probability

Another foundational way to look at this is through the lens of risk, whereby it can be calculated as:

risk = probability × impact

This means you lower risk (and increase security) by doing one of two things:

Reducing the probability of being attacked, or…

Reducing the impact if you are attacked.
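The following minimal Python sketch implements the formula with made-up numbers on arbitrary scales. Probability is a 0 to 1 chance per period, and impact is in hypothetical cost units. The point is that cutting either factor lowers risk by the same proportion.

```python
def risk(probability: float, impact: float) -> float:
    """risk = probability x impact, per the simple model above."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    return probability * impact


baseline = risk(probability=0.20, impact=100.0)       # hypothetical starting point
less_likely = risk(probability=0.10, impact=100.0)    # e.g., hiding: halve the probability
less_damaging = risk(probability=0.20, impact=50.0)   # e.g., armor: halve the impact

print(baseline, less_likely, less_damaging)
```

Both changes take risk from 20 to 10 in this toy example. The model doesn’t care which factor you reduce, only by how much.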

Adding armor, or getting a better lock, or learning self-defense, are all examples of reducing the impact of an attack. On the other side, hiding your SSH port, rotating your travel plans, and using decoy vehicles are examples of reducing your chances of being hit.

The key point is that both methods improve security. The real question is which one you should focus on at any given point. Is adding obscurity the best use of my resources given the controls I already have in place, or would I be better off adding a different (non-obscurity-based) control?

That’s a fair question. If you can switch from passwords to keys, for example, that’s likely to be more effective than moving your port. But once impact reduction reaches the point of diminishing returns, it’s likely to become a good idea to reduce probability as well.
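One way to frame the “best use of resources” question is to compare candidate controls by how much risk each removes per unit of effort. The Python sketch below does this with invented control names, multipliers, and costs, so it shows the shape of the reasoning rather than real estimates. Each control scales either probability or impact by a factor, and a hypothetical greedy `plan` function picks the best remaining control at each step.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Control:
    name: str
    probability_factor: float  # multiplier on probability (1.0 = no change)
    impact_factor: float       # multiplier on impact (1.0 = no change)
    cost: float                # hypothetical effort/cost units


def risk_reduction_per_cost(c: Control, probability: float, impact: float) -> float:
    """Risk removed by applying control c at the current state, divided by its cost."""
    before = probability * impact
    after = (probability * c.probability_factor) * (impact * c.impact_factor)
    return (before - after) / c.cost


def plan(controls: list[Control], probability: float, impact: float, steps: int) -> list[str]:
    """Greedily apply the control with the best risk reduction per cost, one step at a time."""
    remaining = list(controls)
    chosen: list[str] = []
    for _ in range(min(steps, len(remaining))):
        best = max(remaining, key=lambda c: risk_reduction_per_cost(c, probability, impact))
        chosen.append(best.name)
        probability *= best.probability_factor
        impact *= best.impact_factor
        remaining.remove(best)
    return chosen


candidates = [  # all numbers hypothetical
    Control("switch SSH from passwords to keys", 1.0, 0.1, 1.0),
    Control("move SSH to a non-standard port", 0.3, 1.0, 1.0),
    Control("additional impact-reduction hardening", 1.0, 0.8, 1.0),
]

print(plan(candidates, probability=0.2, impact=100.0, steps=2))
```

With these made-up numbers, the greedy pick is keys first. Once keys have cut the impact, moving the port removes more of the remaining risk than another impact-reduction step does, which is the diminishing-returns point above.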

Summary

Security through obscurity is bad because it substitutes secrecy for real security, so anyone who learns the trick can compromise the system.

Obscurity can be extremely valuable when added to actual security as an additional way to lower the chances of a successful attack, e.g., camouflage, OPSEC, etc.

The key question to ask is whether you’re better served by adding more impact reduction (armor, locks, etc.) or more probability reduction (hiding, obscuring, etc.).

Most people who instinctively go to "obscurity is bad" are simply regurgitating something they heard a long time ago and think makes them sound smart.

Don’t listen to them. Think through the ideas yourself.