Inference Costs Are Not Sustainable

Welp, I'm now getting through a quarter of my week's MAX subscription in a few hours of work with Claude Code.

I think Anthropic is smart, and I don't think they're trying to screw us. I think they're honestly just trying to bring inference charges in line with reality.

And that should be a wake-up call for all of us.

I think we're about to need multi-model harnesses, or FAR cheaper good models within a single platform, like a 20x cheaper Haiku or whatever.

This is not sustainable.

The better the harnesses and models get, the more people will build, which will require more and more inference.

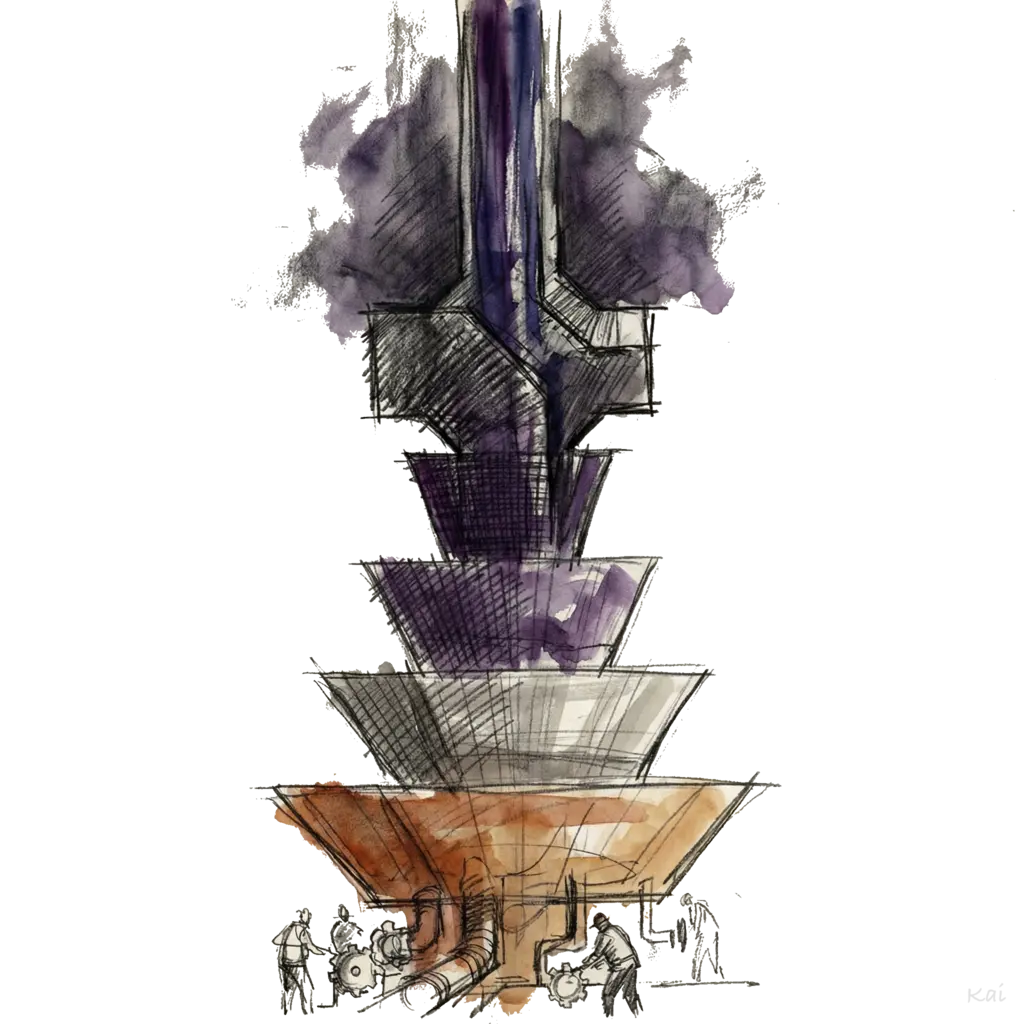

I think the real solutions here are going to come from:

- Technologies like Cerebras et al. that make inference many times cheaper and faster

- A major push by the major labs to produce higher quality in much smaller/cheaper models

- Harnesses moving to a hybrid of paid/cloud and local/cheap models

If this continues, I'm going to have to build my own custom version of PAI using Pi that can use local models on my dual 4090s, models like Gemini Flash, models like Gemma 4, etc.

And most importantly, it needs a new hook infra that rates each task and routes it to the right model:

- Max: Opus / GPT-5.4

- High: Sonnet

- Medium: Haiku

- Low: (Local) Whatever the latest best OSS model is that can run on my NVIDIA / Apple Silicon hardware
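Here's a rough sketch of what that kind of rate-then-route hook could look like. Everything specific in it is an assumption for illustration: the `Task` signals (`files_touched`, `needs_planning`), the scoring thresholds, and the model labels are placeholders, not real API model IDs and not how any existing harness does it. A real rater would probably use a cheap model as the judge instead of hand-written rules.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    LOW = 0
    MEDIUM = 1
    HIGH = 2
    MAX = 3


# Tier -> model label, mirroring the list above.
# These are placeholder labels, not real API model identifiers.
MODEL_FOR_TIER = {
    Tier.MAX: "opus",
    Tier.HIGH: "sonnet",
    Tier.MEDIUM: "haiku",
    Tier.LOW: "local-oss",
}


@dataclass
class Task:
    prompt: str
    files_touched: int = 0        # hypothetical signal supplied by the harness
    needs_planning: bool = False  # hypothetical signal supplied by the harness


def rate_task(task: Task) -> Tier:
    """Toy heuristic: add up rough difficulty signals and cap at MAX."""
    score = 0
    if task.needs_planning:
        score += 2
    if task.files_touched > 5:
        score += 1
    if len(task.prompt) > 2000:
        score += 1
    return Tier(min(score, Tier.MAX))


def route(task: Task) -> str:
    """Return the model label for the tier this task was rated at."""
    return MODEL_FOR_TIER[rate_task(task)]


if __name__ == "__main__":
    print(route(Task("rename a variable")))
    print(route(Task("refactor the auth module", files_touched=10, needs_planning=True)))
```

The point of keeping the rater separate from the tier-to-model table is that you can swap out either one: change which model sits at each tier as prices move, or replace the heuristics without touching the routing.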

I think we all knew this was coming; I just thought it would be in 2027 sometime. And more gentle.

It appears to be very close now because this much subsidization doesn't seem sustainable for Anthropic, which means it's probably not sustainable for OpenAI either.

My recommendation: Start planning your Multi / Local / Cheaper model strategy for your harness.