The Most Interesting (Disallowed) Directories in the World

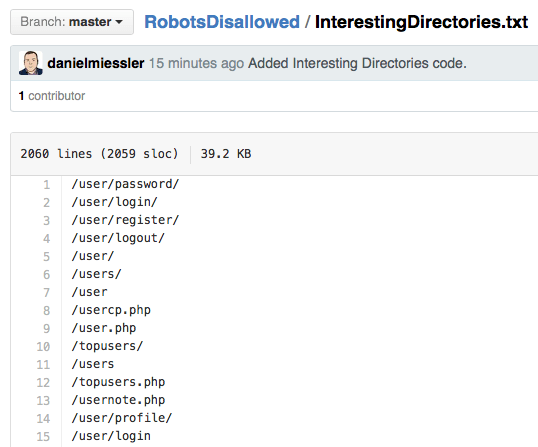

I just published RobotsDisallowed, a GitHub project that finds the most common Disallowed entries in the robots.txt files of the world’s top 100,000 websites.

I’ve broken it down into Top-n lists that pull out the top 10, 1000, 10000, etc. directories listed, in case you’re pressed for time on your assessment.
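To make the Top-n idea concrete, here is a minimal Python sketch of how such a list could be built from a pile of already-downloaded robots.txt bodies. It is not the project's actual code: the function names `disallowed_paths` and `top_disallowed` are hypothetical, and counting each path at most once per site is my assumption.

```python
from collections import Counter


def disallowed_paths(robots_txt: str) -> list[str]:
    """Return the path of every non-empty Disallow line in one robots.txt body."""
    paths = []
    for line in robots_txt.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        field, _, value = line.partition(":")
        if field.strip().lower() == "disallow" and value.strip():
            paths.append(value.strip())
    return paths


def top_disallowed(robots_files: list[str], n: int) -> list[tuple[str, int]]:
    """Return the n most common Disallow paths across many robots.txt bodies."""
    counts = Counter()
    for body in robots_files:
        # Assumption: count a path once per site so one noisy file cannot dominate.
        counts.update(set(disallowed_paths(body)))
    return counts.most_common(n)
```

Calling `top_disallowed(bodies, 1000)` on a list of robots.txt strings would give the equivalent of a Top-1000 list, paired with how many sites listed each path.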

But I just added the best list of them all: the InterestingDirectories.txt list. This is a list of the directories from the Top 100K Disallowed entries that have the following words in them:

- user
- pass
- secret
- code
- admin
- source
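The filter behind a list like this can be sketched in a few lines of Python. This is an illustration only, not the project's actual code: the `interesting` function is a hypothetical name, and matching case-insensitively on substrings is my assumption.

```python
# The six words listed above.
KEYWORDS = ("user", "pass", "secret", "code", "admin", "source")


def interesting(paths, keywords=KEYWORDS):
    """Keep paths that contain any keyword, preserving the input order."""
    # Assumption: substring match, ignoring case.
    return [p for p in paths if any(k in p.lower() for k in keywords)]
```

Feeding it the paths from any of the Top-n lists would narrow them down to the entries most likely to be worth a look first.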

The other lists are great to have, but if you’re looking to find the highest value hits in the shortest amount of time, this is probably the list to use.

[ InterestingDirectories.txt ]

Notes

The RobotsDisallowed project is hosted on GitHub.

The purpose of this project is to help legitimate web testers find vulnerabilities before the bad guys do. Protip: the bad guys are already doing this.

Improvement ideas welcome! I’ll put you in the credits. Among other things, I’m looking for more good strings to improve the InterestingDirectories list.