Bitter Lesson Engineering

I have a new concept called Bitter Lesson Engineering (BLE) that I'm using everywhere in my AI engineering.

The idea comes from Richard Sutton's essay, "The Bitter Lesson".

The essay argues that our human attempts to control, modify, and enhance AI are mostly not worth it, because making the AI itself more capable (through more compute, better general-purpose algorithms, or whatever) improves results far more than anything we can do with our hand-crafted approaches.

It's stronger than that actually. Not only will it not be better if we try to help, but it will likely be far worse.

Essentially, we should avoid poisoning AI's native capabilities with our supposedly superior guidance, because it's not actually superior.

Some quotes from the essay:

"The biggest lesson that can be read from 70 years of AI research is that general methods that leverage computation are ultimately the most effective, and by a large margin."

"We want AI agents that can discover like we can, not which contain what we have discovered."

"We should build in only the meta-methods that can find and capture this arbitrary complexity."

"Building in our discoveries only makes it harder to see how the discovering process can be done."

This is about more than just agentic engineering

This is obviously super important for people who are building AI, but it goes way beyond that.

Anything you are doing with AI, where you are asking AI to help you, needs to follow the BLE principle.

LIFE MANAGEMENT

If you're trying to get help managing your life, like managing routines, improving your finances, etc., don't give it a bunch of your own accumulated methodologies and tell it to implement them. Instead, articulate exactly the life you want to have.

BUSINESS

If you're getting help starting a business, don't tell it how to help you: tell it the business you want to build and the life you want to have.

GENERAL AI INTERACTION

Focus less on the steps of execution and focus more on the results you want and don't want.
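Here's a rough sketch of what an outcome-only request could look like in code. The `Brief` class and its field names are hypothetical, made up for illustration, and the example values are invented. The point is only that the prompt carries a goal, wanted results, and unwanted results, and no execution steps.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Brief:
    """Hypothetical outcome-only request: the "what", with no "how"."""
    goal: str
    want: List[str] = field(default_factory=list)
    dont_want: List[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        # Render outcomes only; deliberately no list of steps to follow.
        lines = [f"Goal: {self.goal}", "", "Results I want:"]
        lines += [f"- {item}" for item in self.want]
        lines += ["", "Results I don't want:"]
        lines += [f"- {item}" for item in self.dont_want]
        lines += ["", "Choose the approach yourself."]
        return "\n".join(lines)


# Invented example values, for illustration only.
brief = Brief(
    goal="Get my personal finances onto a stable footing within a year",
    want=["Six months of expenses saved", "Under an hour a month of upkeep"],
    dont_want=["Giving up travel entirely", "Anything that needs daily tracking"],
)
prompt = brief.to_prompt()
print(prompt)
```

Notice there's nowhere in the structure to put a methodology, which is the whole idea.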

My takeaways

So here's what I recommend you take from all this.

- The way we think about logic and intelligence and efficiency is very likely primitive

- So we shouldn't be hard-coding those rules or ideas into how we "teach" AI to do things

- As AI gets smarter it'll come up with way better ways to do the same thing from first principles

So my simple BLE rule for myself when building AI systems, or really doing anything with AI going forward, is:

Don't confuse the "what" with the "how".

Be extremely specific about what you want, and then give the best tools you have to the best AI you have, and let it figure out how to execute.

This means as the AI gets smarter, our scaffolding becomes more about preferences than execution, ultimately making our entire system meta-upgradeable instead of BLE-hobbled.
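One way to keep scaffolding from going stale is to separate preferences from execution hints and periodically test whether each hint still helps. The sketch below is a minimal illustration under assumptions: `evaluate` is a hypothetical function you would supply that runs your AI on a fixed set of tasks and returns a score (higher is better), and `toy_evaluate` is a made-up stand-in so the example runs.

```python
from typing import Callable, List, Sequence

# Hypothetical evaluator type: takes the full instruction list and returns
# a quality score, where higher is better.
Evaluate = Callable[[Sequence[str]], float]


def drop_stale_hints(
    evaluate: Evaluate, preferences: Sequence[str], hints: Sequence[str]
) -> List[str]:
    """Keep every preference; keep a hint only if removing it lowers the score."""
    kept = list(hints)
    for hint in hints:
        without = [h for h in kept if h != hint]
        if evaluate([*preferences, *without]) >= evaluate([*preferences, *kept]):
            kept = without  # the hint no longer helps, so retire it
    return [*preferences, *kept]


def toy_evaluate(instructions: Sequence[str]) -> float:
    # Made-up scoring: one hint hurts, one hint helps.
    score = 1.0
    if "Parse with regex first" in instructions:
        score -= 0.5
    if "Run the tests before answering" in instructions:
        score += 0.3
    return score


result = drop_stale_hints(
    toy_evaluate,
    preferences=["Be concise"],
    hints=["Parse with regex first", "Run the tests before answering"],
)
print(result)
```

This is a greedy pass, so the outcome can depend on the order of the hints. The part that matters is the split: preferences always survive, and execution hints have to keep earning their place as the model improves.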

Notes

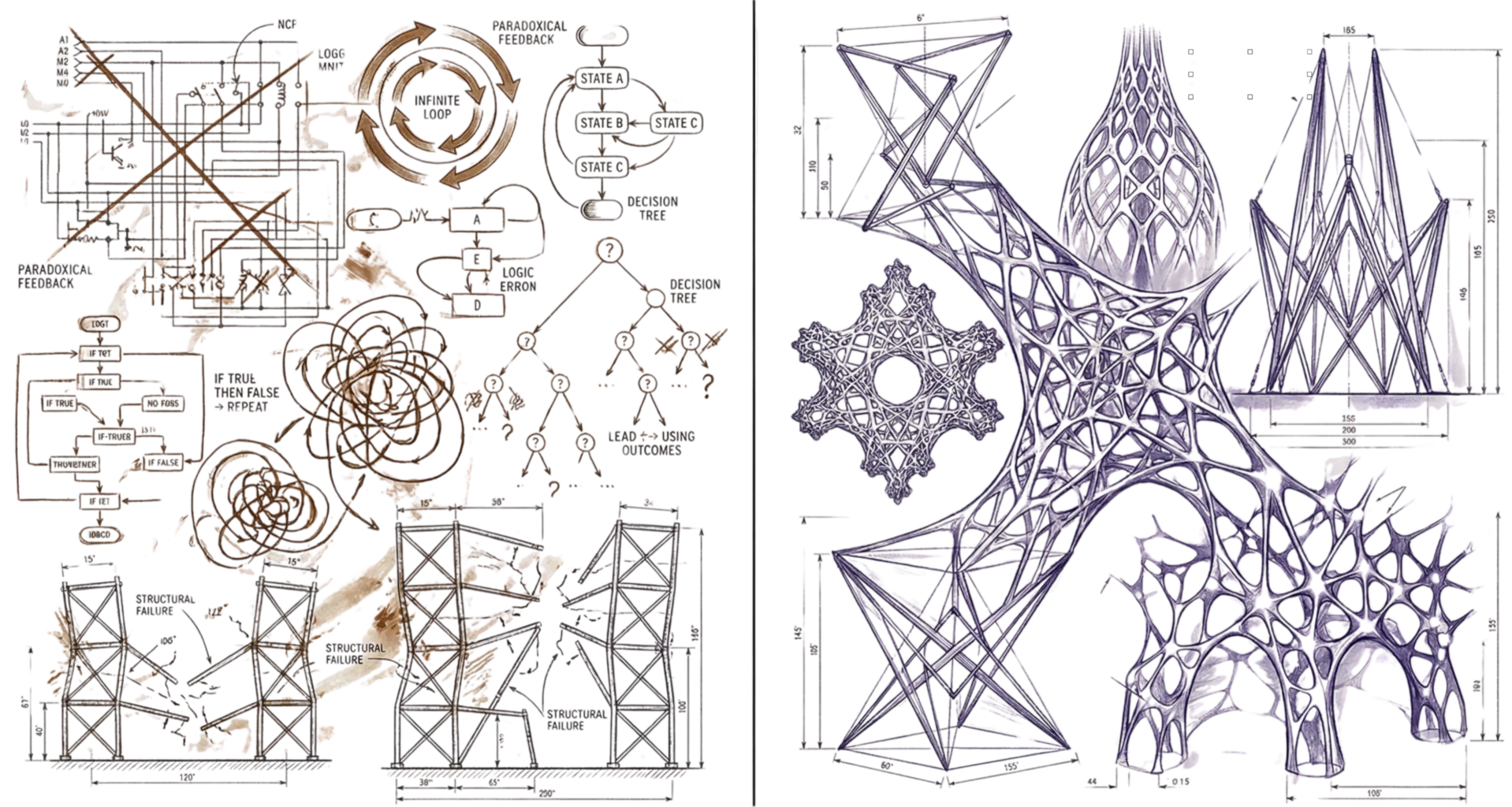

- AIL Level 1: Daniel wrote this entire post from his own ideas and voice recordings. I (Kai, his DA) helped with formatting and generating the header image.

- Citation: Richard Sutton, "The Bitter Lesson", March 13, 2019.

- A BLE-hobbled system is one where the scaffolding has aged to the point of making your overall system worse instead of better. Past that point, the AI could actually do a better job if it didn't have to follow our super-smart, dumb instructions.

- I also build BLE into the PAI project through an AISTEERING rule.

- The magic combination going forward is the best AI, with the highest quality context about you, that has access to the best tools.