What Happens When AI Stops Being Artificially Cheap

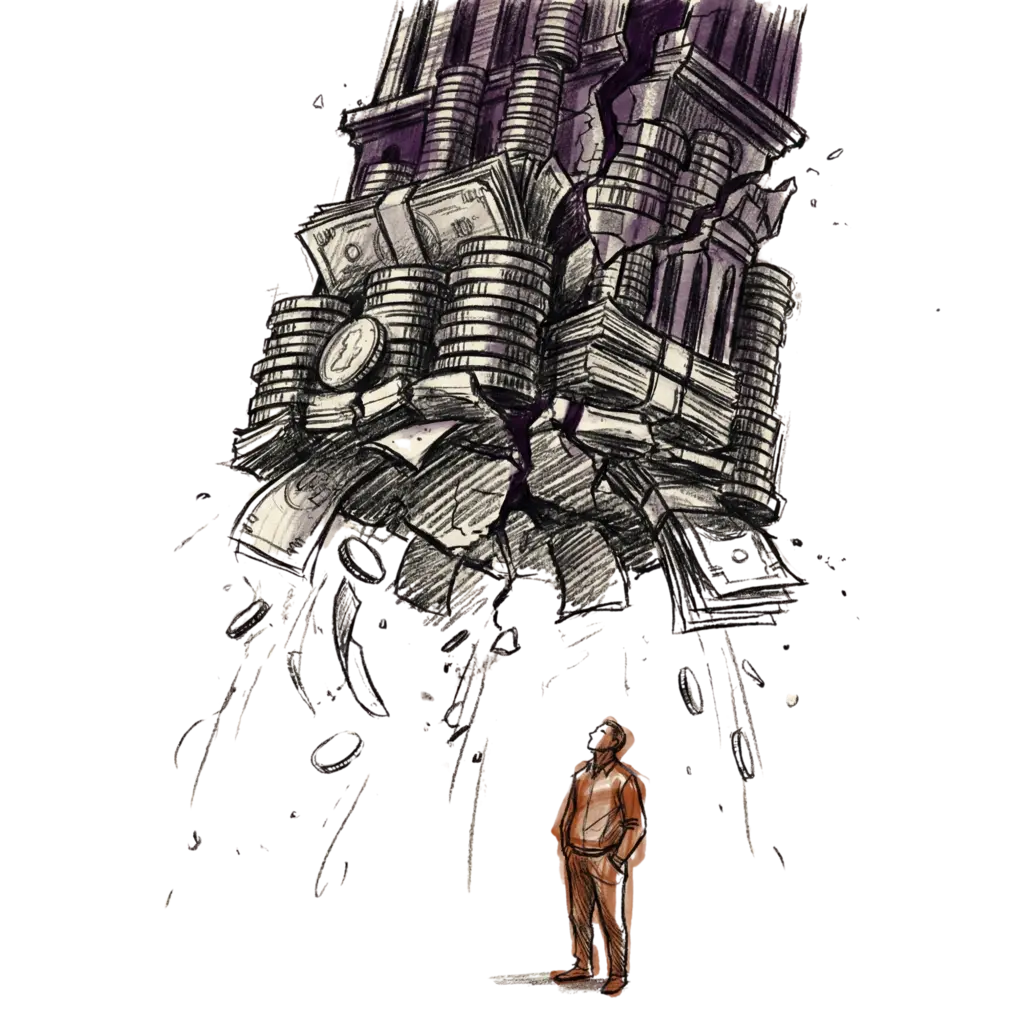

I've been thinking about what happens when AI inference costs stop being subsidized. Every major lab is losing money on inference right now, and that's going to change. I don't know exactly how it plays out, but here's where my head is at.

Good enough is good enough. Most tasks people use AI for don't need frontier models. Writing summaries, extracting data, answering questions, drafting emails: none of that requires the smartest model available. My rough estimate is that tasks like these make up 95% of real-world usage. The top 5% (hard research, complex reasoning, genuinely creative work) still needs frontier. But that's not what most people are doing.

Open-source absorbs the work. A lot of that 95% shifts to open-source models that are small and virtually free to run. Open-weight models now lag frontier closed models by about 3.5 months on average, per Epoch AI. Three and a half months. That gap keeps shrinking.

There's still a vast amount of slack in the rope. I've been saying this since 2023, and it keeps being true. I don't think we've come close to finding out how efficient inference can get. My guess is we're at 1-5% of the efficiency we'll reach over the next decade, which implies 20x to 100x of headroom, and even that might be orders of magnitude too conservative.
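To make that guess concrete, here is a small back-of-the-envelope sketch of what "1-5% of eventual efficiency" implies. The function names and the ten-year horizon are my own illustrative choices, and the 1-5% figure is a guess, not a measurement.

```python
def implied_headroom(current_fraction: float) -> float:
    """Total improvement still available if today's efficiency is
    `current_fraction` of the eventual level (e.g. 0.01 for 1%)."""
    if not 0.0 < current_fraction <= 1.0:
        raise ValueError("current_fraction must be in (0, 1]")
    return 1.0 / current_fraction


def annualized_gain(total_gain: float, years: float) -> float:
    """Constant yearly multiplier that compounds to `total_gain` over `years`."""
    if total_gain <= 0 or years <= 0:
        raise ValueError("total_gain and years must be positive")
    return total_gain ** (1.0 / years)


# Assumed inputs: the 1% and 5% guesses from the text, spread over a decade.
for pct in (1, 5):
    gain = implied_headroom(pct / 100)
    yearly = annualized_gain(gain, years=10)
    print(f"At {pct}% today: {gain:.0f}x headroom, about {yearly:.2f}x per year")
```

Spread over ten years, even the 100x case works out to well under 2x per year. That is far slower than the annual declines reported in the sources at the end, which is why I think the guess could be too conservative.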

Frontier gets more expensive, everything else gets cheaper. I expect a price jump at the top tier because the labs can't keep giving that away. But lower-tier cloud models — Haiku, the nano models, Flash — will compete hard with open-source on price. They have to, because losing that traffic means losing the customer.

What humans want doesn't change that fast. The workflows and tasks most people need are largely static. The top 5% of requirements might stay expensive. But most human tasks land in the bottom 95%, and I expect that to be very affordable.
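Here is a rough sketch of why the split could still leave most people better off even if frontier prices jump. Every price below is a hypothetical placeholder in dollars per million tokens, not a quote from any provider, and the 5% frontier share is the guess from this post.

```python
def blended_price(frontier_share: float,
                  frontier_price: float,
                  cheap_price: float) -> float:
    """Average price per million tokens when `frontier_share` of the
    workload goes to a frontier model and the rest to a cheap tier."""
    if not 0.0 <= frontier_share <= 1.0:
        raise ValueError("frontier_share must be between 0 and 1")
    return frontier_share * frontier_price + (1.0 - frontier_share) * cheap_price


# Hypothetical prices (USD per million tokens), chosen only for illustration.
SUBSIDIZED_FRONTIER = 10.00    # assumed frontier price during the subsidy era
UNSUBSIDIZED_FRONTIER = 30.00  # assumed frontier price after a 3x jump
CHEAP_TIER = 0.10              # assumed small cloud or open-weight model price

# Scenario A: everything runs on a subsidized frontier model.
all_frontier = blended_price(1.0, SUBSIDIZED_FRONTIER, CHEAP_TIER)

# Scenario B: frontier price triples, but only 5% of the work needs it.
split = blended_price(0.05, UNSUBSIDIZED_FRONTIER, CHEAP_TIER)

print(f"All on subsidized frontier: ${all_frontier:.2f} per million tokens")
print(f"95/5 split after the jump:  ${split:.2f} per million tokens")
```

Under these made-up numbers the blended price falls even though the frontier price triples, because the frontier share of the workload is so small. Different placeholder prices would shift the result, so treat this as the shape of the argument and not a forecast.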

I'm still thinking through a lot of this, but here's where I currently land: the subsidy era ends, and what replaces it is a split — expensive frontier for the few who need it, cheap everything else for everyone who doesn't. Most people won't even notice.

Sources

- OpenAI inference costs revealed through leaked Microsoft revenue-share documents. TechCrunch

- OpenAI revenue of $13.1 billion in 2025, confirmed by CFO Sarah Friar. CNBC

- OpenAI projects $115 billion cumulative cash burn through 2029. Fortune

- Anthropic nears $20 billion revenue run rate by March 2026. Bloomberg, corroborated by CNBC

- Nick Turley (VP of Product, OpenAI) described ChatGPT's subscription pricing as something they "stumbled into." BG2 Pod, covered by Business Insider

- AI venture capital totaled $258.7 billion globally in 2025. OECD

- Stanford HAI 2025 AI Index documented a 280-fold decline in GPT-3.5-level inference costs. Stanford HAI

- Open-weight models lag frontier closed models by 3.5 months on average. Epoch AI

- Inference price decline of 50x per year, accelerating to 200x post-January 2024. Epoch AI

- ~10x annual cost decline for equivalent LLM performance ("LLMflation"). a16z

- Google reported 33x energy reduction per AI text prompt in 12 months. Google, preprint

- Speculative decoding: 2-3x inference speedup (Leviathan et al., ICML 2023). arXiv

- Continuous batching and PagedAttention (vLLM, SOSP 2023). arXiv

- Quantization quality retention: AWQ (MLSys 2024) and GPTQ (ICLR 2023)

- Inference cost-performance improvement of 5-10x per year. "The Price of Progress"

- Enterprise open-source LLM adoption declined from 19% to 13%. Menlo Ventures

- Gartner predicts inference on 1T-parameter LLMs will cost 90% less by 2030. Gartner