Most Companies Aren't Anywhere Near Ready for AI

It's not that companies aren't using AI—it's that they can't

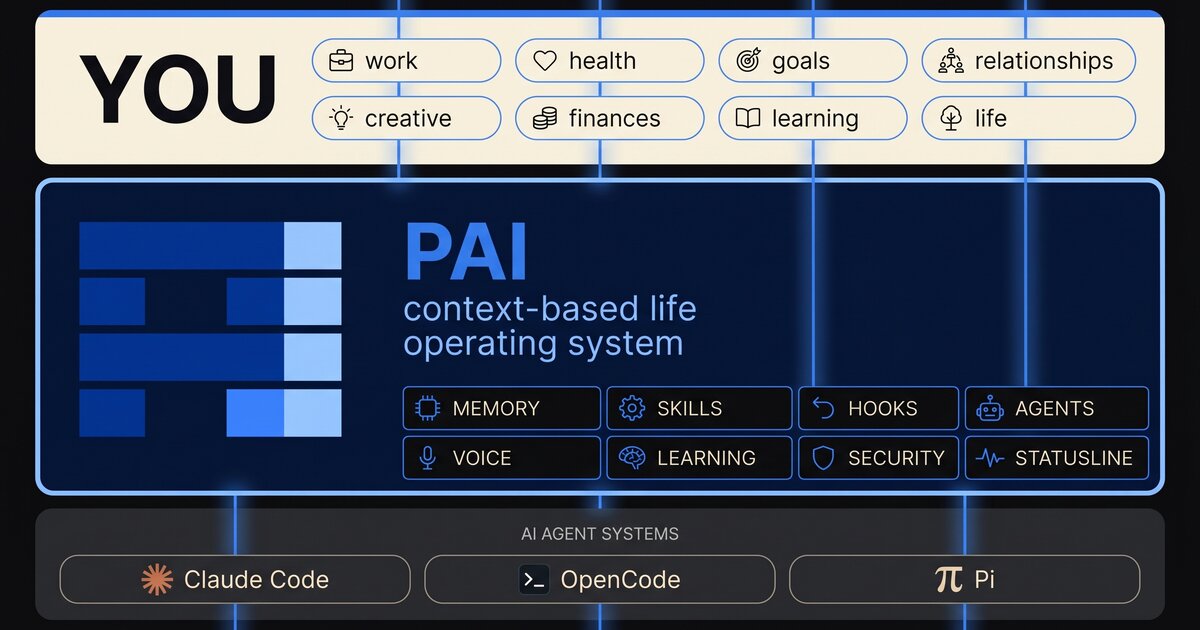

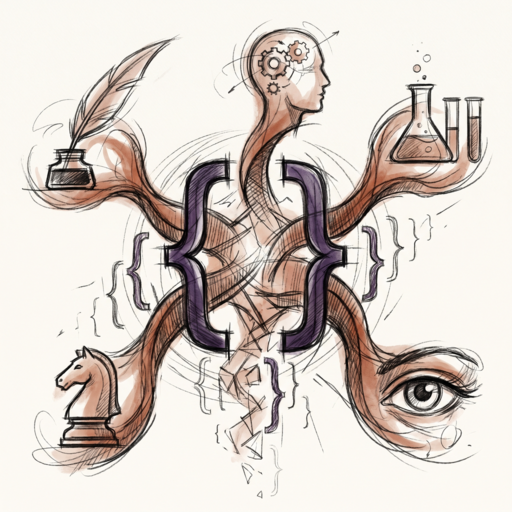

An open-source Life Operating System for your Digital Assistant

Companies always wanted to do the work themselves — AI just gave them the tools

Companies don't want to pay millions for the bottom 90% anymore — they'd rather hand the top 10% better tools

Hard-to-vary explanations, ideal state criteria, and what knowledge actually is — voice transcript, lightly cleaned

When models get better at coding, they get better at everything

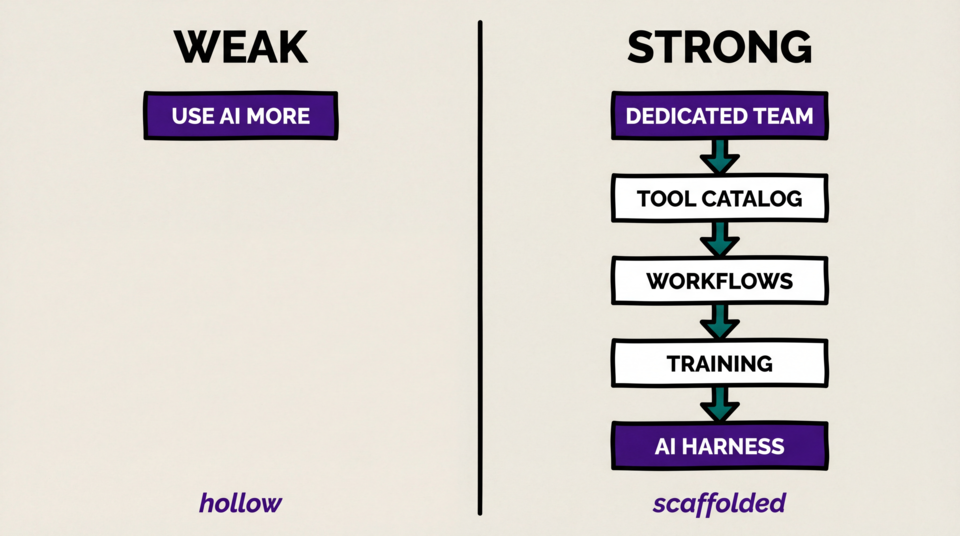

What separates the strong enterprise AI rollouts from the shockingly bad ones

Most companies have no good answer to the replacement question